Halted on Debut, Up Over 108% in a Day: Is Cerebras Really "the Next Nvidia"?

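- Core view: AI chip newcomer Cerebras (CBRS) touts its wafer-scale chip technology, claims to be 20 times faster than Nvidia, and saw its share price jump more than 100% on its first day of trading. Its core strengths are its ultra-large chip architecture and its close ties to OpenAI (including a $20 billion order and a potential equity stake), but it cannot replace Nvidia in the short term and is positioned more as a high-end niche player.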

- Key points:

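- IPO performance: Cerebras began trading last night and climbed from its $185 offering price to as high as $385, a gain of more than 108%. It has since pulled back to about $311, which is still a gain of more than 68%.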

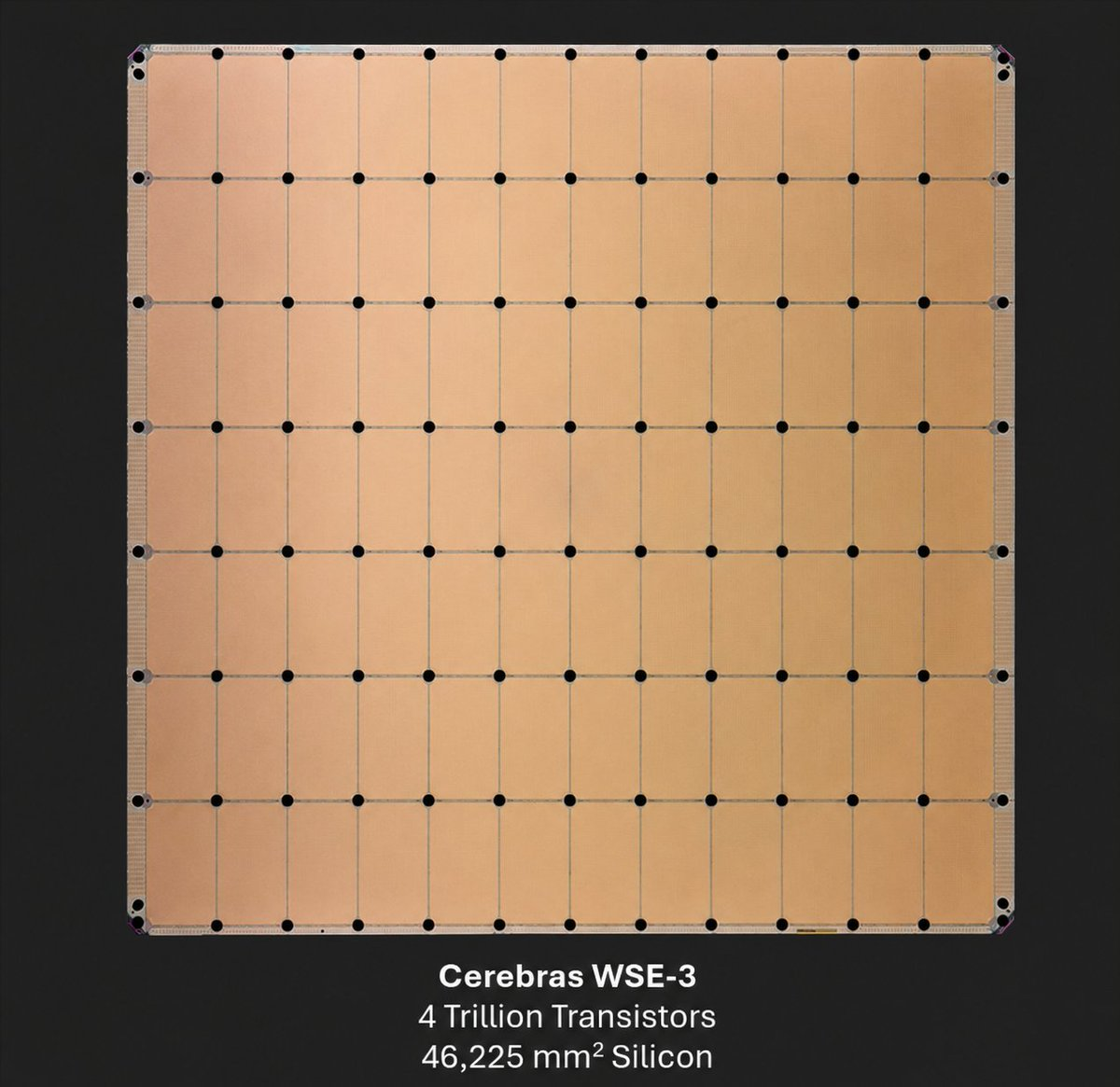

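- Technical differentiation: The flagship WSE-3 chip measures 46,225 mm² (about the size of a dinner plate) and packs 4 trillion transistors and 900,000 AI cores, delivering 125 petaflops of compute. Its single-wafer design avoids the interconnect bottlenecks of multi-GPU systems.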

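- Business results: 2025 revenue was $510 million (up 76% year over year), and the company turned profitable. It signed a compute framework agreement worth more than $20 billion with OpenAI, under which OpenAI plans to buy servers over three years and receive equity as part of the deal.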

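- Relationship with OpenAI: OpenAI co-founders Sam Altman and Greg Brockman were early angel investors in Cerebras. In December 2025, OpenAI provided a $1 billion working capital loan. According to the prospectus, OpenAI can obtain about 33.44 million warrants at an extremely low exercise price, which would give it a stake of 10% to 11% if exercised.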

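- Competitive limits: There are four main reasons Cerebras cannot replace Nvidia in the short term. The gap with the CUDA ecosystem is large (developers are hard to switch), its economies of scale are weak (Nvidia's 2025 revenue exceeded $100 billion versus $510 million for Cerebras), its chips are expensive to manufacture (wafer-scale chips are hard to yield and carry a high unit cost), and it faces direct competition from Groq, AMD, Google TPU, and others.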

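- Stock outlook: Buoyed by the AI boom and the shortage of computing power, the stock has room to rise further in the short term. Over the next two to three years, however, order conversion and shifts in inference demand will be the key variables, and a shortfall against expectations could put downward pressure on the share price.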

Original|Odaily Planet Daily (@OdailyChina)

Author|Wenser (@wenser 2010)

Last night, Cerebras (CBRS), carrying the moniker of "the next Nvidia," officially began trading. Shortly after, its price surged from the initial offering price of $185 to $350, hitting an intraday high of $385, a gain of over 108%. Although the stock price has since retreated to around $311, it still holds a gain of over 68%. Previously, Cerebras CEO Andrew Feldman told CNBC in an interview: "Our chip is the size of a dinner plate and is 20 times faster than Nvidia's chips."
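
The percentage gains quoted above follow directly from the three prices in the paragraph. As a quick check, here is a minimal Python sketch; the helper name `pct_gain` is illustrative and not taken from any source.

```python
def pct_gain(offer_price: float, price: float) -> float:
    """Return the percentage gain of `price` relative to `offer_price`."""
    return (price - offer_price) / offer_price * 100.0


# Prices as quoted in the article (US dollars).
OFFER = 185.0          # IPO offering price
INTRADAY_HIGH = 385.0  # intraday high on the first trading day
LATEST = 311.0         # approximate price after the pullback

high_gain = pct_gain(OFFER, INTRADAY_HIGH)
latest_gain = pct_gain(OFFER, LATEST)

print(f"gain at intraday high: {high_gain:.1f}%")
print(f"gain at latest price:  {latest_gain:.1f}%")
```

Both results land just above the "over 108%" and "over 68%" figures cited in the text.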

What gives this chipmaker, which has raised $5.5 billion, the confidence to make such bold claims about being "faster than Nvidia"? How did it secure a $20 billion order from OpenAI amidst intense competition? Will its stock price continue its upward trend in the short term? In this article, Odaily Planet Daily will provide its own answers to these questions.

Cerebras' Confidence in Challenging Nvidia: Opening a New AI World with Wafer-Scale Chips

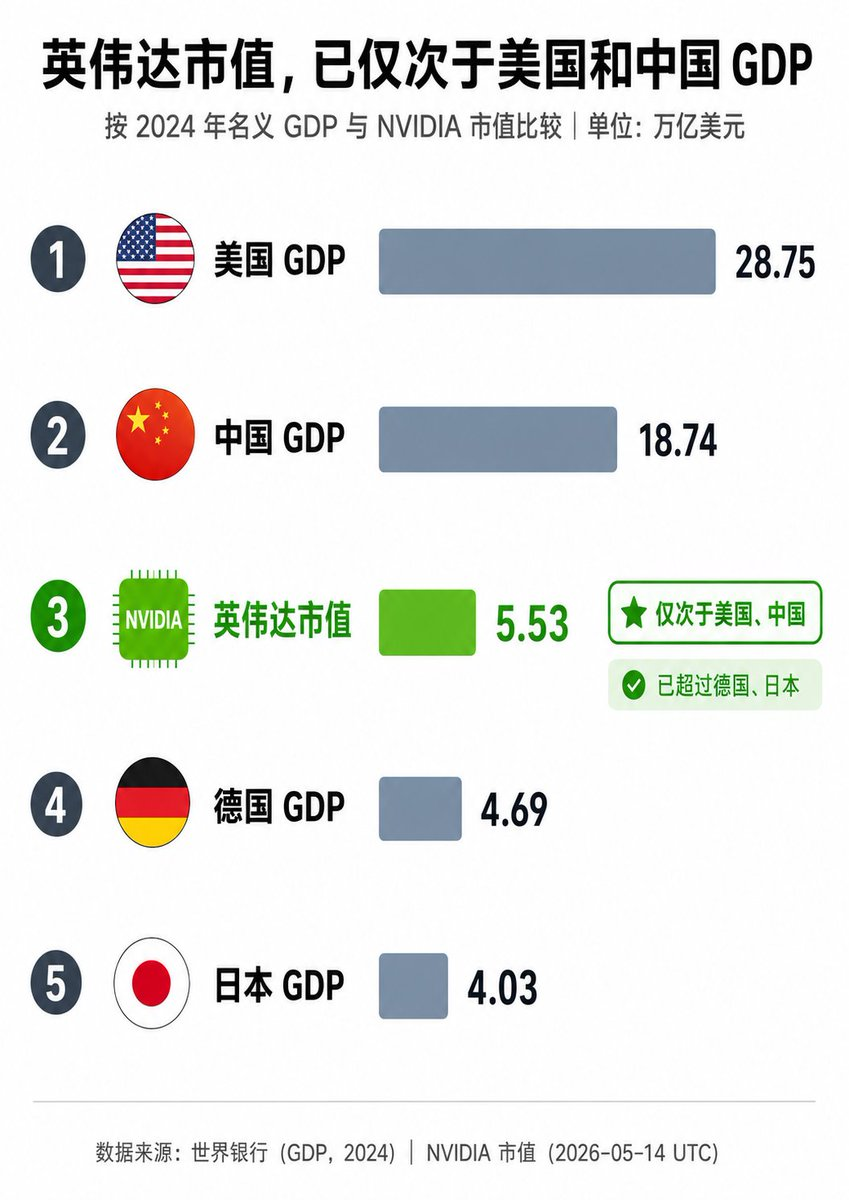

As the gap in AI computing power grows ever larger, surging market demand has propelled Nvidia to become the world's most valuable publicly traded company.

Recently, Nvidia's stock price hit new highs, pushing its market cap past $5.5 trillion at one point. Set against national GDP figures, that market cap would trail only the United States and China and far exceed major economies such as Germany and Japan, making Nvidia a company richer than most nations.

But unlike the "established giant" Nvidia, which has been around for decades, Cerebras (CBRS) is a relative newcomer in the chip manufacturing industry.

In 2016, industry veterans Andrew Feldman, Gary Lauterbach, Sean Lie, Michael James, and JP Fricker co-founded Cerebras Systems, headquartered in Sunnyvale, California. Unlike Nvidia, which builds general-purpose GPUs to address the broadest possible market, Cerebras is built around a single core innovation: the Wafer Scale Engine (WSE), currently the world's largest AI chip.

Cerebras' founding team of five (2022)

Its core products include:

- WSE-3: Area about 46,225 mm² (roughly the size of a dinner plate), containing 4 trillion transistors and 900,000 AI-optimized cores, delivering 125 petaflops of computing power. Unlike traditional GPUs, it creates a single massive processor from an entire wafer, avoiding the bottlenecks of multi-GPU interconnects. It features up to 44GB of on-chip SRAM and extremely high memory bandwidth.

- CS-3 System: An AI supercomputer based on the WSE-3, supporting both training and inference. Currently, Cerebras doesn't just sell chips; it also offers cloud services (Cerebras Inference), dedicated data centers, and technical support for on-premises deployment.
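
To put the WSE-3 headline figures in perspective, the sketch below derives a few per-area and per-core ratios from them. It uses only the numbers quoted above, and it assumes a square die, decimal units (1 GB = 1e6 KB), and an even spread of resources across cores. None of these assumptions comes from Cerebras documentation, so treat the results as rough orders of magnitude.

```python
import math

# Figures quoted in the article for the WSE-3.
AREA_MM2 = 46_225      # die area in mm^2
TRANSISTORS = 4e12     # 4 trillion transistors
CORES = 900_000        # AI-optimized cores
PEAK_PFLOPS = 125      # peak compute in petaflops
SRAM_GB = 44           # on-chip SRAM in GB (decimal units assumed)

# Edge length if the die were a perfect square (an assumption).
side_mm = math.sqrt(AREA_MM2)

# Naive averages: every transistor, byte, and flop is spread evenly.
transistors_per_mm2 = TRANSISTORS / AREA_MM2
transistors_per_core = TRANSISTORS / CORES
sram_kb_per_core = SRAM_GB * 1e6 / CORES      # 1 GB = 1e6 KB
gflops_per_core = PEAK_PFLOPS * 1e6 / CORES   # 1 PFLOPS = 1e6 GFLOPS

print(f"edge length:          {side_mm:.0f} mm")
print(f"transistors per mm^2: {transistors_per_mm2 / 1e6:.1f} million")
print(f"transistors per core: {transistors_per_core / 1e6:.2f} million")
print(f"SRAM per core:        {sram_kb_per_core:.1f} KB")
print(f"peak GFLOPS per core: {gflops_per_core:.1f}")
```

Under these assumptions the die is about 215 mm on a side, and each core has on the order of tens of kilobytes of local SRAM.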

In terms of business model, Cerebras primarily provides ultra-low latency inference for companies like OpenAI, Meta, Perplexity, Mistral, GSK, and the Mayo Clinic. In 2025, Cerebras generated annual revenue of $510 million (up 76% year-over-year), achieved profitability, and is backed by a massive order book (including a multi-year, multi-hundred megawatt computing contract with OpenAI).

Diagram of the Cerebras WSE-3 chip

On the day of its IPO, May 14th, Cerebras CEO Andrew Feldman gave a positive response on CNBC's Squawk Box regarding the company's operational status, technological moat, and future market direction:

- First, Feldman stated that the IPO was "the right way to fund our growth," emphasizing the company is mature and the public market can support significant growth opportunities. He called it the culmination of ten years of effort, expressed immense pride, and noted the market "understood our story and responded positively."

- Second, he repeatedly stressed that Cerebras is the only company in 70 years to successfully manufacture "giant chips," with all other attempts failing, hence "the technical moat is wide and deep." It was here he mentioned that Cerebras' chips are 58 times larger than competitors like Nvidia and run 15-20 times faster, drastically accelerating AI inference and training.

- Finally, addressing concerns about the sustainability of AI spending, Feldman stated that related demand is "enormous and continuously growing." He mentioned key partnerships with OpenAI, AWS, and others, and expressed optimism about the overall AI hardware landscape.

On a side note, similar to Musk and Anthropic betting on "space data centers" (recommended reading: Musk and Anthropic are going to space to find electricity), Feldman boldly predicted, "Data centers in space could very well become a reality within 15 years," showcasing his unwavering confidence in the long-term buildout and rapid expansion of AI infrastructure.

Thus, as a "speed geek" in the AI chip field, Cerebras has broken out by focusing on extreme performance for ultra-large models, becoming a strong challenger to Nvidia in large-model inference and hyperscale training.

In this regard, OpenAI's $20 billion order provides ample confidence for its development, and the relationship between the two goes far beyond that of a "chip manufacturer" and a "chip buyer."

The Complex Relationship Between Cerebras and OpenAI: Customer, Creditor, and Potential Major Shareholder

The connection between Cerebras and OpenAI has a long history. Beyond corporate cooperation, OpenAI co-founders Sam Altman and Greg Brockman were early angel investors in Cerebras, each holding a small number of shares. This may be a key reason for the deep, multi-faceted ties between the two companies today.

In December 2025, OpenAI provided Cerebras with a $1 billion working capital loan, making it a creditor of the company.

In January of this year, the "750MW Inference Compute Procurement Agreement" between Cerebras and OpenAI was officially announced, with subsequent emphasis on an option to expand the cooperation to 2GW. This news was confirmed again in April. According to media reports, OpenAI plans to spend over $20 billion in the next three years to purchase servers powered by Cerebras chips, and will receive equity in the company as part of the deal. This made OpenAI Cerebras' largest customer, bar none.

Image source: @Xingpt

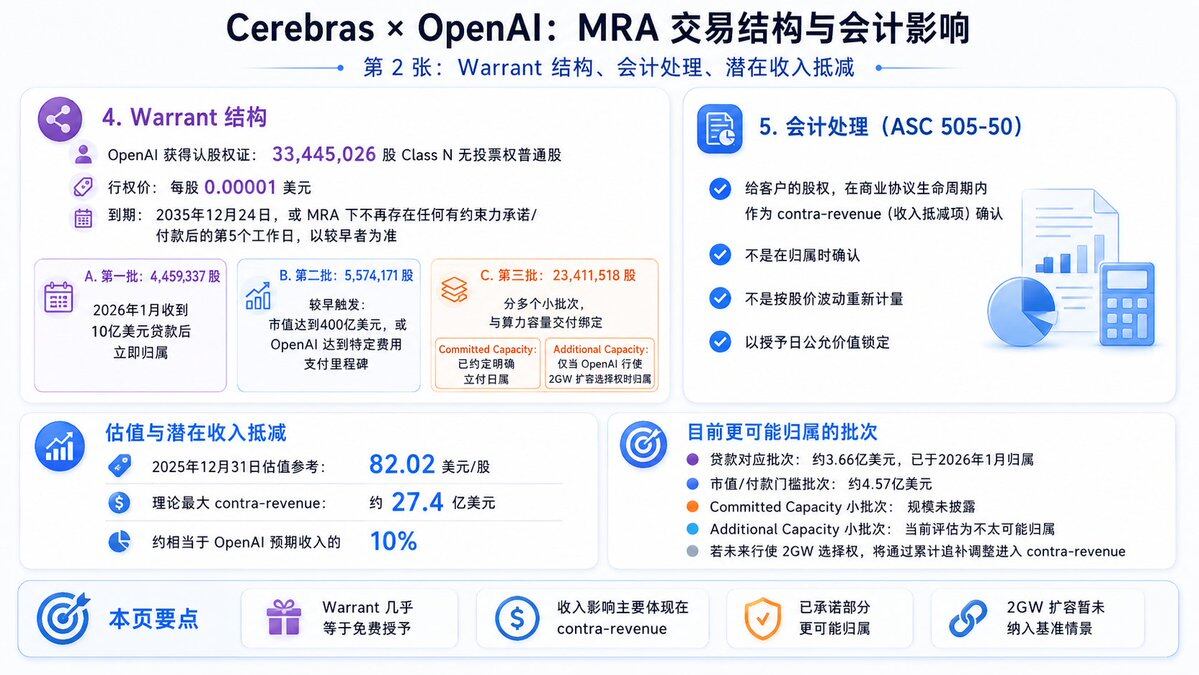

Cerebras' subsequent S-1 prospectus and IPO filing documents show that OpenAI is expected to obtain approximately 33.44 million Cerebras warrants at an extremely low exercise price of $0.00001 per share. Some of the warrants have vesting conditions, including compute delivery dates and milestone requirements like Cerebras' market cap exceeding $40 billion.

If all warrants are exercised and all conditions are met, OpenAI could obtain approximately 10%-11% of Cerebras' equity (the exact percentage depends on the total share count post-IPO). At the valuation of around $56 billion implied by the IPO pricing, that stake is worth roughly $5.6 billion to $6.2 billion; at the current market cap (nearly $95 billion after the first day of trading), it is worth over $10.3 billion. Although the warrants have not all vested or been exercised, OpenAI can fairly be called a "potential major shareholder of Cerebras."
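
The warrant arithmetic can be reproduced with a few lines of Python. This is a rough sketch under stated assumptions, not a valuation model. It treats each warrant as worth its intrinsic value (share price minus exercise price), and it backs out an implied share count by dividing the quoted market cap by the quoted share price, a figure the article does not give. The function names are illustrative.

```python
# Figures quoted in the article.
WARRANTS = 33.44e6          # warrants OpenAI may obtain
STRIKE = 0.00001            # exercise price per share, USD
OFFER_PRICE = 185.0         # IPO price, USD
LATEST_PRICE = 311.0        # approximate price after day one, USD
LATEST_MARKET_CAP = 95e9    # approximate market cap after day one, USD


def intrinsic_value(shares: float, price: float, strike: float) -> float:
    """Value of the warrants if exercised immediately at `price`."""
    return shares * max(price - strike, 0.0)


def stake_range(warrants: float, market_cap: float, price: float):
    """Return (diluted, undiluted) ownership fractions.

    Assumption: implied share count = market cap / share price.
    'Diluted' adds the newly issued warrant shares to the denominator.
    """
    implied_shares = market_cap / price
    undiluted = warrants / implied_shares
    diluted = warrants / (implied_shares + warrants)
    return diluted, undiluted


value_at_offer = intrinsic_value(WARRANTS, OFFER_PRICE, STRIKE)
value_at_latest = intrinsic_value(WARRANTS, LATEST_PRICE, STRIKE)
low_stake, high_stake = stake_range(WARRANTS, LATEST_MARKET_CAP, LATEST_PRICE)

print(f"value at offer price:  ${value_at_offer / 1e9:.2f}B")
print(f"value at latest price: ${value_at_latest / 1e9:.2f}B")
print(f"implied stake: {low_stake:.1%} to {high_stake:.1%}")
```

On these inputs the warrants come to roughly $6.2 billion at the offer price and $10.4 billion at $311, and the implied stake falls close to the 10%-11% range cited from the prospectus. The exercise price is so small that it has no visible effect on the result.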

Image source: @Xingpt

Whether Cerebras Can Become the Next Nvidia Remains Uncertain, But the Stock Price May Continue to Rise in the Short Term

Returning to the question in the headline: can Cerebras become the next Nvidia?

From an industry landscape perspective, the answer is no, at least in the short term. There are four main reasons:

- First, the ecosystem gap is huge: Nvidia dominates AI chips, and its CUDA software stack is the de facto industry standard, with countless developers, frameworks, and toolchains reliant on it. While Cerebras has its own software stack, it is far from matching CUDA's maturity and compatibility, so switching costs are extremely high for many developers and enterprises.

- Second, the difference in scale and diversification strategy: In 2025, Nvidia's revenue exceeded $100 billion, and its GPUs cover scenarios ranging from training and inference to graphics, automotive, and data centers. Jensen Huang boldly stated at CES 2026, "The AI chip and infrastructure market could reach $1 trillion by 2027," with Nvidia holding the largest piece of the pie. In contrast, Cerebras' 2025 revenue was only $510 million, and its business is concentrated in a handful of large customers, above all OpenAI, making it less resilient to risk.

- Third, chip manufacturing and cost control differ: Large AI chips bring not only faster speeds but also higher manufacturing difficulty and cost. Each Cerebras wafer-scale chip consumes an entire wafer, which makes yield a significant challenge at TSMC and pushes unit costs up (a single CS-3 system costs much more than a single GPU). Nvidia, by contrast, can cut dozens of GPUs from a single wafer and so benefits more from economies of scale.

- Fourth, the competitive pressures differ: Unlike Nvidia, which holds a dominant position, Cerebras faces direct competition from numerous players like Groq, AMD, Google TPU, and AWS Trainium. Although its momentum is good, it is constrained by time, capital, and resources, and its current position is closer to a "high-end niche player" than a "market dominator."
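
The yield point in the third reason can be illustrated with the Poisson yield model, a standard first-order approximation in which the chance that a die has zero defects falls exponentially with its area. The article does not use this model, and the sketch below is not Cerebras' or TSMC's actual method. The defect density and the 800 mm² GPU die area are hypothetical values chosen for illustration; only the WSE-3 area comes from the article. The model also ignores any redundancy or defect tolerance a real design may build in.

```python
import math


def poisson_yield(die_area_mm2: float, defects_per_cm2: float) -> float:
    """Probability that a die of the given area has zero defects.

    Poisson model: Y = exp(-D * A), with A in cm^2 and D in defects/cm^2.
    """
    area_cm2 = die_area_mm2 / 100.0  # 1 cm^2 = 100 mm^2
    return math.exp(-defects_per_cm2 * area_cm2)


WSE3_AREA_MM2 = 46_225     # from the article
GPU_DIE_AREA_MM2 = 800     # hypothetical large GPU die, for comparison only
DEFECT_DENSITY = 0.1       # hypothetical defects per cm^2

gpu_yield = poisson_yield(GPU_DIE_AREA_MM2, DEFECT_DENSITY)
wse_yield = poisson_yield(WSE3_AREA_MM2, DEFECT_DENSITY)

print(f"defect-free probability, 800 mm^2 die: {gpu_yield:.1%}")
print(f"defect-free probability, wafer-scale:  {wse_yield:.2e}")
```

Under these assumed numbers a conventional large die comes out defect-free a meaningful fraction of the time, while a fully defect-free wafer-scale die is effectively impossible. This suggests why yield is singled out as a central manufacturing challenge for the wafer-scale approach.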

Based on the above, Cerebras cannot grow into an industry giant like Nvidia in the short term, nor can it disrupt the existing competitive landscape. Its per-share price, however, already exceeds Nvidia's. Furthermore, thanks to the AI boom and the widening computing power gap, Cerebras' stock price and market cap may still have some upside before OpenAI and Anthropic go public this year.

In the next 2-3 years, if it can successfully convert orders from OpenAI, AWS, etc., into actual revenue, Cerebras' stock price could climb further. However, if order performance falls short of market expectations, or if demand for AI model inference changes, its stock price will face significant downward pressure.

In summary, within 1-3 years, Cerebras is unlikely to replace Nvidia but can carve out a significant share in the AI infrastructure niche, becoming the "King of AI Chip Speed." As for the longer-term competitive landscape, time will tell.
