a16z partner refutes AI doomsday theories: Don't panic, technological change will grow the pie

- Core Argument: The "doomsday" theories about AI causing mass unemployment stem from the "lump of labor fallacy," ignoring the historical pattern where humanity's infinite needs and technological progress create new economic ecosystems. AI will boost productivity, expand the economic pie, and create more, higher-value jobs.

- Key Elements:

- Historical Paradox: Transformations like agricultural mechanization and electrification once drastically reduced old jobs but ultimately, by unleashing productivity, gave rise to larger and more diverse new industries and employment opportunities.

- Data Evidence: Studies show that AI adoption currently has a limited impact on total enterprise employment, manifesting more as "augmentation" rather than "substitution"; Goldman Sachs estimates the augmentation effect far outweighs the substitution effect.

- Real-World Examples: After technology disrupted industries like travel agencies and bookkeeping, displaced workers moved into new fields, while the travel agents who remained earned higher pay thanks to increased productivity; past technology waves have also created entirely new roles, such as cloud migration specialists.

- Market Signals: On earnings calls, "AI as an enhancement" is mentioned roughly 8 times as often as "AI as a replacement"; demand for roles like software engineers and product managers continues to grow, likely helped by AI-driven efficiency gains.

- Trend Assessment: The emergence of new enterprises, an explosion in application development, and a leap in data concentration in the robotics field indicate that AI is ushering in a new era for knowledge work, not ending it.

Original Author: David George

Original Translation: Felix, PANews

Editor's Note: Currently, the AI "doomsday" narrative seems to dominate public discourse, with fears of "AI taking jobs" and "unemployment" spreading globally. People from various sectors are also brainstorming solutions for the revolutionary changes AI is about to bring. However, a16z General Partner David George argues that this "doomsday" view is completely unfounded, lacking evidence, imagination, and an understanding of humanity. The following is the full text.

The "permanent underclass" theory proposed by AI alarmists is unconvincing. This is nothing new; it's just a repackaged "lump of labor fallacy."

The "lump of labor fallacy" claims that the total amount of work to be done in the world is fixed. It assumes a zero-sum game between existing workers and any person or thing (be it other workers, machines, or now AI) that could potentially do the same work. If the total amount of useful work to be done is fixed, then if AI does more, humans must inevitably do less.

The problem with this premise is that it defies everything we know about people, markets, and economics. Human needs and desires are anything but fixed. Nearly a century ago, Keynes predicted that automation would lead to a 15-hour work week, but history proved him wrong. He was right that automation would create a "surplus of labor," but instead of simply working less, we found new and different productive activities to fill our time.

To be sure, AI will displace some jobs and compress certain roles (and there's evidence this may already be happening). The shape of the labor market will change, as it inevitably does with every transformative technology. However, the notion that AI will lead to permanent, economy-wide unemployment is marketing hype, bad economics, and a profound ignorance of history. On the contrary, increased productivity should increase the demand for labor, because labor becomes more valuable.

Here are our reasons.

"Humanity is Doomed?" Give Me a Break

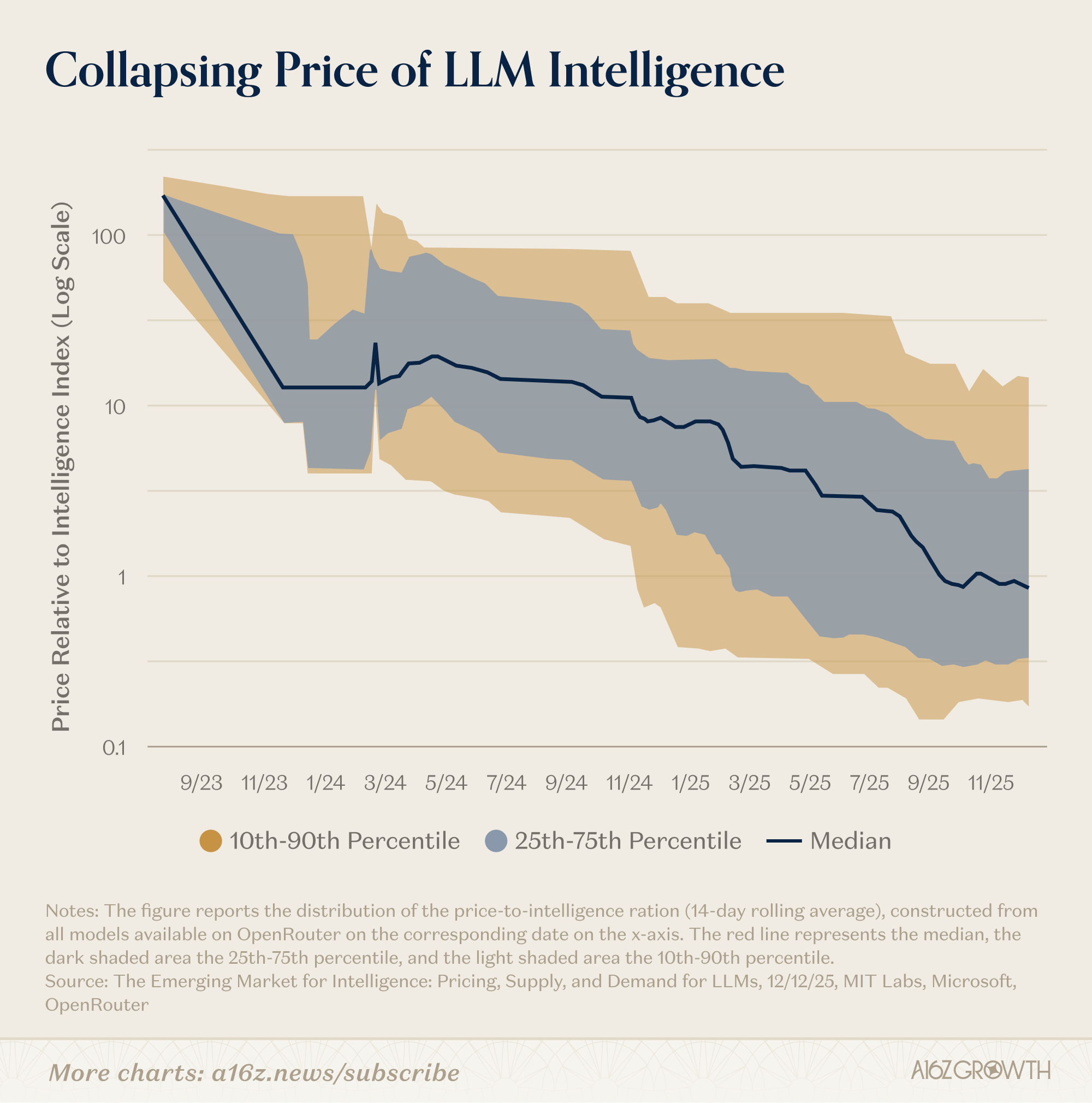

We agree with the "doomsayers" on one point: the cost of cognition is plummeting. AI is becoming increasingly adept at tasks that were, until recently, considered the exclusive domain of the human brain.

"Doomsayers" argue: "If AI can think for us, humanity's 'moat' disappears, and our ultimate value drops to zero." Humanity is finished. Apparently, we've already done all the thinking that needs or wants to be done, and now AI will take on an increasing cognitive load, leading humanity to gradual obsolescence.

In reality, precedent (and intuition) suggests that when the cost of a powerful input falls, the economy doesn't stagnate. Costs fall, quality improves, speed increases, new products become viable, and demand expands outward. Jevons paradox strikes again: when a resource becomes cheaper to use, total consumption of it tends to rise rather than fall. When fossil fuels first made energy cheap and abundant, we didn't just put whalers and woodcutters out of work; we invented plastics.

Contrary to the "doomsayers'" view, we have every reason to expect a similar impact from AI. As AI takes on an ever-growing share of the cognitive load, humans will be freed up to explore frontiers grander than ever before.

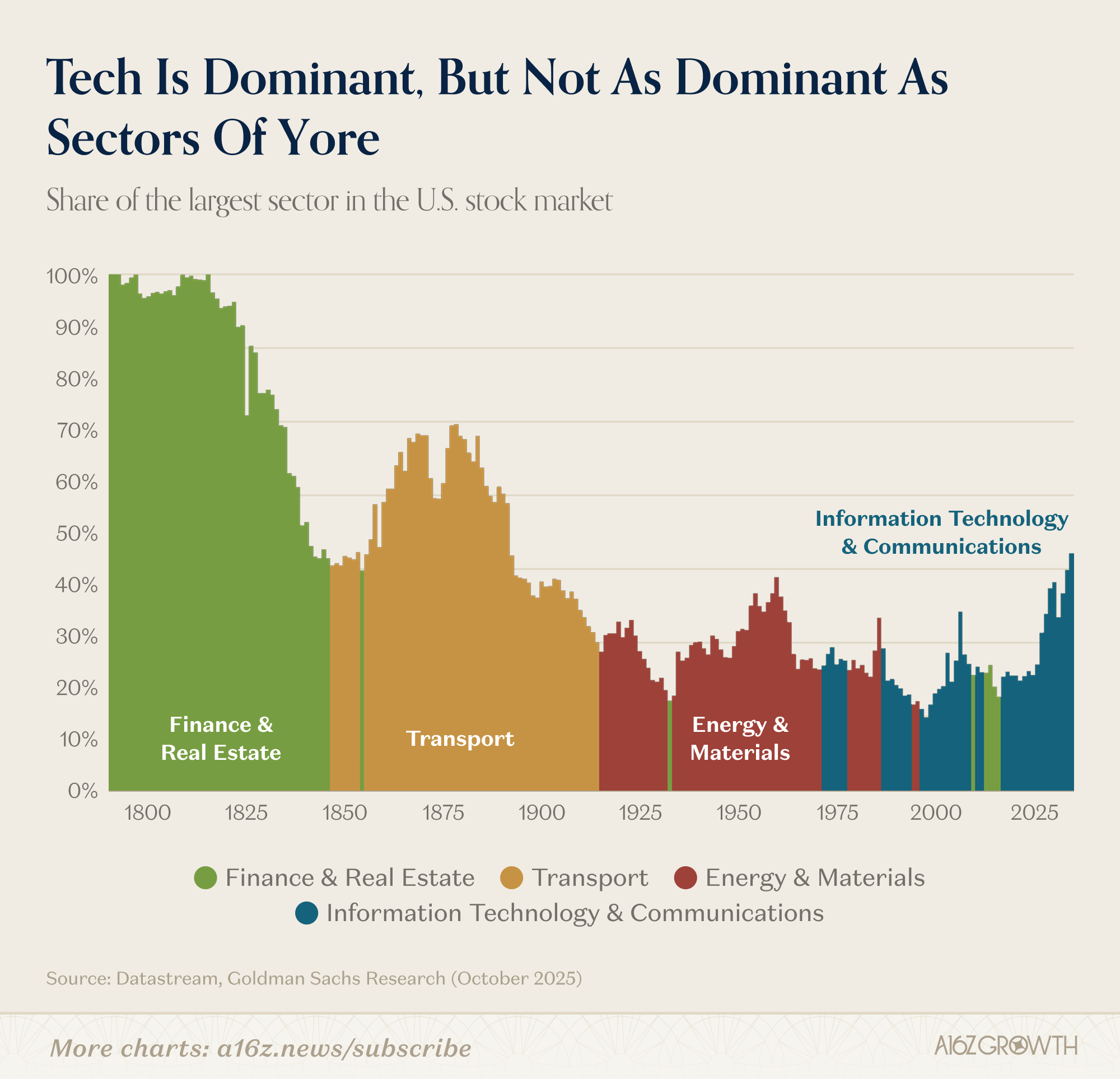

Historically, technological change has invariably made the economic pie bigger.

Each "dominant economic sector" has been superseded by an even larger successor… which, in turn, further expanded the economy's size.

Today's tech sector is far larger than finance, railroads, or manufacturing ever were, yet it still represents a relatively small share of the overall economy or market. Productivity gains are far from a negative-sum game; they are a powerful positive-sum force. Delegating so much work to machines ultimately results in a larger, more diverse, and more complex labor market and economy.

The "doomsayers" want you to ignore the history of innovation, fixate on the dramatic fall in cognitive costs, and take that as the whole truth. They see task substitution and stop thinking.

"We'll increase cognitive output tenfold, but we won't engage in more thinking; we'll pat our bellies, take an early lunch, and everyone else will do the same." This statement reflects not only a profound lack of imagination but also a failure to observe basic facts. The "doomsayers" call this "realism," but it's simply not going to happen.

The Failure of Luddism

(PANews Note: Luddism refers to the early 19th-century social movement in England where workers protested against the Industrial Revolution by destroying industrial machinery, fearing worsening working conditions and unemployment.)

Let's look at what actually happened when massive productivity leaps swept through the economy.

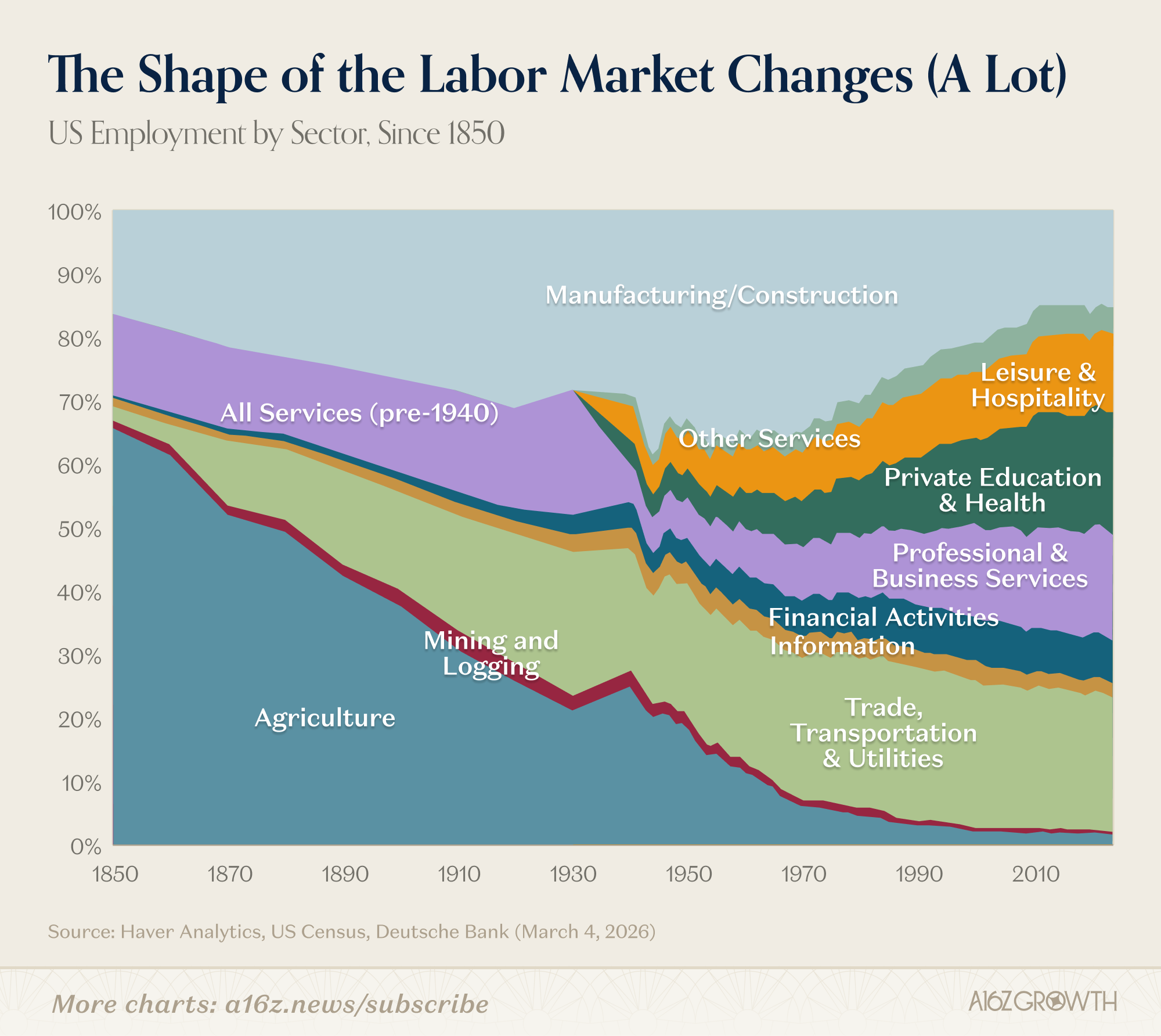

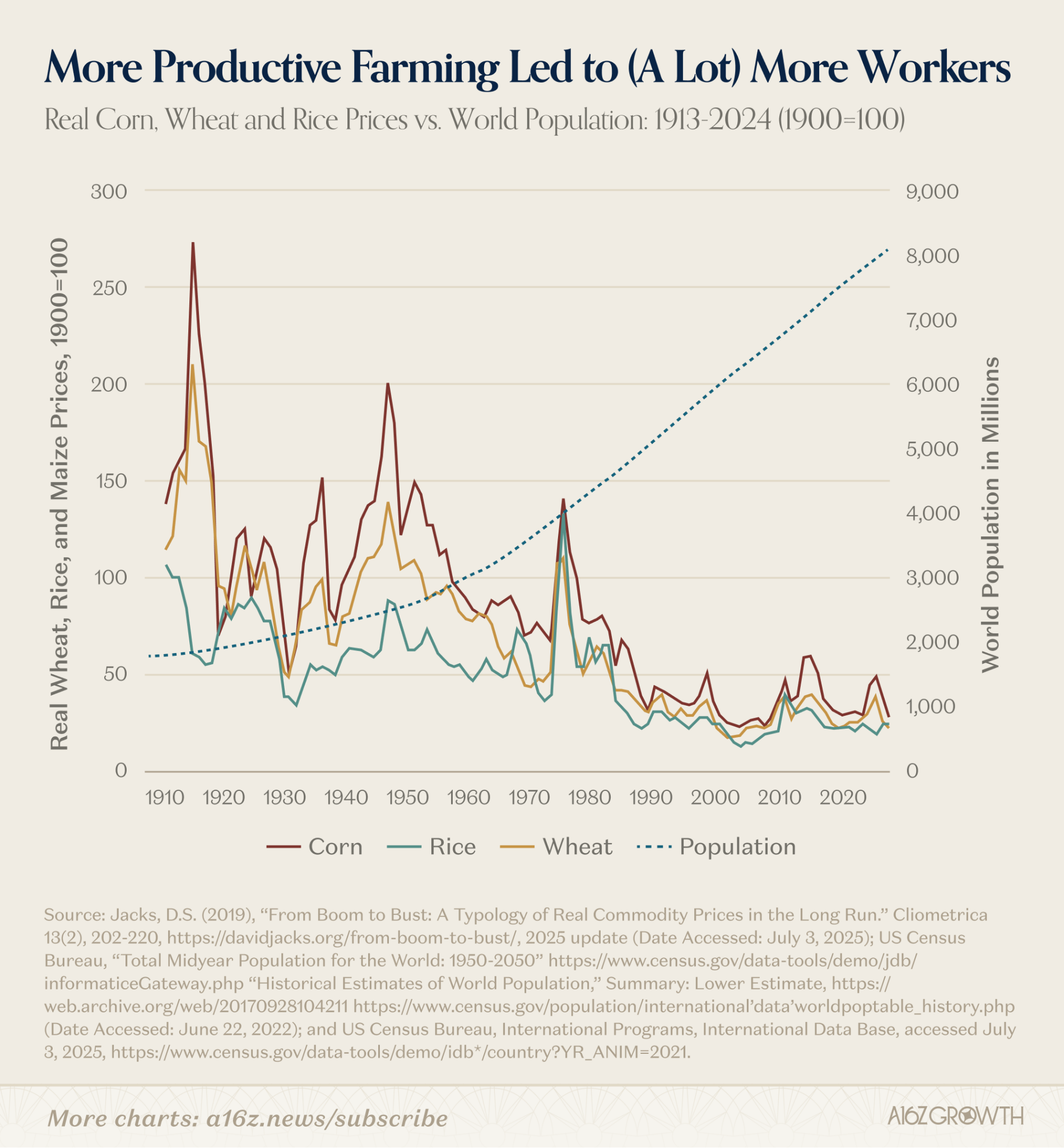

Agriculture

At the beginning of the 20th century, before agricultural mechanization was widespread, about one-third of the U.S. workforce was employed in agriculture. By 2017, this had fallen to about 2%.

If automation led to permanent unemployment, the tractor should have utterly destroyed the labor market. It didn't. Agricultural output nearly tripled, supporting a much larger population, and farm workers, far from becoming permanently unemployed, flowed into new industries and workplaces: factories, stores, offices, hospitals, laboratories, and eventually the service and software sectors.

So, yes, one can argue that technology disrupted the career prospects of the average farm worker, but it simultaneously unlocked a surplus of global labor (and resources) and gave birth to an entirely new economic system.

Electrification

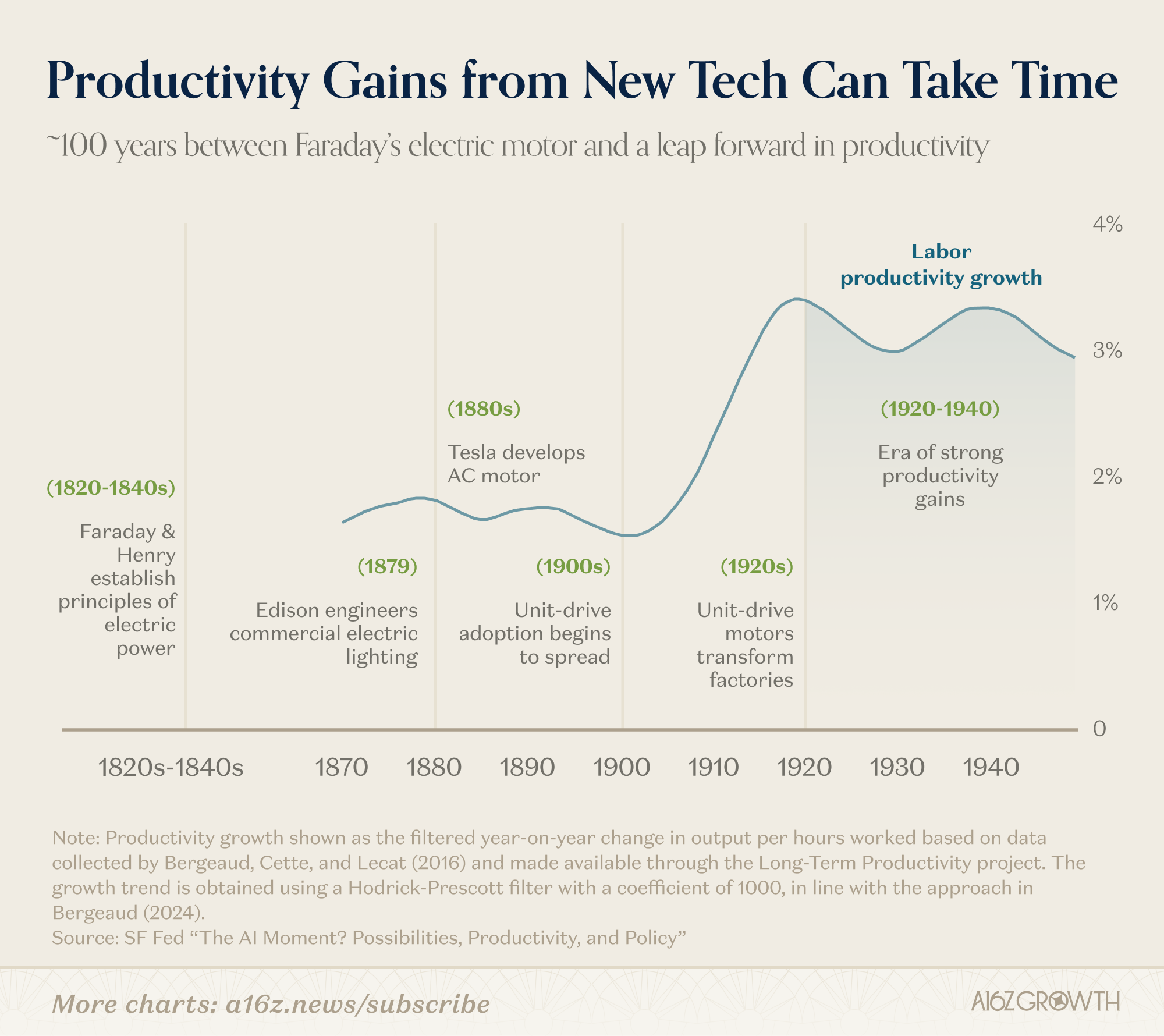

The story of electricity development follows a similar pattern.

Electrification wasn't just swapping one energy source for another. It replaced drive shafts and belts with independent motors, forcing factories to reorganize around entirely new workflows, and spawned entirely new categories of consumer and industrial goods.

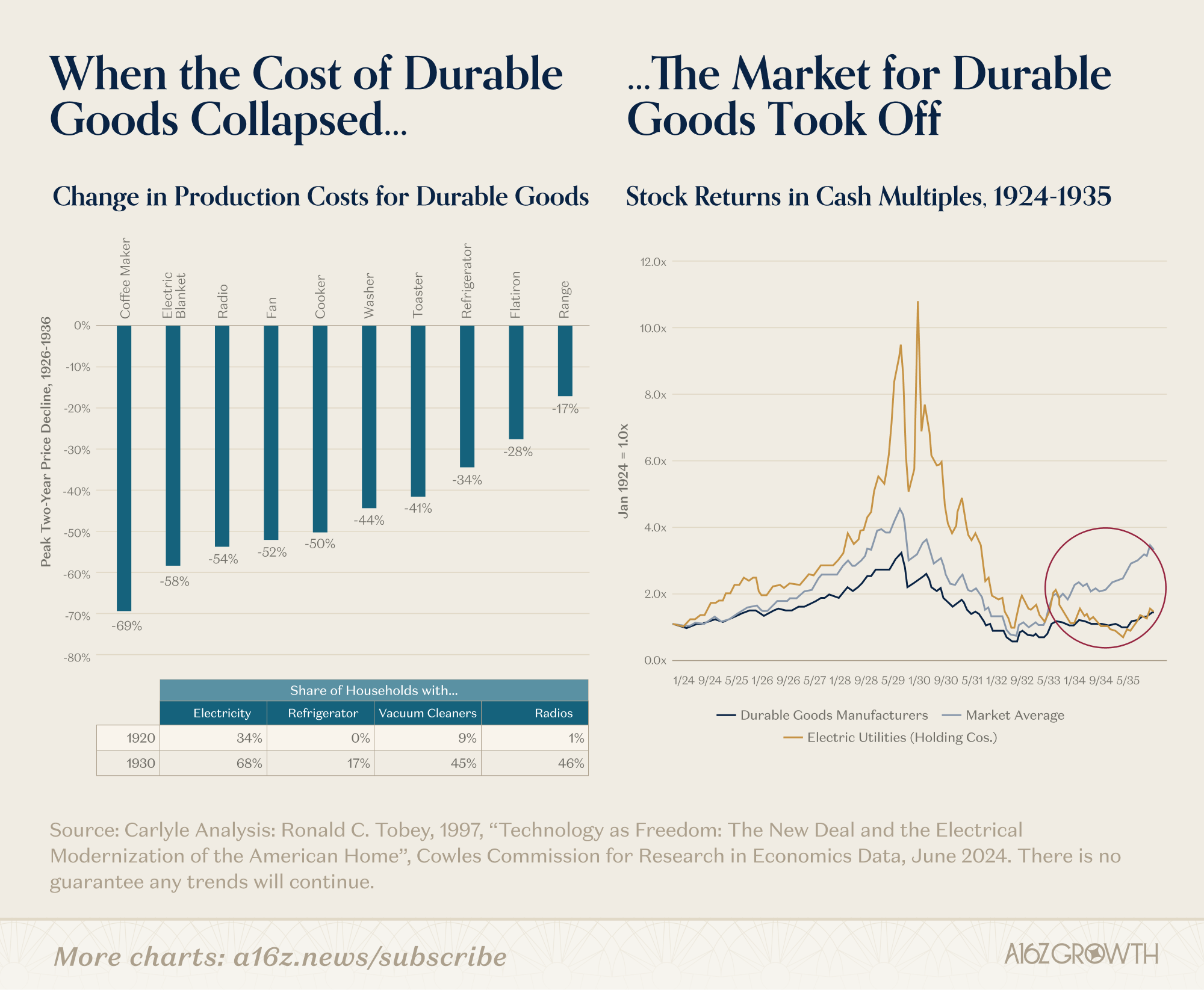

This is exactly what we expect during the successive phases of a technological revolution, as Carlota Perez documents in "Technological Revolutions and Financial Capital": massive upfront investment driven by financial capital, a sharp decline in the cost of durable goods, and a subsequent generation of prosperity for durable goods manufacturers.

Electricity didn't deliver its productivity gains overnight. In the early 20th century, electricity supplied only about 5% of the power used to drive machinery in U.S. factories, and fewer than 10% of homes had electricity.

By 1930, electricity supplied nearly 80% of manufacturing power, and labor productivity doubled over the following decades.

Far from reducing the demand for labor, higher productivity led to more manufacturing, more salespeople, more credit, and more business activity, not to mention the knock-on effects of labor-saving devices like washing machines and cars. By freeing up time, these devices let more people take on higher-value work that had previously been out of reach.

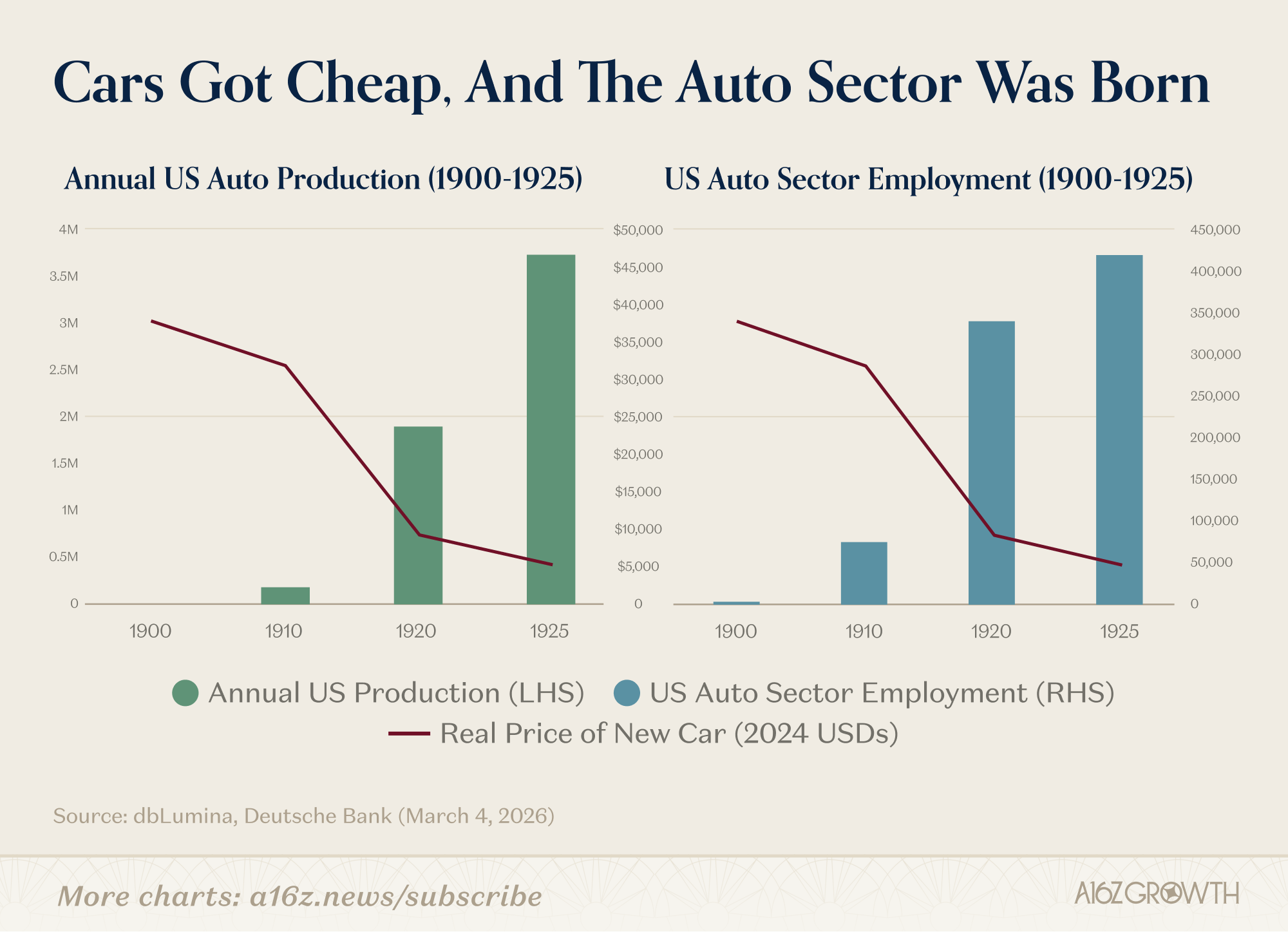

As car prices fell, both car production and employment exploded.

This is what a truly general-purpose technology does: it reorganizes the economy and expands the frontier of useful work.

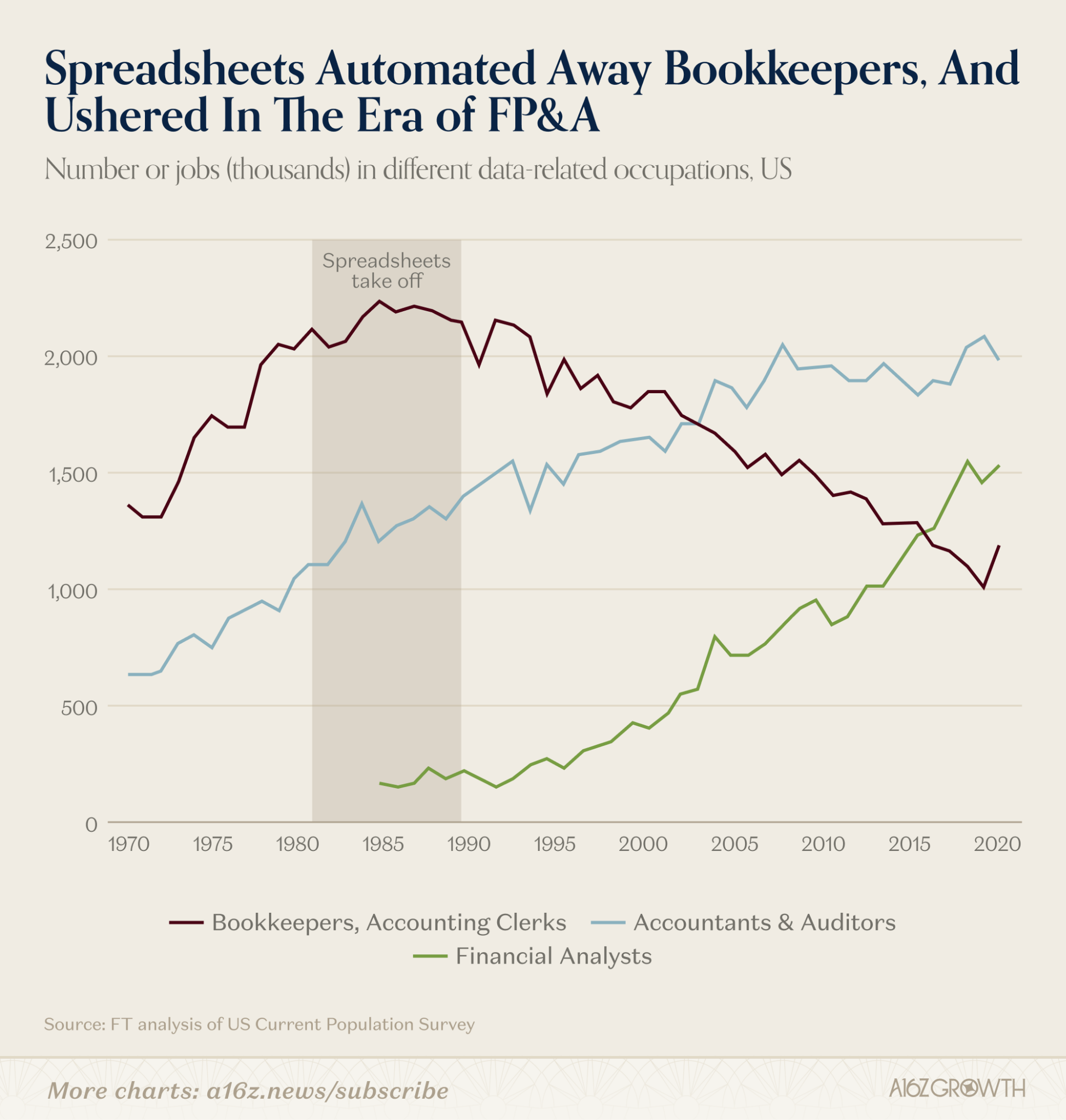

We see this time and time again. Did VisiCalc and Excel end careers in accounting and finance? Absolutely not. Far more efficient spreadsheet software did reduce the need for bookkeepers, but it also led to a surge in financial analysis work and birthed the entire Financial Planning & Analysis (FP&A) industry.

We lost about 1 million "bookkeepers" but gained about 1.5 million "financial analysts."

New Service Sector Jobs

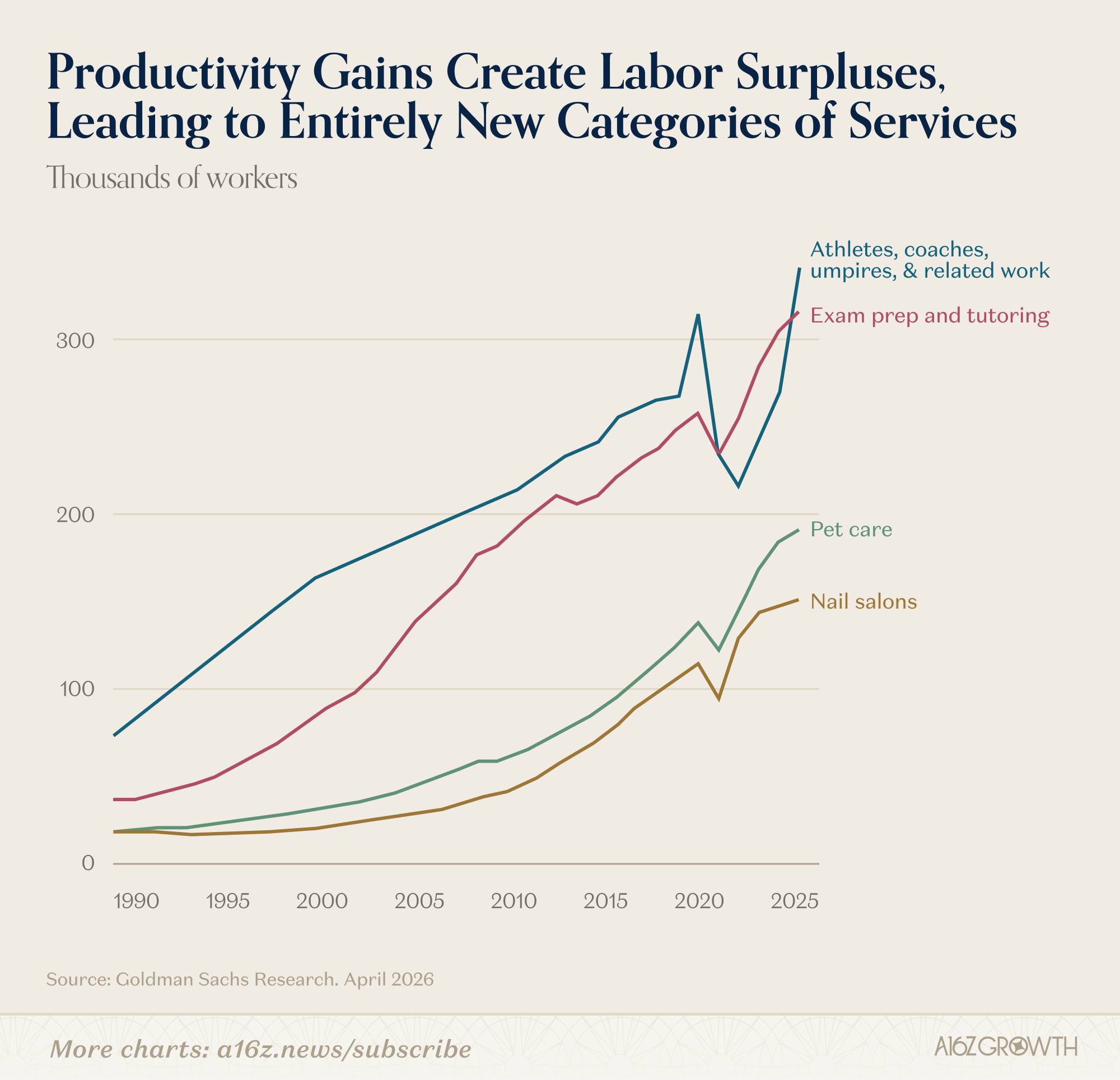

Of course, job displacement doesn't always lead to job growth in related economic sectors. Sometimes, productivity gains translate into new jobs in entirely unrelated industries.

But what if AI means some people become incredibly wealthy while others are left far behind?

At the very least, those ultra-rich will have to spend their money somewhere, just as they have in the past, creating entirely new service industries from scratch:

Massive productivity gains and the resulting wealth creation spawned entirely new fields of work that might never have emerged without income growth and an increased labor supply (even though they were technically feasible as early as the 1990s). Whatever one thinks of the service industries catering to the wealthy, the ultimate outcome benefited everyone, as increased demand led to significant rises in median wages (thus creating more "affluent" people).

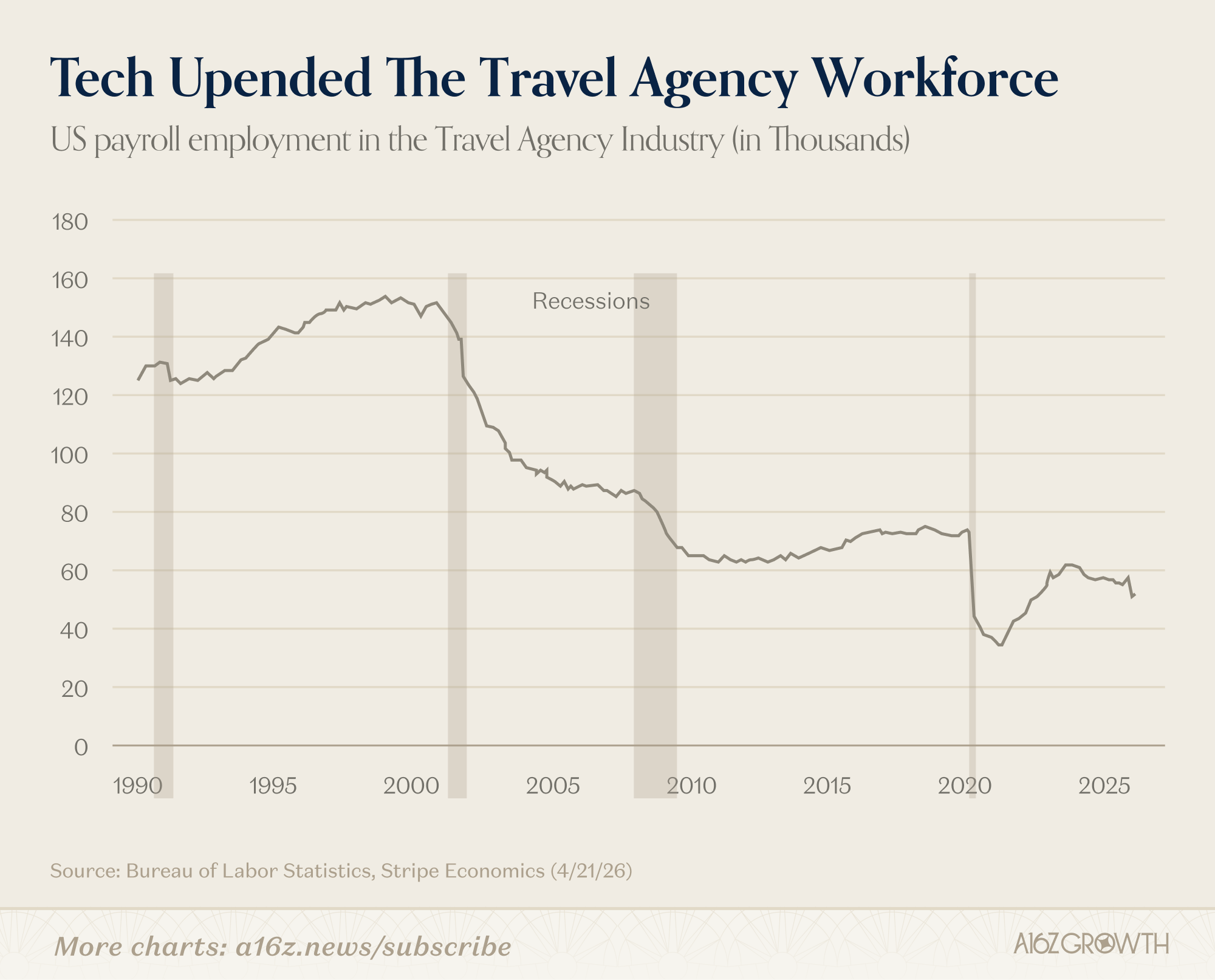

Stripe's in-house economist, Ernie Tedeschi, provides a comprehensive case study of how technology disrupted, transformed, and reshaped the profession of travel agents.

Did technology reduce demand for travel agents? The answer is yes.

Today, travel agent employment is about half of what it was around 2000, almost certainly because of technological advances.

So, does this mean technology killed jobs? The answer is no, because displaced travel agents didn't become permanently unemployed. They found work in other sectors of the economy, and today's overall employment-to-population ratio is roughly the same as it was in 2000 (adjusted for an aging population).

At the same time, for those who remained in the now tech-enabled travel agent industry, increased productivity meant higher wages than ever before:

"At their peak in 2000, travel agents' average weekly wages were 87% of the overall average weekly wage. By 2025, this had reached 99%, meaning that travel agent wages have grown faster than the rest of the private sector during this period."

Therefore, even though technology did cut travel agent employment, the overall employment rate for the working-age population stayed roughly the same, and the travel agents who remain are better off than ever.

Augmentation > Replacement (And Jobs That Don't Exist Yet)

This last point is crucial and demonstrates again that the "doomsayers" are only telling a small part of the story.

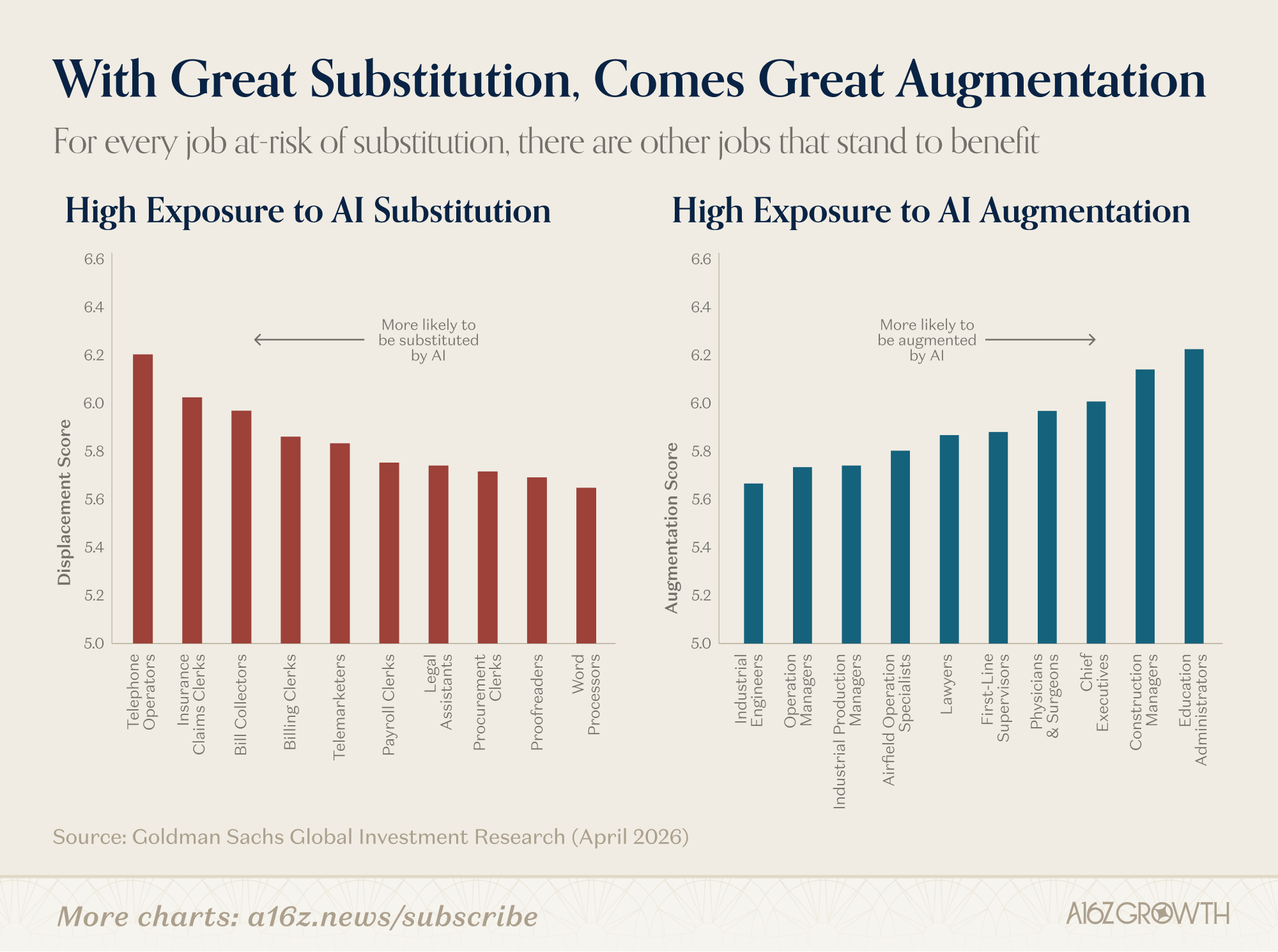

For some jobs, AI poses an existential threat. That's true. But for other jobs, AI acts as a multiplier: making those jobs more valuable. For every job at risk of AI replacement, there are other jobs poised to benefit:

Goldman Sachs estimates the "AI replacement" effect is far smaller than the "AI augmentation" effect.

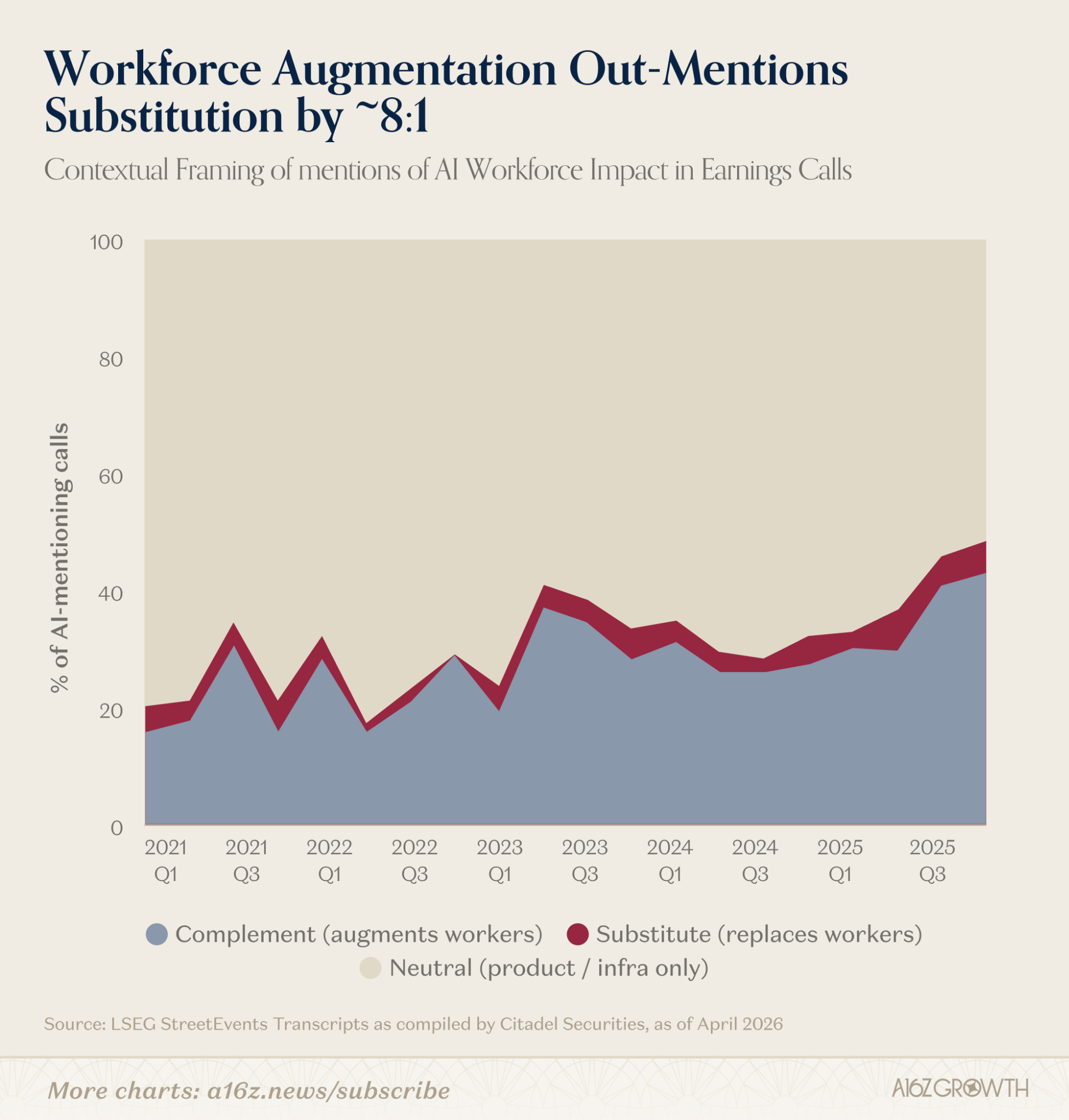

It's also worth noting that management teams seem more focused on augmentation than replacement:

To date, mentions of "AI as augmentation" on earnings calls outnumber mentions of "AI as replacement" by roughly 8 to 1.

Although Goldman Sachs didn't even include software engineers on its list of "augmented" occupations, software engineers may be the best example of AI-augmented talent.

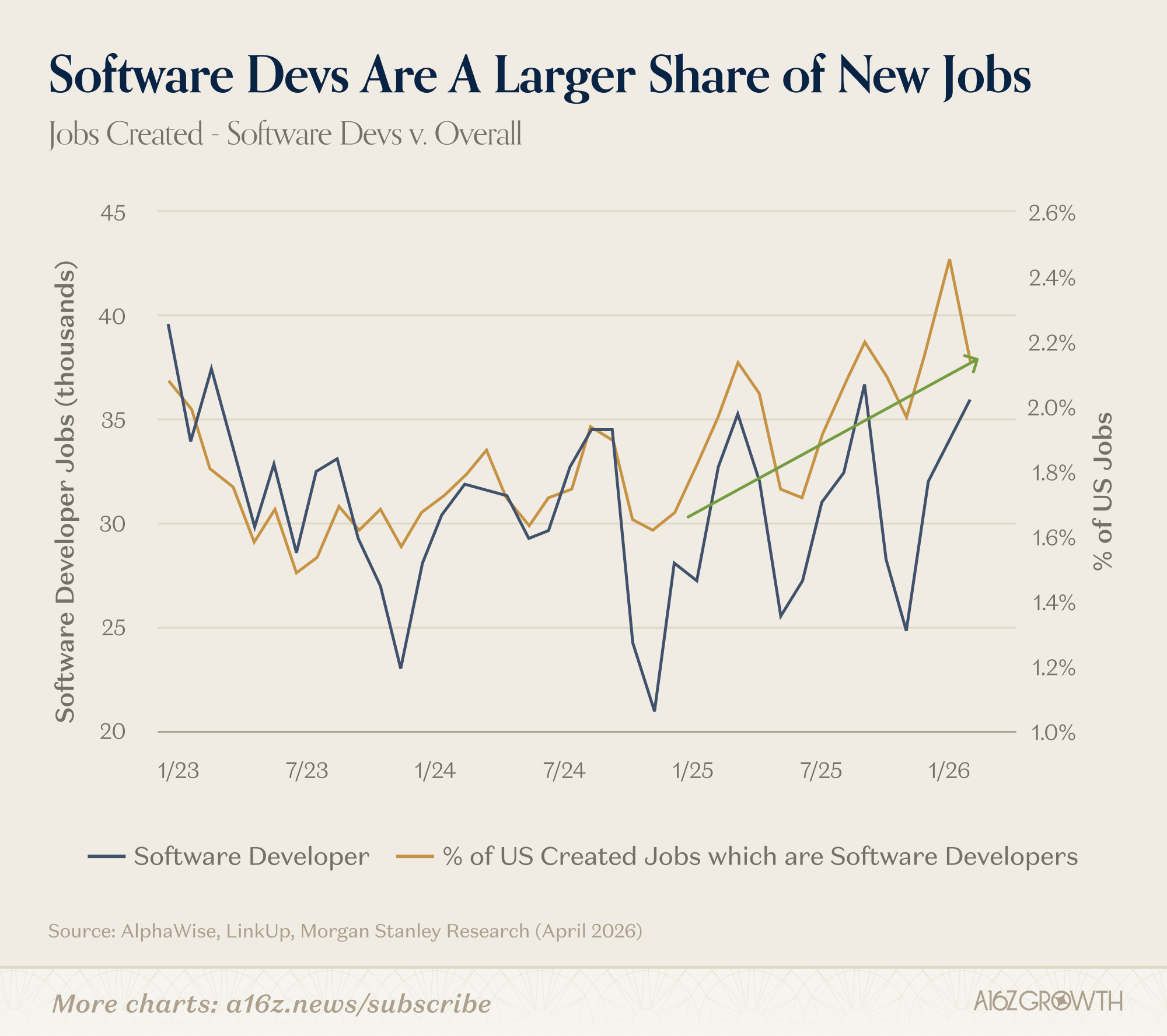

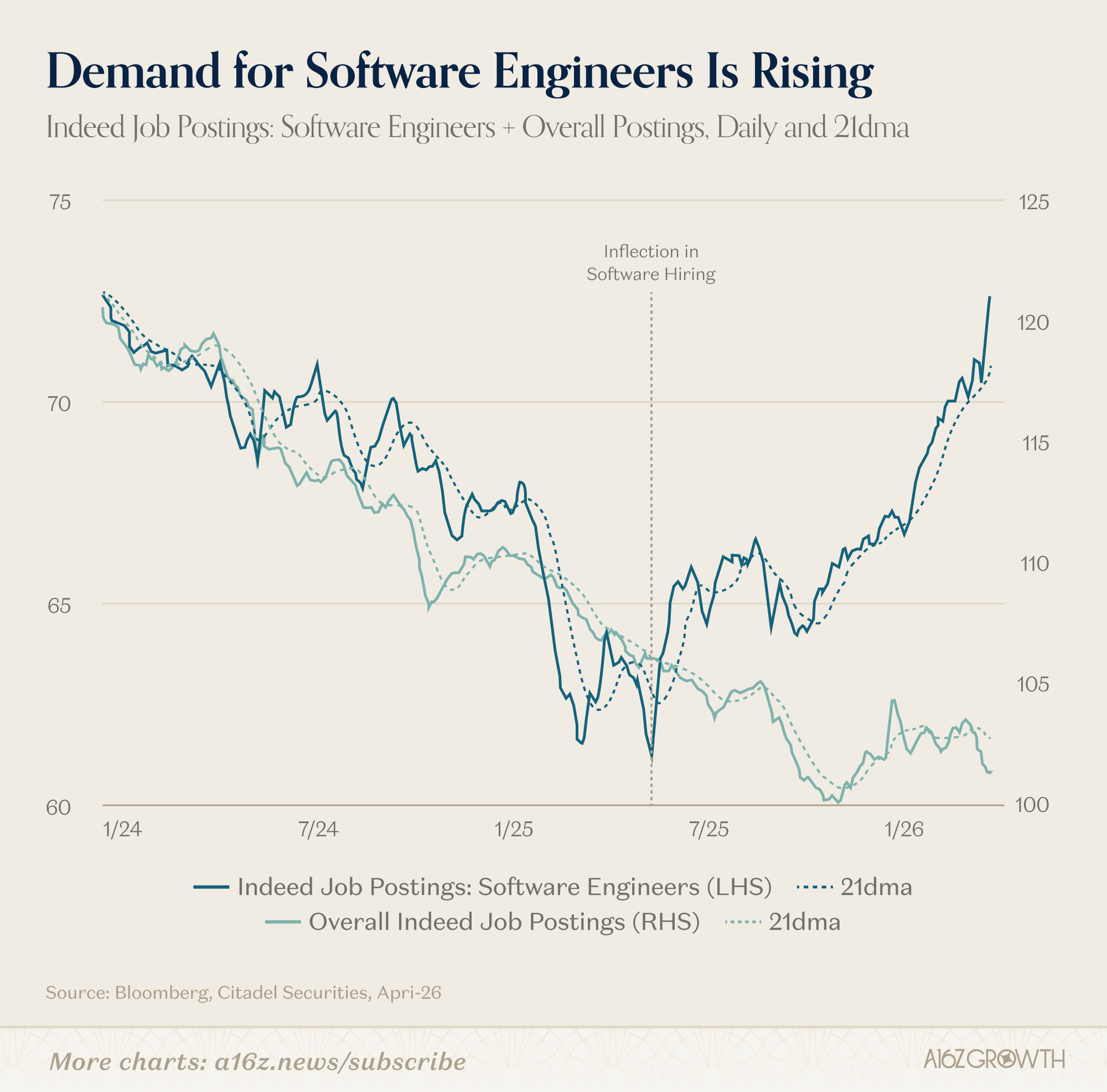

AI is a multiplier for coding. Not only have git pushes surged (along with the creation of new applications and businesses), but the demand for software engineers also appears to be rising:

Since the beginning of 2025, software development jobs (both in absolute numbers and as a percentage of the overall job market) have been steadily increasing.

Is this related to AI? Frankly, it might be too early to say for sure, but AI certainly enhances the productivity of software engineering, not to mention that AI is now a focal point for executives at every company.

Given that everyone is trying to figure out how to integrate AI into their businesses, it's not surprising that companies are hiring aggressively, which inevitably raises the value of some employees rather than lowering it.

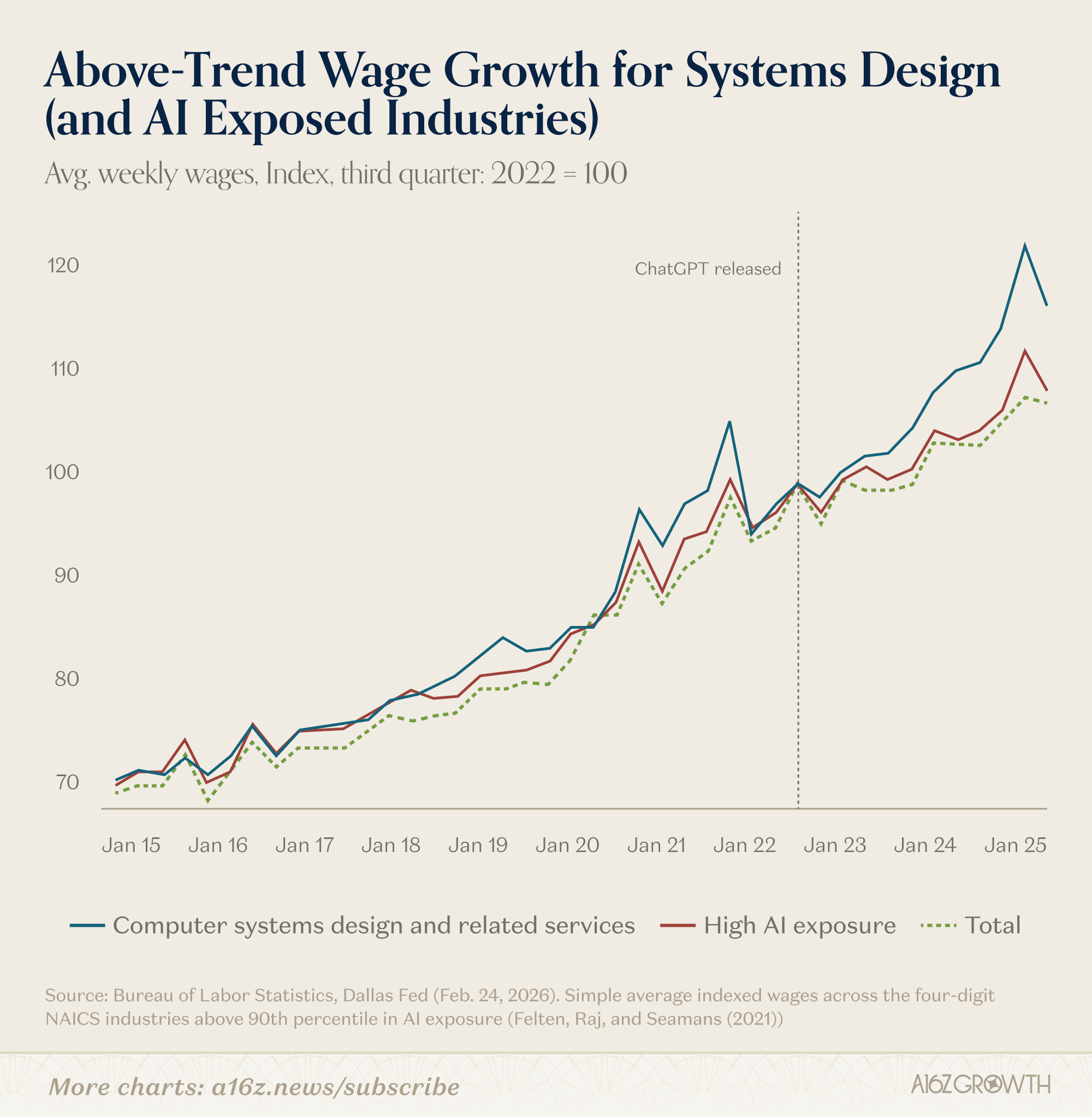

The proliferation of AI seems to be driving above-average wage growth (particularly in systems design).

Currently, these gains might be limited, but it's still early days. As expertise expands, opportunities will also increase. Regardless, this is not the data the "doomsayers" want you to see.

Meanwhile, according to Lenny Rachitsky (founder of Lenny's Newsletter, a publication for the tech community), the number of open product manager positions continues to climb (after a significant drop amid interest rate hikes) and is now higher than at any point since 2022:

The growth in hiring for both software engineers and product managers is strong evidence against the "lump of labor" assumption. If AI simply replaced human thinking, you might expect engineers to need fewer product managers, or product managers to need fewer engineers, but that's not happening. Demand for both keeps rebounding, and the key reason is that people are becoming more productive.

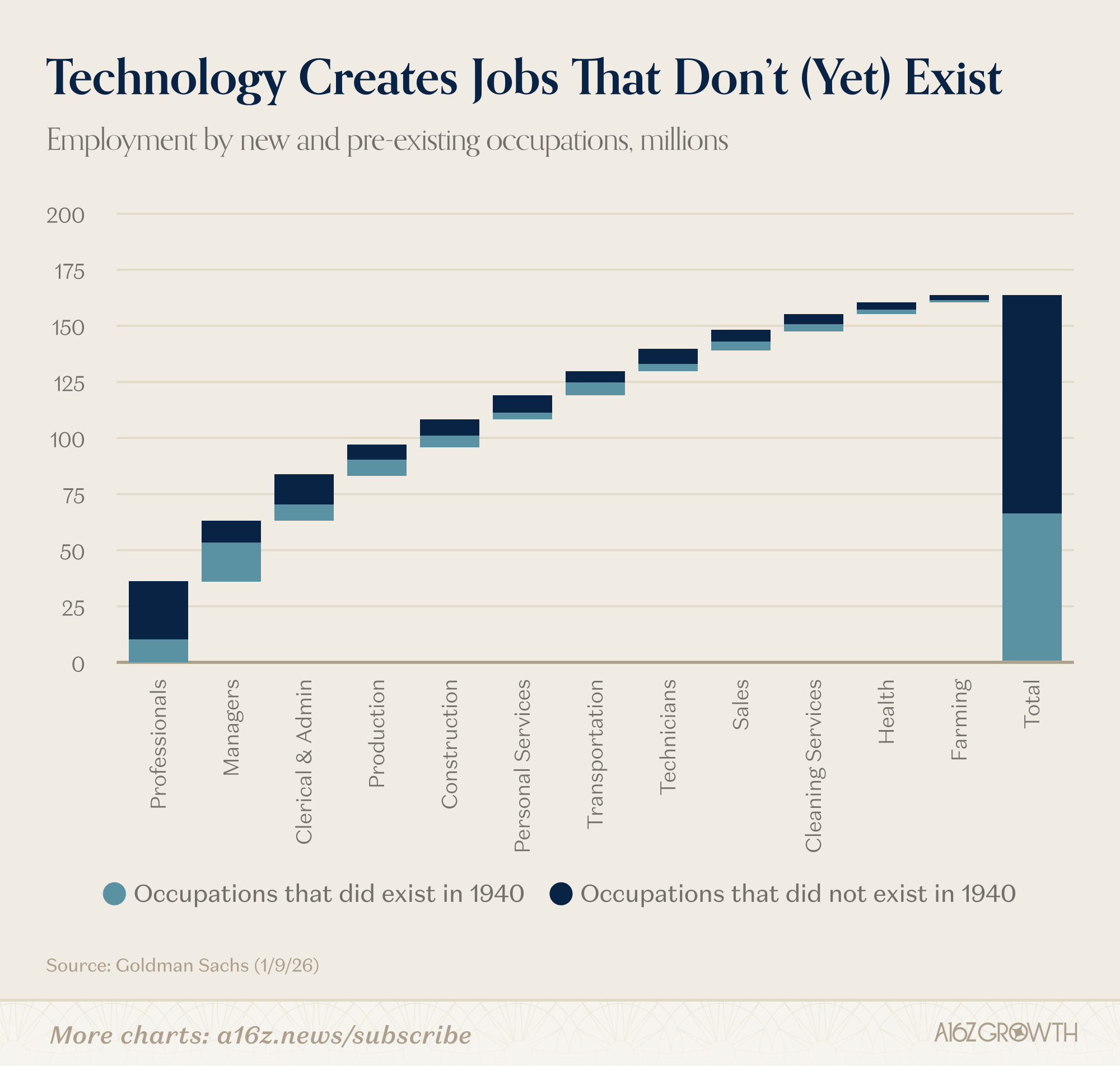

This is why the "doomsayers'" claims are fundamentally a failure of imagination. They focus only on the jobs that will be automated away, ignoring the emerging demand for entirely new job roles we haven't even conceived yet:

Most jobs added since 1940 didn't even exist in 1940. In 2000, it was easy to imagine travel agents losing their jobs, but much harder to envision a mid-market tech services industry built around "cloud migration," because widespread cloud adoption was still more than a decade away.

What Does the Current Situation Look Like?

So far, we've mainly discussed theory and precedent, and both support the optimists:

Every productivity gain has led either to demand growth or to the reallocation of surplus resources to other parts of the economy. That means more jobs, many of them significantly more valuable, including ones we've never heard of. If this time is different, the "doomsayers" need stronger arguments than empty rhetoric.

The idea that "job displacement" is an end-of-civilization scenario makes little sense (in fact, the opposite is closer to the truth). Human nature is restless: we finish one task and look for the next.

But setting aside theory and precedent, what do the actual data say about AI and employment? It's still early days (for better or worse), but the existing data does not support the "doomsayers'" view. For the most part, the data shows no significant change, and emerging data points in the opposite direction: AI is creating more jobs than it is taking away.

First, let's start with some academic research. This is not an exhaustive literature review, just a few examples of recent papers:

- "AI, Productivity, and the Labor Force: Evidence from Business Executives" (NBER Working Paper 34984): "In summary, these results suggest that while AI adoption has not yet led to significant changes in total employment,