The worst memory chip shortage in history propels Samsung to second place globally by net profit

- Core Insight: AI infrastructure demand is pushing memory chips into a "superboom cycle," with bottlenecks spreading from GPUs to memory products such as HBM, DRAM, and NAND flash. This has driven record profits for manufacturers like Samsung, SK Hynix, and Micron, and is prompting a shift in supply chains from "handshake agreements" to long-term, binding contracts with large prepayments.

- Key Elements:

- Samsung's first-quarter net profit exceeded $30 billion, far surpassing its previous single-quarter record; approximately 94% of its operating profit came from semiconductors.

- Memory chip prices rose nearly 100% quarter-over-quarter in Q1 2026, roughly double the expected increase, with DRAM operating margins reaching around 80%.

- Prioritizing HBM production squeezes the supply of traditional DRAM and NAND. Capacity from new fabs is unlikely to be fully available until late 2027 or 2028, widening the supply gap.

- To secure capacity, some customers have accepted contracts of up to five years, prepayments of around 30%, or co-investment in fab construction, replacing past "handshake agreements."

- Rising inference demand is driving the expansion of general-purpose servers, further boosting demand and profitability for traditional memory chips.

Original Title: AI Has Made Memory Chips One of the World's Most Profitable Products

Original Author: Jiyoung Sohn, Andrew Barnett, The Wall Street Journal

Translation & Compilation: Peggy, BlockBeats

Editor's Note: Nvidia was the most prominent winner in the AI infrastructure cycle, but the latest developments in the chip market indicate that AI's bottleneck lies not only in GPUs but also in memory.

Over the past year, global capital expenditure has continuously flowed towards AI, first driving up demand for High Bandwidth Memory (HBM) and subsequently squeezing the supply of traditional DRAM and NAND flash memory. As large model training requires pairing GPUs with HBM, and inference demand drives the expansion of general-purpose servers, memory chips have transformed from a cyclical industry into one of the scarcest and most profitable segments in the AI supply chain.

This explains the collective surge in earnings for Samsung, SK Hynix, and Micron. Memory chip prices rose nearly 100% quarter-over-quarter in the first three months of 2026, far exceeding market expectations and propelling Samsung's net profit to over $30 billion, with its semiconductor business contributing the vast majority of profits. More importantly, this is not merely a short-term price surge but a repricing of the supply-demand structure: new fab construction cycles are lengthy, and HBM consumes more production capacity, further compressing the supply of traditional memory.

In this context, memory chips are evolving from "supporting components" into "strategic resources." Server, PC, and smartphone manufacturers are paying premiums to secure capacity, even accepting five-year contracts, advance payments, and co-investment in new fabs. The supply relationships once maintained by handshake agreements are shifting towards more tightly bound, long-term commitments. In other words, AI competition is no longer just about models, computing power, and cloud platforms; it is also becoming a battle for control over the underlying supply chain.

The most noteworthy point is not how much money memory chip companies made this year, but that the bottleneck in AI infrastructure is spreading from a single computing resource to a broader hardware ecosystem. GPUs determine whether a model can be trained, HBM determines the speed of data exchange, and DRAM and NAND flash influence the cost structure of inference and server expansion. As more companies believe that "whoever controls memory supply controls AI," the period of extraordinary profitability for memory chips is also a signal that AI infrastructure has entered a phase of resource competition.

The following is the original text:

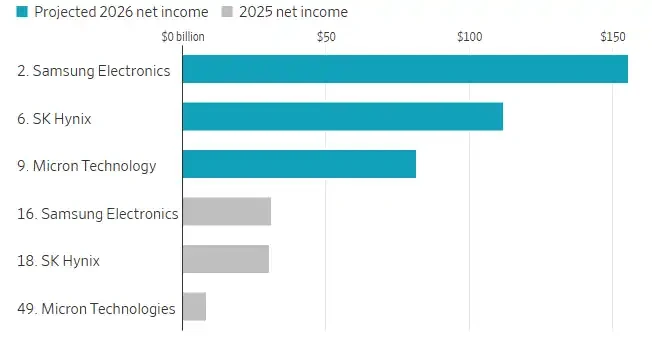

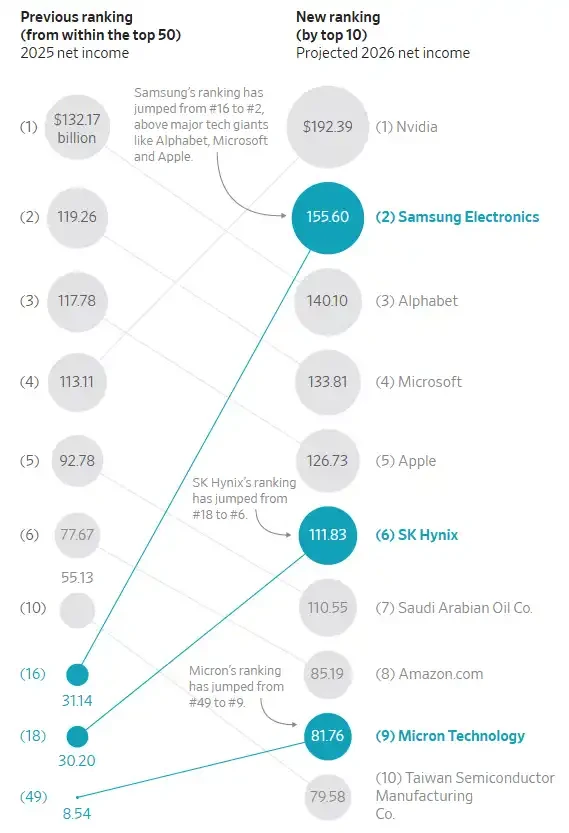

Ranked by Net Profit: Chip Makers in the Global Top 20.

By the end of last year, global investment in artificial intelligence had pushed the memory chip industry into a "superboom cycle." Profits hit record highs, and prices were expected to rise another 50% in the first three months of 2026 compared to the previous quarter.

But things didn't stop there. The reality turned out to be even better, much better.

On Thursday, Samsung Electronics reported a net profit exceeding $30 billion for the first quarter. That figure not only far surpasses its previous single-quarter profit record but also comes close to the company's past annual profit peak. Approximately 94% of Samsung's operating profit in the first quarter came from its semiconductor business.

Samsung's main rivals in the memory chip space – South Korea's SK Hynix and U.S.-based Micron Technology – have also recently reported similarly surprising results. These three companies dominate the global memory market, and memory chips, along with Nvidia's processors, are being used for AI computing.

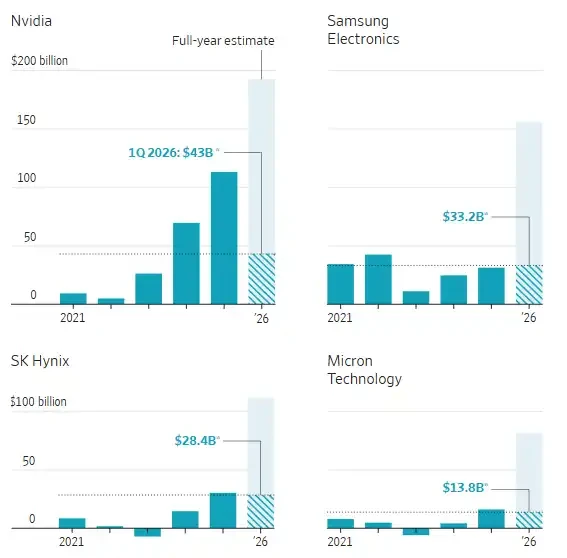

Annual Net Profit of Semiconductor Companies

In the first-quarter data, Samsung and SK Hynix correspond to the fiscal quarter ending March 2026 (actual results); Micron corresponds to the fiscal quarter ending February 2025 (actual results); Nvidia corresponds to the fiscal quarter ending April 2026 (estimated results).

Note: Samsung data includes all its businesses, but the semiconductor business contributed the majority of profits. Exchange rate calculated at 1 USD to 1,421.22 KRW. Source: FactSet / Andrew Barnett, The Wall Street Journal

Despite growing concerns about whether AI services will ultimately generate significant profits, companies involved in building the related infrastructure have already reaped an epic windfall.

And this historic rally shows no signs of ending soon. Jaejune Kim, Executive Vice President of Samsung's memory business, stated during Thursday's earnings call that based on orders already booked, the supply shortage is expected to worsen further next year. He said, "Currently available production capacity is far from sufficient to meet customer demand."

Samsung's stock price has risen 72% since the start of the year. SK Hynix's stock is up 90%, and Micron's is up 65%.

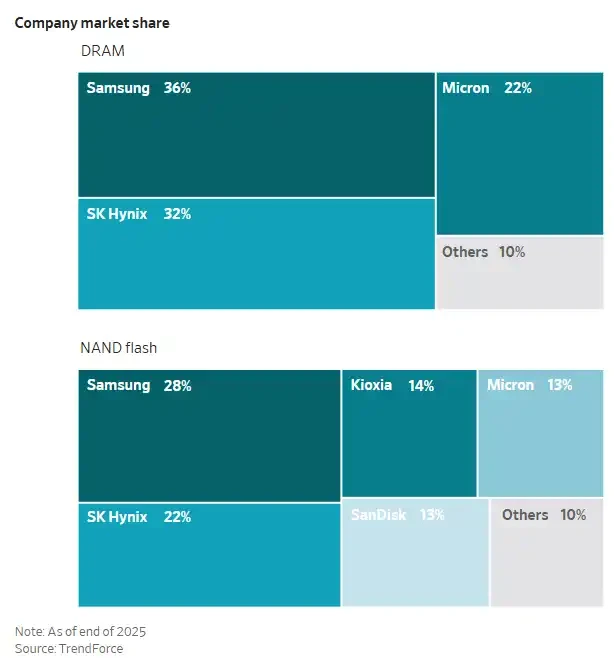

Company Market Share

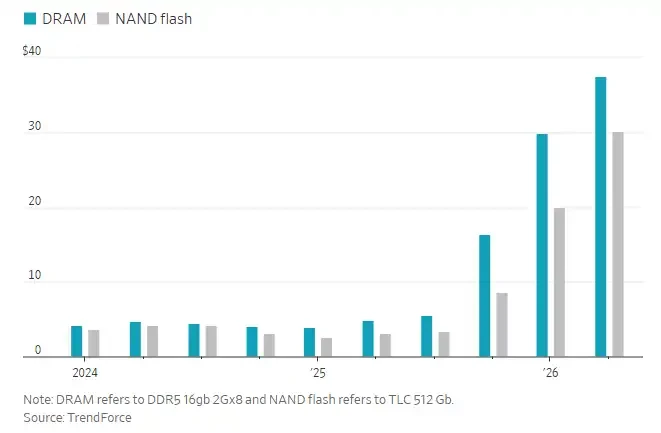

According to data from tech market research firm TrendForce, memory chip prices in the first three months of 2026 rose nearly 100% from the previous quarter, roughly double the initial expected increase.

In recent years, memory chip manufacturers have prioritized production of specialized memory chips required for AI, namely High Bandwidth Memory (HBM). This, in turn, has constrained the supply of traditional memory chips used in smartphones, personal computers, and general-purpose servers. Training large language models typically requires pairing Nvidia's graphics processing units (GPUs) with HBM.

More recently, demand for inference has been rising. Inference refers to the computational process required for a trained AI model to respond to user queries. This has driven demand for general-purpose servers, which use traditional memory chips, thereby boosting the profitability of Samsung, SK Hynix, and Micron to new heights.

According to estimates from FactSet, these three companies are expected to generate a combined net profit of approximately $350 billion in 2026. Each is poised to rank among the world's top ten most profitable listed companies, with Samsung projected to leap to second place, surpassing Alphabet, Microsoft, and Apple. A year ago, none of these memory chip makers were in the top ten.

Ranking of Select Chip Manufacturing Companies by Net Profit

A chip manufacturing plant, or fab, can cost over $20 billion and take years to build. Industry analysts say Samsung, SK Hynix, and Micron are all constructing new fabs, but capacity is unlikely to be fully available until late 2027 or 2028. Meanwhile, many production lines are already allocated to HBM, which consumes more capacity than traditional memory chips.

Memory chips primarily fall into two categories: DRAM, used for temporary storage in servers, PCs, and other electronic devices to support faster data processing; and NAND flash memory, used for long-term data storage, such as storing photos on a phone.

HBM is made by stacking layers of DRAM chips and is then packaged together with processors from companies like Nvidia to accelerate AI computing. Nvidia works closely with Samsung, SK Hynix, and Micron.

MS Hwang, a semiconductor research analyst at Counterpoint, stated that the operating profit margins for both types of memory have roughly doubled from usual levels, with DRAM margins reaching around 80% and NAND flash margins up to 60%.

Memory Chip Contract Prices

Counterpoint's Hwang added that many large companies in the server, PC, and smartphone sectors are paying premiums and purchasing memory chips in large volumes to lock in more supply and limit the capacity available to competitors. He said, "The logic is that whoever controls memory supply can dominate AI."

Marcus Chen, Executive Vice President at global electronic components distributor Fusion Worldwide, commented, "What we are seeing today is the most severe memory shortage the market has ever experienced." Most of Chen's clients are currently receiving only 30% to 50% of the memory chips they need. "Some clients are getting even less," he said.

For a long time, customers and memory chip manufacturers relied primarily on "handshake agreements" to secure long-term supply; now, in some cases, they are shifting to binding formal contracts. Peter Lee, a semiconductor analyst at Citigroup, noted that some contracts have terms of up to five years and require customers to prepay around 30% of the cost or share the investment cost of building new memory chip fabs. Lee said, "We have already seen customers willing to go this far."