Halted on Debut After Soaring Over 108% in a Day: Is Cerebras Really the "Next Nvidia"?

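- Core view: Cerebras (CBRS), a rising AI chipmaker, claims its wafer-scale chip technology is 20 times faster than Nvidia's, and its stock more than doubled on its first day of trading. Its core advantages are its ultra-large chip architecture and its deep ties with OpenAI (including a $20 billion order and a potential equity stake). It cannot replace Nvidia in the short term, however, and is better seen as a high-end niche player.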

- Key points:

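- Debut: Cerebras began trading last night. From its $185 IPO price, the stock climbed as high as $385, a gain of over 108%, before pulling back to $311, still up more than 68%.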

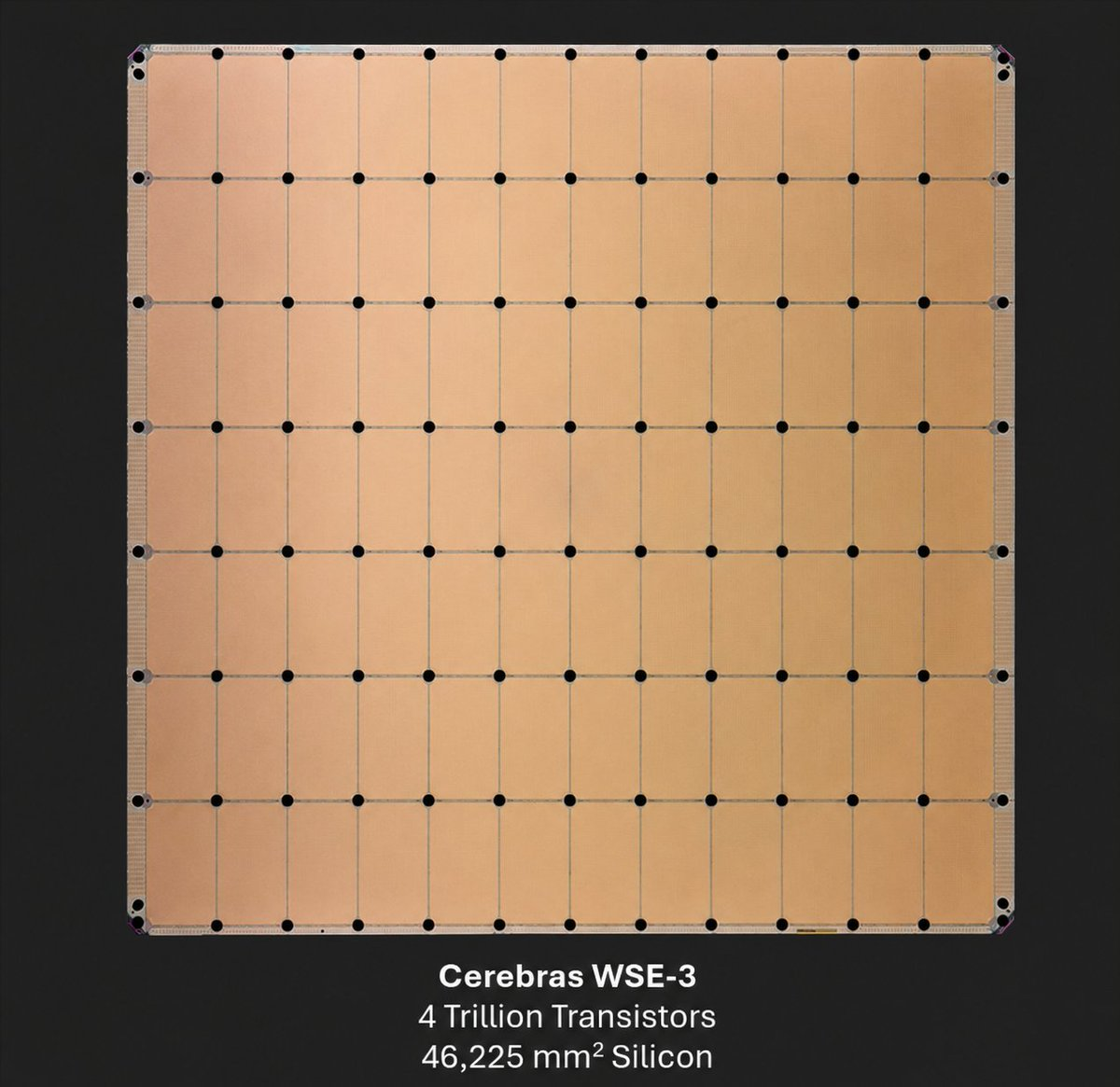

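- Technology: Its flagship WSE-3 chip measures 46,225 mm² (about the size of a dinner plate), contains 4 trillion transistors and 900,000 AI cores, and delivers 125 petaflops of compute. Its single-wafer design avoids the interconnect bottlenecks of multi-GPU systems.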

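- Business results: 2025 revenue was $510 million (up 76% year over year), and the company is profitable. It has signed a compute framework agreement with OpenAI worth more than $20 billion; OpenAI plans to buy its servers over three years and will receive equity as part of the deal.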

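- Relationship with OpenAI: OpenAI co-founders Sam Altman and Greg Brockman were early angel investors in Cerebras. In December 2025, OpenAI extended a $1 billion working capital loan. The prospectus shows OpenAI may receive warrants for roughly 33.44 million shares at a very low exercise price; if exercised, they would give it a 10%-11% stake.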

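- Competitive limits: Cerebras cannot replace Nvidia in the short term for four main reasons: the huge gap between its software ecosystem and CUDA (developers find it hard to switch); far weaker economies of scale (Nvidia's 2025 revenue was well over $100 billion, versus Cerebras's $510 million); high manufacturing costs (wafer-scale chips face serious yield challenges and carry high unit prices); and direct competition from Groq, AMD, Google TPU, and others.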

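- Outlook: Buoyed by the AI boom and the compute shortage, the stock may still have room to rise in the short term. Over the next two to three years, the key variables will be how well orders convert into revenue and how inference demand evolves; if either disappoints, the stock will come under pressure.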

Original|Odaily Planet Daily (@OdailyChina)

Author|Wenser (@wenser2010)

Last night, Cerebras (CBRS), touted as the "next Nvidia," officially began trading. Priced at $185 in its IPO, the stock jumped to $350 shortly after the open and reached an intraday high of $385, a gain of over 108%. Although it has since pulled back to around $311, it remains up more than 68%. Earlier, Cerebras CEO Andrew Feldman told CNBC, "Our chip is the size of a dinner plate and is 20 times faster than Nvidia's chips."
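The percentage moves quoted above follow from simple arithmetic against the $185 IPO price. A minimal Python sketch (the helper name `pct_change` is our own, chosen for illustration):

```python
def pct_change(start: float, end: float) -> float:
    """Return the percentage change from start to end."""
    return (end - start) / start * 100.0


IPO_PRICE = 185.0  # IPO price per share, as reported in the article

# Price points reported for the first trading session.
price_points = {
    "shortly after the open": 350.0,
    "intraday high": 385.0,
    "after pulling back": 311.0,
}

for label, price in price_points.items():
    print(f"{label}: ${price:.0f} ({pct_change(IPO_PRICE, price):+.1f}%)")
```

This prints gains of +89.2%, +108.1%, and +68.1%, matching the "over 108%" and "over 68%" figures in the report.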

What gives this chipmaker, which has raised $5.5 billion, the confidence to claim it is "faster than Nvidia"? How did it win a $20 billion order from OpenAI amid fierce competition? And will its stock keep rallying in the short term? Odaily Planet Daily examines these questions below.

Why Cerebras Dares to Take On Nvidia: Wafer-Scale Chips for a New Era of AI

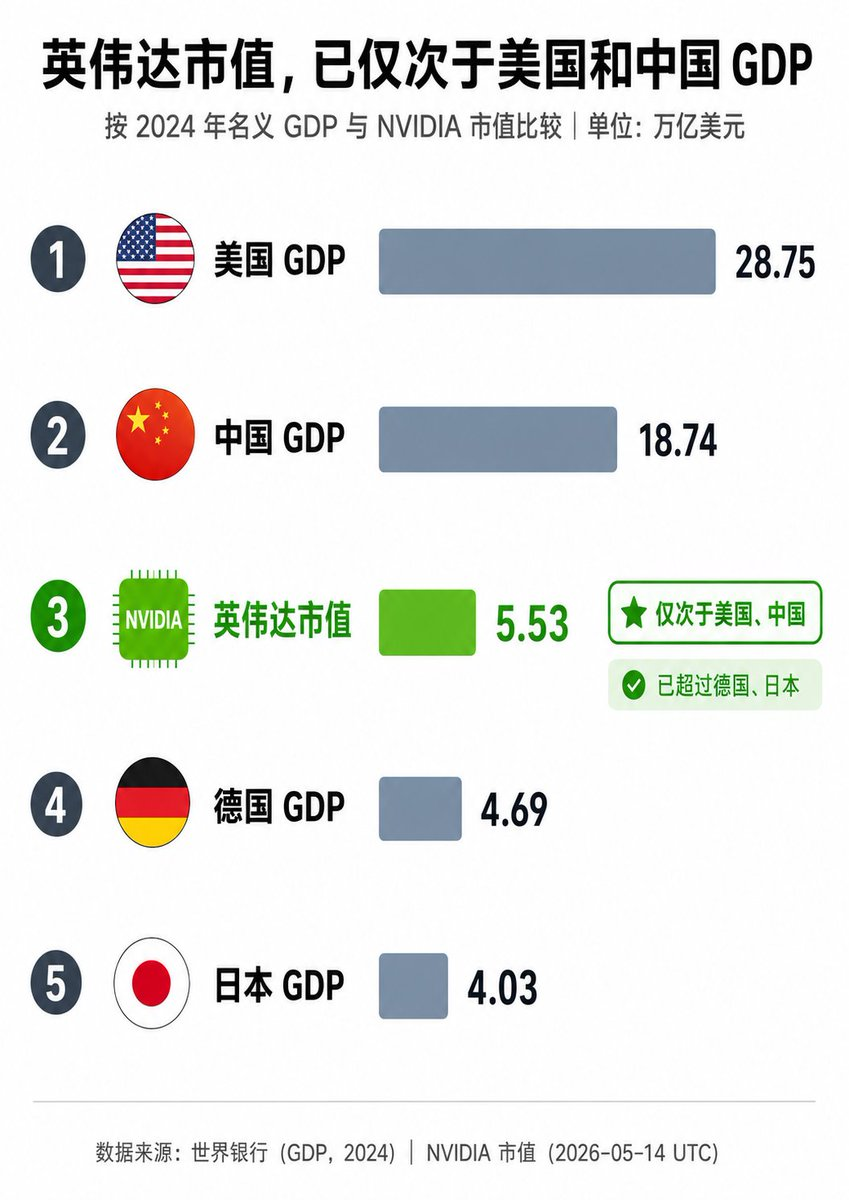

As the shortfall in AI computing power keeps widening, strong demand has propelled Nvidia to become the world's most valuable listed company.

Nvidia's stock recently hit new highs, briefly pushing its market cap past $5.5 trillion. That figure exceeds the annual GDP of every country except the United States and China, far surpassing major economies such as Germany and Japan. Nvidia has become, in effect, "too big to fail."

But unlike Nvidia, a decades-old "established giant," Cerebras (CBRS) is a relative newcomer to the chip industry.

Founded in 2016 by industry veterans Andrew Feldman, Gary Lauterbach, Sean Lie, Michael James, JP Fricker, and others, Cerebras Systems is headquartered in Sunnyvale, California. Whereas Nvidia builds general-purpose GPUs to address the broadest possible market, Cerebras's core innovation is the Wafer Scale Engine (WSE), currently the world's largest AI chip.

Cerebras founding team of five (2022)

Its core products include:

- WSE-3: With an area of approximately 46,225 mm² (about the size of a dinner plate), it contains 4 trillion transistors and 900,000 AI-optimized cores and delivers 125 petaflops of computing power. Unlike traditional GPUs, which are cut from a wafer as many separate chips, it uses the entire wafer as a single giant processor, avoiding the bottlenecks of linking multiple GPUs together. Its on-chip SRAM reaches 44GB, with extremely high memory bandwidth.

- CS-3 System: An AI supercomputer based on the WSE-3 that supports both training and inference. Cerebras now sells more than chips: it also offers cloud services (Cerebras Inference), dedicated data centers, and technical support for on-premises deployment.
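To put the WSE-3 headline figures in perspective, they can be divided down to per-core and per-area values. This is back-of-the-envelope arithmetic on the numbers quoted above, assuming that "44GB" means decimal gigabytes and that the 125-petaflop figure is a peak rating (the article does not state the numeric precision).

```python
# Headline WSE-3 figures as quoted in the article.
AREA_MM2 = 46_225        # die area in square millimetres
TRANSISTORS = 4e12       # 4 trillion transistors
CORES = 900_000          # AI-optimized cores
PEAK_FLOPS = 125e15      # 125 petaflops (assumed peak; precision not stated)
SRAM_BYTES = 44e9        # 44 GB on-chip SRAM (assumed decimal gigabytes)


def derived_figures() -> dict[str, float]:
    """Divide the headline totals down to per-core and per-area values."""
    return {
        "gflops_per_core": PEAK_FLOPS / CORES / 1e9,
        "kb_sram_per_core": SRAM_BYTES / CORES / 1e3,
        "million_transistors_per_core": TRANSISTORS / CORES / 1e6,
        "million_transistors_per_mm2": TRANSISTORS / AREA_MM2 / 1e6,
    }


for name, value in derived_figures().items():
    print(f"{name}: {value:.1f}")
```

On these assumptions, each core gets roughly 139 GFLOPS of peak compute and about 49 KB of SRAM; the enormous aggregate numbers come from tiling hundreds of thousands of small cores across one piece of silicon.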

In terms of business model, Cerebras primarily provides ultra-low latency inference for clients like OpenAI, Meta, Perplexity, Mistral, GSK, and Mayo Clinic. In 2025, Cerebras generated $510 million in annual revenue (up 76% year-over-year), achieved profitability, and is backed by a substantial order book (including a multi-year, hundreds-of-megawatt compute contract with OpenAI).
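The 76% growth figure also implies Cerebras's 2024 revenue. Backing it out takes one line of arithmetic; this sketch assumes growth was measured on the same revenue basis in both years, and the result is an inference rather than a reported figure.

```python
REVENUE_2025 = 510e6   # USD, as reported
YOY_GROWTH = 0.76      # 76% year-over-year growth, as reported

# If R_2025 = R_2024 * (1 + g), then R_2024 = R_2025 / (1 + g).
implied_revenue_2024 = REVENUE_2025 / (1 + YOY_GROWTH)
print(f"Implied 2024 revenue: ${implied_revenue_2024 / 1e6:.0f} million")
```

Because both inputs are rounded, the result (roughly $290 million) is only approximate.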

Diagram of the Cerebras WSE-3 chip

On May 14th, the day of the IPO, Cerebras CEO Andrew Feldman appeared on CNBC's "Squawk Box" and spoke optimistically about the company's operations, its technology moat, and where the market is headed:

- First, Feldman stated that the IPO is "the right way to fund our growth," emphasizing that the company is mature and the public market can support significant growth opportunities. He called it the culmination of a decade of work, expressed great pride, and noted the market "understood our story and responded positively."

- Second, he repeatedly stressed that Cerebras is the only company in 70 years to successfully build a "giant chip," after every other attempt failed, so "the technical moat is wide and deep." In this context he said Cerebras's chip is 58 times larger than those of competitors such as Nvidia and runs 15-20 times faster, significantly accelerating AI inference and training.

- Finally, addressing concerns about whether AI spending is sustainable, Feldman said demand is "huge and growing" and that Cerebras's chips are transforming the AI experience (faster responses, real-time agents, and so on). He highlighted key partnerships with OpenAI, AWS, and others and said he is optimistic about the overall AI hardware landscape.
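Taken at face value, Feldman's "58 times larger" claim implies the size of the comparison GPU die. The sketch below divides the WSE-3 area by 58 and compares the result with the roughly 26 mm × 33 mm (858 mm²) maximum field a standard lithography scanner exposes in one shot, which caps how large a conventional single die can be. Feldman did not name the exact comparison chip, so the implied die size is only an inference.

```python
WSE3_AREA_MM2 = 46_225    # WSE-3 die area quoted in the article
SIZE_RATIO = 58           # "58 times larger", per Feldman on CNBC
RETICLE_MM2 = 26 * 33     # typical maximum exposure field of a lithography scanner

implied_gpu_die_mm2 = WSE3_AREA_MM2 / SIZE_RATIO
print(f"Implied comparison die: {implied_gpu_die_mm2:.0f} mm^2")
print(f"Share of reticle limit: {implied_gpu_die_mm2 / RETICLE_MM2:.0%}")
```

The implied die (about 797 mm²) is already near the reticle limit, which is why a conventional GPU cannot simply be made much bigger, and why Cerebras instead keeps the whole wafer as one chip.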

As an aside, echoing Musk and Anthropic's bets on "space data centers" (recommended reading: "Musk and Anthropic Go to Space to Find Electricity"), Feldman boldly predicted, "Data centers in space could very well become a reality within 15 years," showcasing his unwavering confidence in the long-term buildout and rapid expansion of AI infrastructure.

Thus, as a "speed geek" in the AI chip field, Cerebras has successfully broken through by focusing on the extreme performance of ultra-large models, emerging as a strong challenger to Nvidia in areas like large model inference and large-scale training applications.

In this regard, the $20 billion order from OpenAI provides ample confidence for its development, and the cooperation between the two goes far beyond the relationship of "chip manufacturer" and "chip buyer."

The Complex Relationship Between Cerebras and OpenAI: Customer, Creditor, and Potential Major Shareholder

The connection between Cerebras and OpenAI goes back a long way. Beyond their corporate partnership, OpenAI co-founders Sam Altman and Greg Brockman, among others, were early angel investors in Cerebras and hold small stakes. This may be a significant reason for the deep, multifaceted ties between the two companies.

In December 2025, OpenAI provided Cerebras with a $1 billion working capital loan, making OpenAI a creditor of the company.

In January of this year, the "750MW Inference Compute Procurement Agreement" between Cerebras and OpenAI was officially unveiled, with an option to expand the collaboration to 2GW. This was confirmed again in April. According to media reports, OpenAI plans to spend over $20 billion in the next three years to purchase servers powered by Cerebras chips and will receive equity in the company as part of the deal. This instantly made OpenAI Cerebras's largest client, bar none.
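To gauge how large the OpenAI commitment is relative to the business, a simple sketch spreads the reported $20 billion evenly over three years and compares it with Cerebras's 2025 revenue. The even spread is a hypothetical simplification; the article does not disclose the actual delivery or payment schedule.

```python
CONTRACT_USD = 20e9        # "over $20 billion", per media reports
CONTRACT_YEARS = 3         # spent over the next three years
REVENUE_2025_USD = 510e6   # Cerebras's reported 2025 revenue

# Hypothetical even spread; real deliveries and payments may be lumpy.
annual_contract_usd = CONTRACT_USD / CONTRACT_YEARS
multiple_of_2025_revenue = annual_contract_usd / REVENUE_2025_USD

print(f"Average per year: ${annual_contract_usd / 1e9:.2f} billion")
print(f"Multiple of 2025 revenue: {multiple_of_2025_revenue:.1f}x")
```

Even on this rough basis, one customer's contract would average about $6.67 billion a year, roughly 13 times Cerebras's entire 2025 revenue, which shows both the upside and the concentration risk that comes with it.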

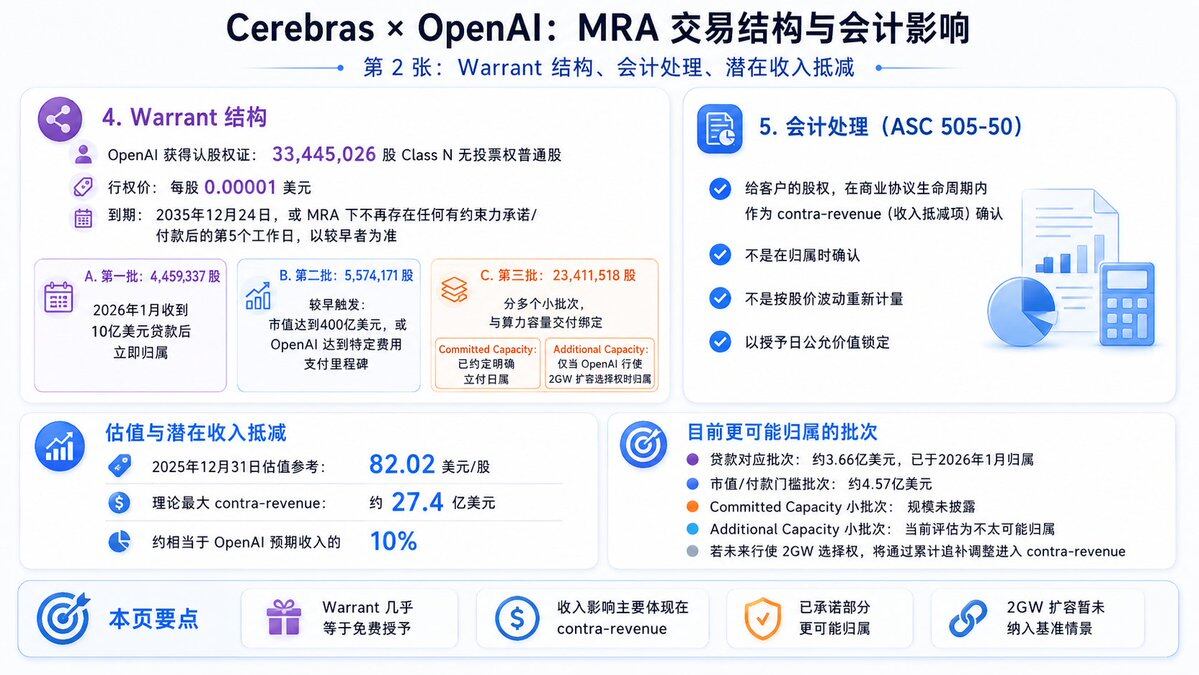

Image source: @Xingpt

Cerebras's subsequent S-1 filing and IPO documents show that OpenAI is expected to receive warrants for approximately 33.44 million Cerebras shares at an exercise price of just $0.00001 per share. Some of these warrants carry vesting conditions tied to compute delivery dates and to milestones such as Cerebras's market cap exceeding $40 billion.

If all conditions are met and the warrants are exercised in full, OpenAI could hold roughly 10%-11% of the company (the exact percentage depends on the total shares outstanding after the IPO). At the roughly $56 billion valuation implied by the IPO price, that stake would be worth about $5.6-6.2 billion; at the current market cap (approaching $95 billion after the first day of trading), it would be worth more than $10.3 billion. Although the warrants have not yet been exercised, OpenAI can fairly be called a potential major shareholder of Cerebras.
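The stake and value figures can be approximately reproduced from the share count. This is a minimal sketch under two assumptions not stated in the filing excerpts: that the roughly $56 billion valuation divided by the $185 IPO price approximates shares outstanding, and that each warrant converts into one share. The 10%-11% range then falls out of whether the warrant shares are counted in the base or added to it on exercise.

```python
IPO_PRICE = 185.0          # USD per share
IPO_VALUATION = 56e9       # "roughly $56 billion" at IPO pricing
CURRENT_PRICE = 311.0      # USD per share after the first day's pullback
WARRANT_SHARES = 33.44e6   # shares covered by OpenAI's warrants
EXERCISE_PRICE = 0.00001   # USD per share, per the filing

# Assumption: valuation / IPO price approximates shares outstanding.
implied_shares = IPO_VALUATION / IPO_PRICE

# Lower bound: warrant shares are added on top of the base when exercised.
# Upper bound: warrant shares are already included in the base.
stake_low = WARRANT_SHARES / (implied_shares + WARRANT_SHARES)
stake_high = WARRANT_SHARES / implied_shares

# The exercise cost is negligible, so value is essentially shares * price.
exercise_cost = WARRANT_SHARES * EXERCISE_PRICE
value_at_ipo = WARRANT_SHARES * IPO_PRICE - exercise_cost
value_now = WARRANT_SHARES * CURRENT_PRICE - exercise_cost

print(f"Implied shares outstanding: {implied_shares / 1e6:.1f} million")
print(f"Stake range: {stake_low:.1%} to {stake_high:.1%}")
print(f"Value at IPO price: ${value_at_ipo / 1e9:.2f} billion")
print(f"Value at ${CURRENT_PRICE:.0f}: ${value_now / 1e9:.2f} billion")
print(f"Total exercise cost: ${exercise_cost:,.2f}")
```

On these assumptions, the stake is about 9.9% to 11.0%, worth roughly $6.19 billion at the IPO price and $10.40 billion at $311, while exercising all the warrants would cost only a few hundred dollars.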

Whether Cerebras Can Become the Next Nvidia Remains Unknown, But the Stock Price May Continue to Rise in the Short Term

Now back to the question in the title: can Cerebras become the next Nvidia?

From an industry landscape perspective, the answer is likely no. There are four main reasons:

- Firstly, the massive ecosystem gap: As the dominant force in AI chips, Nvidia has made its CUDA software stack the undisputed industry standard, with countless developers, frameworks, and toolchains built on it. Cerebras has its own software stack, but it is far from matching CUDA's maturity and compatibility, so the switching cost is prohibitively high for many developers and enterprises.

- Secondly, the difference in scale and diversification: Nvidia's revenue in 2025 was well over $100 billion, and its GPUs span training, inference, graphics, automotive, and data centers. At CES 2026, Jensen Huang boldly stated that "the market size for AI chips and infrastructure could reach $1 trillion by 2027," with Nvidia poised to take the largest slice. By contrast, Cerebras's 2025 revenue was only $510 million, and its customer base is relatively concentrated in a single giant, OpenAI, leaving it less resilient to risk.

- Thirdly, differences in manufacturing and cost control: An ultra-large AI chip brings not only higher speed but also greater manufacturing difficulty and cost. Each Cerebras wafer-scale chip consumes an entire TSMC wafer, so every defect on that wafer lands on the one chip being built, creating serious yield challenges and high unit costs (a single CS-3 system costs far more than a single GPU). Nvidia, meanwhile, can cut dozens of GPUs from a single wafer and discard the defective ones, benefiting from greater economies of scale and better returns.

- Fourthly, a different competitive position: Whereas Nvidia dominates its market, Cerebras faces direct competition from several players, including Groq, AMD, Google TPU, and AWS Trainium. Although its momentum is currently strong, it is constrained by time, capital, and resources, which positions it more as a "high-end niche player" than a "market dominator."
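The yield argument in the third point can be illustrated with the textbook Poisson yield model, in which the probability that a die has zero defects is Y = exp(-A * D0) for die area A and defect density D0. The sketch below uses a hypothetical D0 of 0.1 defects per cm² and a hypothetical 800 mm² GPU die (neither figure comes from the article), plus a common gross-dies-per-wafer approximation for a 300 mm wafer. Real wafer-scale designs tolerate defects with spare cores, so the near-zero figure for a flawless wafer shows why such redundancy is unavoidable; it is not Cerebras's actual yield.

```python
import math

WAFER_DIAMETER_MM = 300.0   # standard 300 mm wafer
D0_PER_CM2 = 0.1            # hypothetical defect density, for illustration only
GPU_DIE_MM2 = 800.0         # hypothetical large GPU die
WSE3_AREA_MM2 = 46_225.0    # WSE-3 area quoted in the article


def dies_per_wafer(die_area_mm2: float, diameter_mm: float = WAFER_DIAMETER_MM) -> int:
    """Gross dies per wafer: wafer area / die area, minus an edge-loss correction."""
    radius = diameter_mm / 2
    gross = (math.pi * radius**2 / die_area_mm2
             - math.pi * diameter_mm / math.sqrt(2 * die_area_mm2))
    return max(0, int(gross))


def poisson_yield(die_area_mm2: float, d0_per_cm2: float = D0_PER_CM2) -> float:
    """Probability that a die has zero defects under a Poisson defect model."""
    area_cm2 = die_area_mm2 / 100.0  # 100 mm^2 per cm^2
    return math.exp(-area_cm2 * d0_per_cm2)


gpu_dies = dies_per_wafer(GPU_DIE_MM2)
gpu_yield = poisson_yield(GPU_DIE_MM2)
print(f"GPU: {gpu_dies} dies/wafer, {gpu_yield:.1%} defect-free, "
      f"~{gpu_dies * gpu_yield:.0f} good dies per wafer")
print(f"Wafer-scale chip: {poisson_yield(WSE3_AREA_MM2):.1e} chance of zero defects")
```

Under these assumed inputs, a wafer of large GPUs still produces roughly 29 defect-free dies because bad dies are simply discarded, while the chance of a completely flawless wafer-scale chip is on the order of 10^-20.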

Based on the above, Cerebras is unlikely to grow into an industry giant like Nvidia in the short term, let alone upend the current competitive landscape. On a per-share basis, however, its stock price has already surpassed Nvidia's. And with the AI boom continuing, the computing power gap still growing, and OpenAI and Anthropic not yet public this year, Cerebras's stock price and market cap may still have some room to rise.

In the next 2-3 years, if it can successfully convert orders from OpenAI, AWS, etc., into actual revenue, Cerebras's stock price could potentially explore higher levels. Conversely, if order performance falls short of market expectations or if demand for AI model inference changes, its stock price could face significant downward pressure.

In summary, within 1-3 years, Cerebras is unlikely to replace Nvidia, but it can carve out a significant share in the AI infrastructure niche market, potentially becoming the "King of AI Chip Speed." As for the longer-term competitive landscape, it will require more time to unfold.
