OpenAI goes left, DeepSeek goes right

- Core Insight: The China-US AI competition has shifted from a race over model capability to a divergence in business models. Through open-sourcing, near-free pricing, and heavy asset investment (self-built data centers), DeepSeek is turning AI into inclusive "water, electricity, and gas" infrastructure, challenging the "furnished luxury apartment" model of OpenAI, which relies on premium pricing (e.g., the GPT-5.5 standard edition charges $30 per million output tokens).

- Key Elements:

- DeepSeek V4 (Pro with 1.6 trillion parameters / Flash with 284 billion parameters) makes a million-token context window a free standard feature, positioning the model as a "super brain"; GPT-5.5, by contrast, is expensive, with the standard edition costing $30 per million output tokens and the Pro version costing up to $180.

- To counter the US chip embargo (H100/H800/H20 supply cuts), DeepSeek has transformed from a light-asset algorithm company into a "heavy asset" player, building its own data center in Ulanqab, Inner Mongolia, on domestic Ascend chips. It leverages cheap local green electricity (about 50% cheaper than in eastern regions) and natural cooling (an annual average temperature of 4.3°C) to reduce energy consumption by 20-30%.

- DeepSeek has launched its first external financing round at a target valuation of up to 300 billion RMB (approximately $44 billion), in large part to counter talent poaching by big tech companies such as Tencent, Xiaomi, and ByteDance (at least 5 core R&D members have left). This marks its shift from "pure technological idealism" to accepting external shareholders and commercialization pressure.

- China counters computing power restrictions through on-device AI (e.g., embedding distilled models in smartphones), compressing large models to 1.2-2.5 GB for local operation. This enables offline usability without subscription fees, serving 1.4 billion mobile phone users.

- Chinese open-source models (e.g., DeepSeek, Qwen) promote digital equality in the "Global South": In regions like Africa and Southeast Asia, localized systems fine-tuned from these models (e.g., Uganda's Sunflower, which supports 31 languages) are replacing expensive offerings from Silicon Valley giants. OpenRouter data shows that in one statistical week, Chinese models accounted for approximately 61% of the tokens consumed by the platform's top 10 models.

Original Author: Sleepy.md

On April 24, 2026, the preview version of DeepSeek V4 was officially released.

This domestic large model, with a 1.6-trillion-parameter Pro version and a 284-billion-parameter Flash version, led with its core selling point: a million-token context window, now a free standard feature across all official services. Almost simultaneously, across the Pacific, OpenAI unveiled GPT-5.5, which boasts greater computing power and richer agent functionality but comes with a much heftier price tag.

In plain language, a "million-token context" means AI is no longer a "goldfish" that can only remember your last few sentences. Instead, it has become a "superbrain" capable of devouring all three volumes of "The Three-Body Problem" in one go, instantly understanding a two-hour movie, and even picking out typos for you along the way.

To give a direct example: you could throw all of your company's contracts, emails, and financial reports from the past three years at V4 and ask it to find the breach-of-contract clause hidden in an appendix on page 47. In the past, this would have required a team of lawyers; now, it's free.

GPT-5.5 puts a clear price tag on this superbrain: $5 per million input tokens and $30 per million output tokens for the standard version. The GPT-5.5 Pro version, designed for high-level tasks, sells at a staggering price of $30 per million input tokens and $180 per million output tokens.

However, according to DeepSeek's official pricing, V4-Flash charges only 0.2 RMB per million input tokens (cache hit) and 2 RMB per million output tokens. Even the top-tier V4-Pro, comparable to leading closed-source models, charges 1 RMB per million tokens for cache-hit input, 12 RMB for cache-miss input, and just 24 RMB for output.
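
To make the gap concrete, here is a rough cost sketch for a single long-context request, using only the list prices quoted above. The workload (one million input tokens, ten thousand output tokens) is hypothetical, and the exchange rate is an assumption taken from this article's own conversion of 300 billion RMB to about $44 billion, not a live market rate. The V4-Flash line uses the cache-hit input price because that is the only Flash input price given here.

```python
# Per-million-token list prices as quoted in the article.
GPT55_STANDARD_USD = {"input": 5.0, "output": 30.0}
V4_PRO_RMB = {"input": 12.0, "output": 24.0}    # cache-miss input price
V4_FLASH_RMB = {"input": 0.2, "output": 2.0}    # cache-hit input price

# Assumption: rate implied by the article's own figure
# (300 billion RMB is roughly $44 billion), not a market quote.
RMB_PER_USD = 300 / 44


def rmb_to_usd(amount_rmb):
    """Convert an RMB amount to USD at the assumed rate."""
    return amount_rmb / RMB_PER_USD


def request_cost(input_price, output_price, input_tokens, output_tokens):
    """Cost of one request, given prices per million tokens."""
    return (input_tokens * input_price + output_tokens * output_price) / 1_000_000


# Hypothetical workload: a full million-token context and a short answer.
INPUT_TOKENS = 1_000_000
OUTPUT_TOKENS = 10_000

gpt_usd = request_cost(GPT55_STANDARD_USD["input"], GPT55_STANDARD_USD["output"],
                       INPUT_TOKENS, OUTPUT_TOKENS)
pro_usd = rmb_to_usd(request_cost(V4_PRO_RMB["input"], V4_PRO_RMB["output"],
                                  INPUT_TOKENS, OUTPUT_TOKENS))
flash_usd = rmb_to_usd(request_cost(V4_FLASH_RMB["input"], V4_FLASH_RMB["output"],
                                    INPUT_TOKENS, OUTPUT_TOKENS))

print(f"GPT-5.5 standard: ${gpt_usd:.2f}")
print(f"V4-Pro:           ${pro_usd:.2f}")
print(f"V4-Flash:         ${flash_usd:.2f}")
```

Under these assumptions, the same request costs a few dollars on GPT-5.5 standard, under two dollars on V4-Pro, and a few cents on V4-Flash. Real bills depend on cache-hit rates and on any pricing tiers not covered in this article.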

Many people think the US-China AI competition is a race of model capabilities. In reality, it has long since become a divergence in business models.

OpenAI, once the young dragon slayer who shouted about "benefiting all humanity," is now selling expensive, luxurious condos. Meanwhile, DeepSeek is using near-free computing power to turn AI into water, electricity, and gas.

When OpenAI becomes a shrewd contractor, why is DeepSeek so determined to turn premium AI into free, running tap water at seemingly any cost? What undercurrents are hidden behind this shift in pricing power?

The Cold Wind of Ulanqab

The decisive battle for large models is fought in server rooms in Inner Mongolia at minus 20 degrees Celsius.

Just before the release of V4, two surprising new positions appeared in DeepSeek's job postings: Senior Delivery Manager and Senior Operations Engineer for Data Centers, offering a monthly salary of up to 30,000 RMB (14-month pay) and stationed in Ulanqab, Inner Mongolia.

This is a company once known as an "asset-light" firm, one that prided itself on "minimalism, purity, focusing solely on algorithms." Over the past two years, its proudest label was "achieving a lot with a little": it created DeepSeek-R1, which sent US AI stocks tumbling, at a training cost of under $6 million.

But V4's immense demand for computing power, coupled with increasingly tight US chip sanctions, has completely shattered this asset-light pastoral idyll.

In 2025, the US Department of Commerce further tightened export controls on AI chips to China. Nvidia's H100 and H800 are already banned, and even the downgraded H20 has been added to the control list. This means DeepSeek's future expansion of computing power must fully transition to the Huawei Ascend ecosystem. In V4's release notes, the company explicitly stated that the new model benefits from the "Huawei Ascend" platform and hinted that after the volume production of Ascend 950 supernodes in the second half of the year, the price of the Pro version will be significantly reduced.

This transition is not just a matter of rewriting a few lines of code in an adaptation layer. It requires building a complete domestic computing infrastructure from scratch at the physical level.

V4's trillion-parameter scale (pre-trained on a massive 33 trillion tokens), combined with the huge computational demands of a million-token context, means you need tens of thousands of Ascend chips, server rooms to house them, power grids to supply those rooms, and an operations team willing to keep these machines running in the freezing minus 20-degree Celsius wind.

Liang Wenfeng has shifted his methodology from the bit world to the atom world. Computing power ultimately needs to take root in steel, concrete, and power lines.

On one side, you have AI elites coding in Silicon Valley, wearing plaid shirts and sipping pour-over coffee; on the other, you have operations staff bundled in military greatcoats, guarding server rooms deep in the Inner Mongolian grasslands. This contrast forms the backdrop of China's AI resistance against chip embargoes. The cold wind of Ulanqab has become China's most powerful physical "power-up."

Transforming from a pure algorithm company into an "asset-heavy" player with its own server rooms means DeepSeek has bid farewell to the guerrilla warfare era of "small force works miracles" and officially donned the armor of a heavy infantryman. The cost of this transformation is enormous: building server rooms, buying chips, and laying network cables are each a bottomless pit. More importantly, this asset-heavy model means operational costs will rise steeply while DeepSeek's commercial revenue remains extremely limited. Near-free pricing on top of these costs essentially means trading losses for an ecosystem, giving away free access in exchange for a say in infrastructure.

How long can a tough guy who once rejected all the tech giants and funded AI out of his own pocket through quantitative trading hold out in the face of this bottomless pit?

A 300 Billion RMB Compromise

In April, news emerged that DeepSeek had initiated its first external fundraising round, targeting a valuation of up to 300 billion RMB (approximately $44 billion), planning a capital increase of 50 billion RMB, of which 30 billion would be externally raised. Rumors of Tencent and Alibaba competing to join the round ran rampant.

Many believe this is purely because building server rooms is too expensive. But in reality, the core driver for DeepSeek's fundraising, besides buying graphics cards, is that "pure technological idealism" crumbles easily against the talent war machines of big tech companies.

During the critical sprint phase of V4 development, domestic tech giants launched a frenzied poaching campaign targeting DeepSeek. From the second half of 2025 to the present, at least 5 core R&D members have confirmed their departure. Wang Bingxuan, a core author of the first-generation model, went to Tencent. Luo Fuli, a core contributor to V3, was poached by Xiaomi with a multi-million-dollar salary offer from Lei Jun. Guo Daya, a core author of R1, joined ByteDance's Seed team.

This is the market economy at its most naked: when your competitors have unlimited ammunition and you insist on operating with your own funds, the talent market becomes your weakest link. You can ask geniuses to work overtime for lower pay in the name of changing the world, but when a big tech company slaps down a check worth millions in cash and stock options, along with promises of unlimited computing resources, the pricing power of idealism is no longer in your hands.

Liang Wenfeng's dilemma is one faced by every entrepreneur trying to build a "slow company" in China. In a market where big tech companies can buy anyone with money, the path of "no fundraising, no commercialization, just technology" is an extreme luxury. Its price is accepting that your team could be hollowed out at any moment by competitors with cash.

This fundraising at a 300 billion RMB valuation isn't Liang Wenfeng's compromise with capital. It's a ransom war he launched against big tech companies to secure the V4 development team. He had to sit at the capital table, using real money to give the remaining talent enough reason to stay.

The potential entry of Tencent and Alibaba means DeepSeek is no longer that lonely, pure technological idealist. It has become a company with external shareholders and commercial pressure. The cost of this transformation is the inevitable dilution of what Liang Wenfeng once prided himself on most: "research freedom free from external pressure."

But he had no choice.

When idealism is forced to don the armor of capital, where does the confidence come from to keep this massive machine running, to keep the server room in Ulanqab humming day and night?

Another Kind of "Massive Force Works Miracles"

The answer isn't in the algorithms; it's in the power grid.

What Silicon Valley is most anxious about right now isn't a shortage of chips, but a shortage of electricity. Musk is frantically building a super data center in Memphis, Tennessee. OpenAI has started discussing investing in nuclear power plants. Microsoft signed a power purchase deal to restart a reactor at the Three Mile Island nuclear plant in Pennsylvania to power its AI data centers. The endgame of computing power is electricity: an incredibly cold, hard physical reality.

In the US, a large AI data center consumes as much electricity as a medium-sized city. Yet the US power grid is an old network built in the 1950s that expands slowly, is regionally fragmented, and cannot keep pace with the expansion of AI computing.

What supports China's AI in catching up with the US isn't just the algorithm geniuses earning multi-million-dollar salaries, but also the unheralded ultra-high-voltage power transmission lines.

The data center in Ulanqab could be built thanks to Inner Mongolia's abundant green electricity and China's world-leading grid dispatch capabilities. Public data shows that Ulanqab's green electricity installed capacity is 19,402 MW, accounting for approximately 65.9% of total capacity. Local low-cost green electricity is about 50% cheaper than in eastern regions. Combined with an average annual temperature of only 4.3 degrees Celsius, providing natural cooling for nearly 10 months, this allows equipment to save 20% to 30% on energy.
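
As a back-of-the-envelope illustration, the two figures above can be combined into a relative power bill. This is a sketch under stated assumptions, not a published number: it assumes the baseline is an identical workload in an eastern data center, that the 20% to 30% saving applies to total electricity consumption, and that the price discount and the energy saving are independent and therefore multiply. The function name is hypothetical.

```python
def relative_power_bill(price_discount, energy_saving):
    """Power bill as a fraction of the assumed eastern baseline.

    price_discount: fractional reduction in price per kWh (0.50 = 50% cheaper)
    energy_saving:  fractional reduction in kWh consumed (0.20 = 20% less energy)
    Assumption: the two effects are independent, so they multiply.
    """
    return (1.0 - price_discount) * (1.0 - energy_saving)


# Figures from the paragraph above: 50% cheaper power, 20-30% less energy.
for saving in (0.20, 0.30):
    bill = relative_power_bill(0.50, saving)
    print(f"energy saving {saving:.0%}: bill is {bill:.0%} of the baseline")
```

If those assumptions hold, the electricity bill in Ulanqab would come to roughly 35% to 40% of the eastern baseline for the same workload.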

When DeepSeek V4 runs, what truly powers it is China's vast and incredibly cheap electricity infrastructure. This is another dimension of "massive force works miracles."

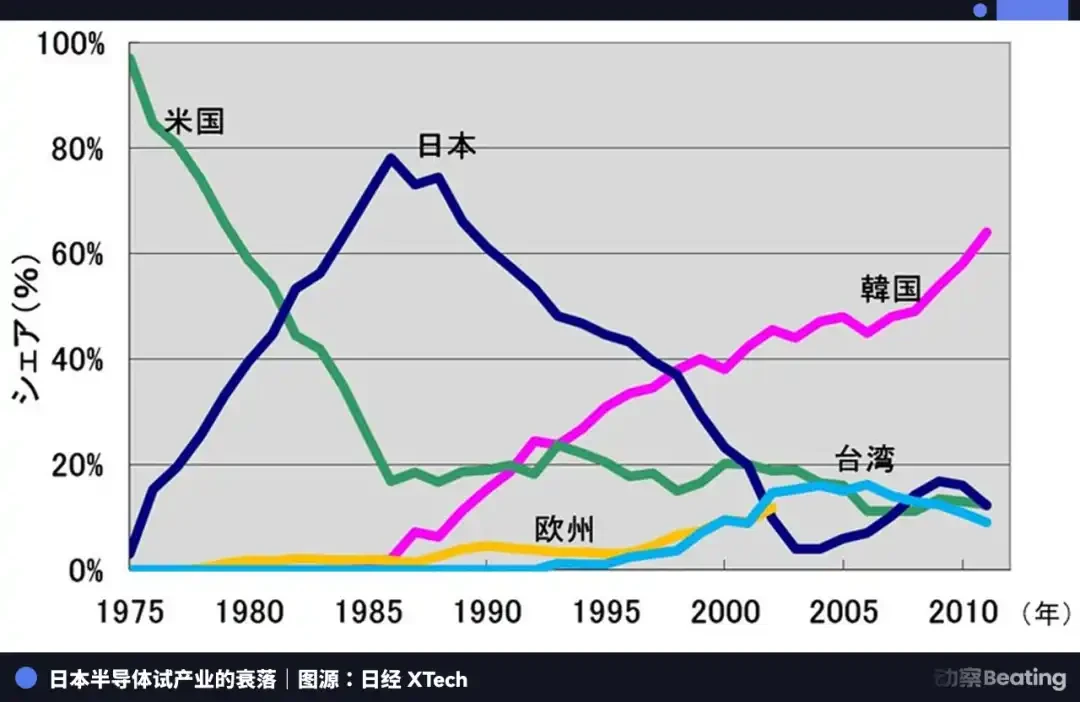

There is a very interesting and cruel historical parallel here. In 1986, the US crushed Japan's semiconductor industry with the US-Japan Semiconductor Agreement, forcing Japan to open its markets and accept price controls. Japan's global semiconductor market share plummeted from 40% in 1986 to 15% in 2011. Japan hasn't recovered in thirty years.

Today, the US is trying to use the same logic to lock down China's AI – blocking chips, limiting computing power, cutting off technology supply chains. But China's path of counterattack is completely different from Japan's. Japan's failure back then stemmed from its semiconductor industry being highly dependent on US technology licenses and market access. Once cut off, it lost its ability to survive independently. China's AI counterattack, however, starts by rebuilding from the most fundamental physical infrastructure: making its own chips, building its own server rooms, laying its own power grids, and open-sourcing its own models.

This is an extremely cumbersome, extremely expensive path, but also one incredibly difficult to "strangle." While Silicon Valley builds its magnificent Tower of Babel in the cloud, China digs trenches in the mud.

If the battle for computing power in the cloud is a brutally costly war of attrition, besides building server rooms and laying power lines in Inner Mongolia, is there another path to escape cloud hegemony?

Escaping the Cloud

As Silicon Valley giants build ever larger data centers, with some, such as OpenAI, even planning trillion-dollar computing clusters, China's line of counterattack has quietly moved underground.

The ultimate weapon against the US computing power blockade isn't creating a chip stronger than the H100, but stuffing the large model into everyone's smartphone.

Since we can't outgun the heavy firepower in the cloud server rooms, we shift the battlefield back to 1.4 billion smartphones and edge devices. This is a classic guerrilla warfare tactic, and one extremely difficult to block. You can ban the export of high-end GPUs, but you can't confiscate the phone in every Chinese person's pocket.

In 2026, fueled by the computing power anxiety sparked by DeepSeek, Chinese phone makers like Xiaomi, OPPO, and vivo launched a frenzied shift to the edge. They are no longer satisfied with being a mere display that calls cloud APIs. Through extreme model distillation and compression, they are forcibly stuffing a miniaturized superbrain into affordable domestic smartphones.

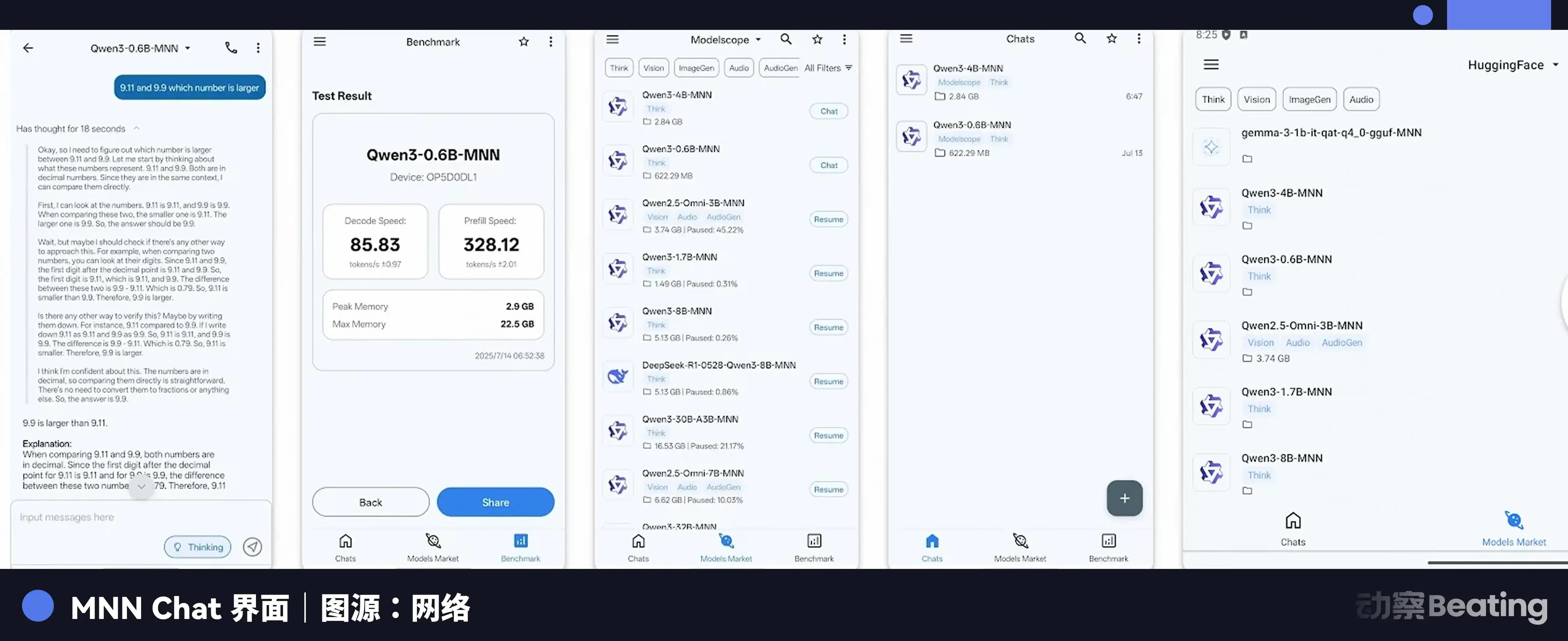

The core of this technical path is "distillation." Simply put, it uses a large "teacher" model to train a smaller "student" model, teaching the student the teacher's "way of thinking" rather than having it memorize all the teacher's "knowledge." Through extreme distillation and quantization compression, a large model that originally required hundreds of GPUs to run can be compressed to a size of 1.2 to 2.5 GB and run smoothly on a single phone chip.
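
The two ideas in this paragraph can be sketched in a few lines. The first function is the classic soft-label distillation loss (the KL divergence between temperature-softened teacher and student output distributions); it is a generic textbook formulation, not a description of how DeepSeek or any phone maker actually trains its models. The second function is simple storage arithmetic showing why quantization shrinks a model. All logits, parameter counts, and bit widths below are hypothetical values chosen for illustration.

```python
import math


def softmax(logits, temperature=1.0):
    """Turn raw scores into probabilities; higher temperature gives softer output."""
    scaled = [x / temperature for x in logits]
    peak = max(scaled)  # subtract the max for numerical stability
    exps = [math.exp(x - peak) for x in scaled]
    total = sum(exps)
    return [e / total for e in exps]


def distillation_loss(teacher_logits, student_logits, temperature=2.0):
    """KL(teacher || student) on softened distributions, scaled by T^2.

    Minimizing this pushes the student to copy how the teacher spreads
    probability across all answers, not just its top pick.
    """
    p = softmax(teacher_logits, temperature)
    q = softmax(student_logits, temperature)
    kl = sum(pi * math.log(pi / qi) for pi, qi in zip(p, q) if pi > 0)
    return kl * temperature ** 2


def model_size_gb(num_params, bits_per_weight):
    """Approximate weight storage in GB (weights only, no runtime overhead)."""
    return num_params * bits_per_weight / 8 / 1e9


# Hypothetical logits over three candidate next tokens.
teacher = [4.0, 2.5, 0.5]
good_student = [3.8, 2.6, 0.4]
poor_student = [0.5, 2.5, 4.0]
print("loss, close student:", distillation_loss(teacher, good_student))
print("loss, poor student: ", distillation_loss(teacher, poor_student))

# Hypothetical student sizes: parameter count and quantization bit width.
for params, bits in ((1.5e9, 16), (1.5e9, 8), (3.0e9, 4)):
    print(f"{params / 1e9:.1f}B params at {bits}-bit: {model_size_gb(params, bits):.2f} GB")
```

The size arithmetic shows how a model with a few billion parameters can land in the 1.2 to 2.5 GB range once its weights are stored at 4 or 8 bits instead of 16.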

Mobile AI applications like MNN Chat already allow users to run the distilled DeepSeek R1 model locally on their phones. The significance of this edge AI is that you don't need to be constantly connected to 5G, nor do you need to pay a subscription fee to Silicon Valley giants every month. The large model is in your pocket. It works even offline, without spending a single cent on cloud computing.

Since I can't afford to build a central heating super boiler room, I'll give every household their own small stove.

Of course, edge AI isn't perfect. Limited by the computing power and memory of phone chips, the upper limit of capabilities for edge models is far lower than the ultra-large cloud models. It can help you write an email, translate a piece of text, or summarize an article. But if you ask it to derive a complex mathematical theorem or analyze a several-hundred-page legal contract, it will still struggle.

But this is already enough. Because for the vast majority of ordinary people, the AI they need has never been that superbrain capable of deducing mathematical theorems. What they need is a "personal assistant" to handle their daily chores.

When large models become incredibly cheap and can even fit in your pocket, how will they change the corners forgotten by Silicon Valley?

Digital Equality for the Global South

If you're sitting in a panoramic glass office in Manhattan, you'd probably think GPT-5.5's price increase to $100 is worth it, as it can help you draft a perfect merger report in seconds.

But if you're standing in a cornfield in Uganda, East Africa, facing crops withered by climate anomalies, you could never afford the $100 subscription fee, because the average monthly income in Uganda is less than $150.

While Silicon Valley giants discuss how to rule the world with AI, farmers in Uganda and poor students in Southeast Asia have, thanks to DeepSeek's open-source models, entered the digital age for the first time.

GPT-5.5 serves those who can pay, and its training data is almost entirely in English. If you ask it a question in Swahili or Javanese, it not only answers haltingly but also consumes several times more tokens than the same question would in English. Silicon Valley giants, deeming these fringe markets unprofitable, have voluntarily abandoned them.

China's open-source models, on the other hand, have become the digital infrastructure for the Global South.

In Uganda, the local NGO Sunbird AI used the Sunflower system, fine-tuned from the Chinese open-source model Qwen, to expand the number of supported local languages from 6 to 31. This system is now deployed in the Ugandan government's agricultural extension service, sending planting advice to farmers in Swahili.

In Malaysia, tech companies fine-tuned an AI model compliant with Islamic law using an open-source base. It not only supports Malay and Indonesian but also ensures its output aligns with the religious and cultural standards of the Muslim market. From Indonesia's digital identity system to Kenya's Swahili medical Q&A, Chinese technology is penetrating the social infrastructure of these countries.

Data released by OpenRouter, the world's largest AI model API aggregation platform, in early 2026 shows that the token consumption of Chinese AI models on the platform surpassed that of American competitors for the first time. In one statistical week, the world's top 10 most popular models consumed a total of 8.7 trillion tokens, with Chinese models accounting for approximately 61%.

Open source has broken the US monopoly on AI discourse power, allowing resource-poor developing countries to leap over the digital divide. This isn't some grand narrative of a Sino-American rivalry; it's the true "besieging the cities from the countryside" of the AI era.

China's open-source AI strategy is objectively becoming an extremely effective "soft power" output. While Silicon Valley giants build high walls in the cloud, trying to become the digital landlords of the new era, those "tech refugees" who can't afford the rent have finally found their spark in the soil of open source and edge computing.

Tap Water

Technology should never be an inaccessible luxury.

Silicon Valley built