The Worst Memory Shortage in History Has Pushed Samsung to World No. 2

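- Core view: AI infrastructure demand is pushing memory chips into a "super boom cycle." The bottleneck has spread from GPUs to memory hardware such as HBM, DRAM, and NAND. Manufacturers such as Samsung, SK Hynix, and Micron are posting record profits, and the supply chain is shifting from "handshake agreements" to binding long-term contracts with large prepayments.

- Key points:

- Samsung's first-quarter net profit exceeded $30 billion, with semiconductors accounting for about 94% of operating profit, far surpassing its previous quarterly record.

- Memory chip prices rose about 100% quarter-over-quarter in the first quarter of 2026, roughly double the forecast, and DRAM margins reached 80%.

- Prioritizing HBM production has squeezed the supply of conventional DRAM and NAND, and new wafer fabs are not expected to come online until the end of 2027, deepening the shortage.

- To secure capacity, customers are accepting five-year contracts, 30% prepayments, or co-investment in fabs in place of the traditional "handshake agreements."

- Rising inference demand is expanding the general-purpose server market, further lifting demand for and profitability of conventional memory chips.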

Original Title: AI Has Made Memory Chips One of the World's Most Profitable Products

Original Author: Jiyoung Sohn, Andrew Barnett, The Wall Street Journal

Original Translation: Peggy, BlockBeats

Editor's Note: Nvidia was once the most prominent winner of the AI infrastructure cycle, but the latest round of the chip market is showing that AI's bottleneck lies not only in GPUs but also in memory.

Over the past year, global capital expenditure has continued to flow into AI, first driving up demand for high-bandwidth memory (HBM) and subsequently squeezing the supply of traditional DRAM and NAND flash memory. As large model training requires GPUs paired with HBM, and inference demand drives the expansion of general-purpose servers, memory chips have transformed from a cyclical industry into one of the scarcest and most profitable segments of the AI supply chain.

This is also the reason behind the collective surge in performance for Samsung, SK Hynix, and Micron. Samsung's net profit exceeded $30 billion in Q1, with the semiconductor business contributing the vast majority of profits, as memory chip prices surged nearly 100% quarter-over-quarter in the first three months of 2026, far exceeding market expectations. More importantly, this is not merely a short-term price hike but a repricing of supply and demand dynamics: the long construction cycles for new fabs, coupled with HBM consuming more capacity, are further constricting traditional memory supply.

Against this backdrop, memory chips are transitioning from "supporting components" to "strategic resources." Server, PC, and smartphone manufacturers are paying premiums to lock in capacity, even accepting five-year contracts, prepayments, and joint investments in fabrication plants. The supply relationships previously maintained by handshake agreements are shifting towards more binding long-term commitments. In other words, the AI competition is no longer just a contest between models, computing power, and cloud platforms; it has also become a battle for the underlying supply chain.

What is most noteworthy is not how much money memory chip companies are making this year, but that the bottlenecks of AI infrastructure are spreading from a single type of computing power to a broader hardware ecosystem. GPUs determine whether a model can be trained, HBM determines whether data can be exchanged at high speed, and DRAM and NAND flash influence the cost structure of inference and server expansion. As more companies believe that "whoever controls memory supply will control AI," the profit bonanza for memory chips is also a signal that AI infrastructure has entered a resource competition phase.

Below is the original text:

Chip Manufacturers Entering the Global Top 20 by Net Profit

By the end of last year, global investment in artificial intelligence had pushed the memory chip industry into a "super boom cycle." Profits hit new records, and prices were expected to rise another 50% in the first three months of 2026 compared to the previous quarter.

But it didn't stop there. The reality turned out to be better. Much better.

On Thursday, Samsung Electronics reported a first-quarter net profit equivalent to more than $30 billion. This not only far exceeds its previous single-quarter profit record but also comes close to the company's past annual profit highs. Approximately 94% of Samsung's first-quarter operating profit came from its semiconductor business.

Samsung's main competitors in the memory chip space, South Korea's SK Hynix and US-based Micron Technology, have also recently reported equally surprising results. These three companies dominate the global memory market, and their memory chips are used alongside Nvidia's processor chips for AI computing.

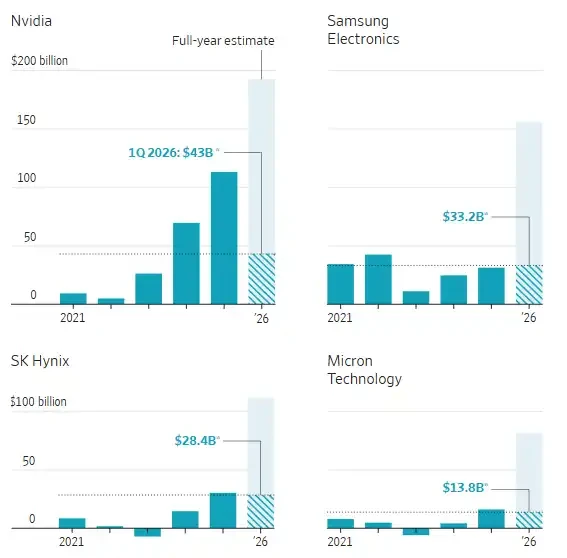

Annual Net Profit of Semiconductor Companies

In the Q1 data, Samsung and SK Hynix figures are for the fiscal quarter ending March 2026 (actual results); Micron figures are for the fiscal quarter ending February 2026 (actual results); Nvidia figures are for the fiscal quarter ending April 2026 (estimated results).

Note: Samsung data includes all of its businesses, but the semiconductor business contributed the majority of profits. Figures are converted at an exchange rate of 1 USD to 1421.22 KRW. Source: FactSet, Andrew Barnett / The Wall Street Journal

Although there are growing concerns about whether AI services will ultimately generate substantial profits, the companies involved in building the related infrastructure have already reaped an epic windfall.

And this historic surge shows no signs of ending soon. Jaejune Kim, Executive Vice President of Samsung's memory business, stated during Thursday's earnings call that based on orders already booked, the supply shortage is expected to worsen further next year. "The currently available production capacity is far from sufficient to meet customer demand," he said.

Since the beginning of this year, Samsung's stock price has risen 72%. SK Hynix's stock is up 90%, and Micron is up 65%.

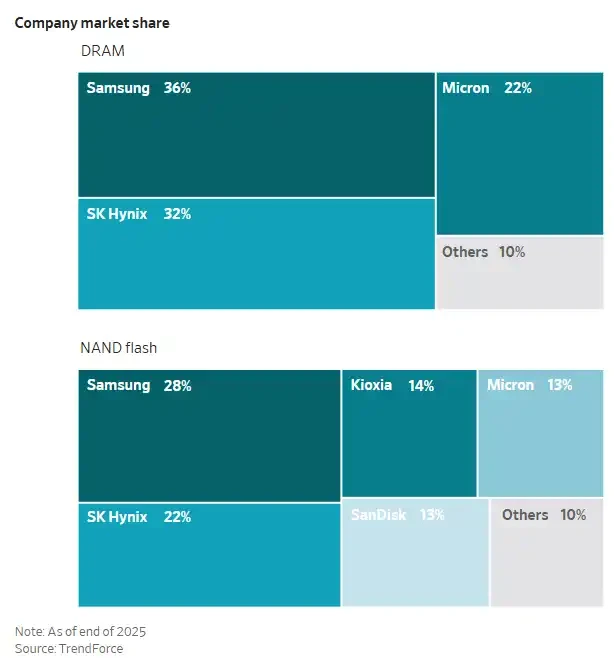

Company Market Share

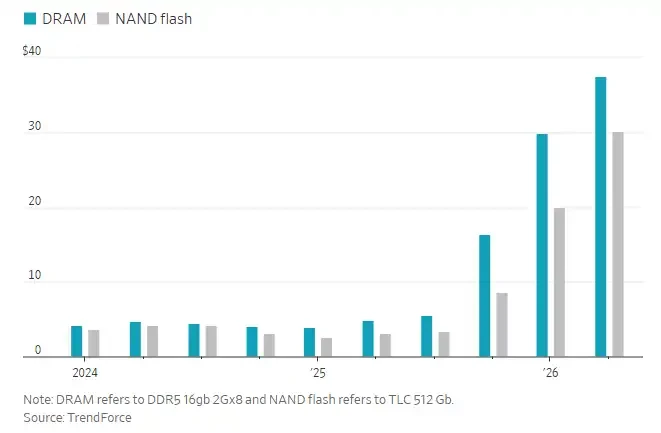

According to data from tech market research firm TrendForce, memory chip prices in the first three months of 2026 rose nearly 100% compared to the previous quarter, approximately double the initial expected increase.

In recent years, memory chip manufacturers have prioritized the production of specialized memory chips required for AI, namely High Bandwidth Memory (HBM). This, in turn, has limited the supply of traditional memory chips used in smartphones, personal computers, and general-purpose servers. Training large language models typically requires pairing Nvidia's Graphics Processing Units (GPUs) with HBM.

More recently, demand for inference has started to rise. Inference refers to the computational process required for a trained AI model to respond to user queries. This has driven growth in demand for general-purpose servers, which use traditional memory chips, thereby pushing the profitability of Samsung, SK Hynix, and Micron to a new level.

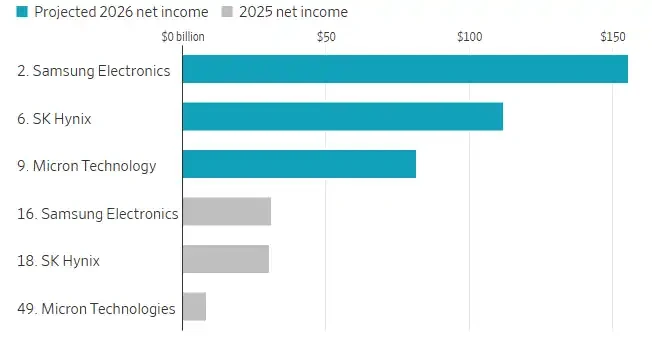

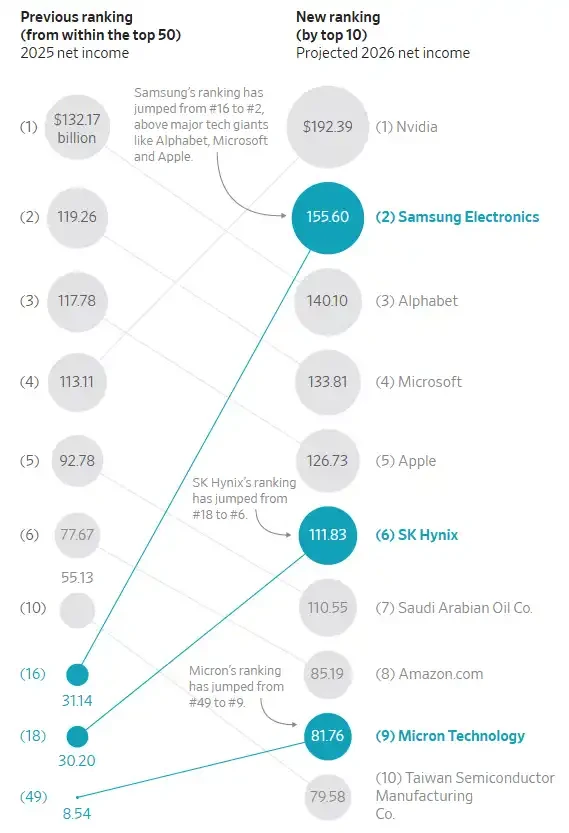

According to estimates from FactSet, these three companies are expected to achieve a combined net profit of approximately $350 billion in 2026. Each is poised to rank among the top ten most profitable listed companies globally, with Samsung projected to leapfrog Alphabet, Microsoft, and Apple to take second place. A year ago, none of these memory chip manufacturers were in the top ten.

Ranking of Selected Chip Manufacturing Companies by Net Profit

A chip manufacturing plant, or fab, can cost over $20 billion and take several years to build. Industry analysts say Samsung, SK Hynix, and Micron are all constructing new fabs, but capacity is unlikely to be fully released until late 2027 or 2028. Meanwhile, many production lines are already allocated to HBM, which consumes more capacity than traditional memory chips.

Memory chips are mainly divided into two categories: DRAM, used for temporary storage in servers, PCs, and other electronic devices to support faster data processing; and NAND flash memory, used for long-term data storage, such as storing photos in a mobile phone.

HBM is made by stacking layers of DRAM chips, which are then packaged together with processors from companies like Nvidia to accelerate AI computing. Nvidia works closely with Samsung, SK Hynix, and Micron.

MS Hwang, a semiconductor research analyst at Counterpoint, stated that the operating profit margins for both types of memory chips have roughly doubled compared to normal levels, with DRAM margins reaching around 80% and NAND flash margins reaching up to 60%.

Memory Chip Contract Prices

Hwang from Counterpoint added that many large companies in the server, PC, and smartphone sectors are paying premiums and purchasing memory chips in large volumes to lock in more supply and limit the capacity available to competitors. "The underlying logic is that whoever controls memory supply will dominate AI," he said.

Marcus Chen, Executive Vice President at global electronic components distributor Fusion Worldwide, stated, "What we are seeing today is the most severe memory shortage the market has ever experienced." Most of Chen's clients currently receive only 30% to 50% of the memory chips they need. "Some clients get even less," he said.

For a long time, clients and memory chip manufacturers relied primarily on "handshake agreements" to secure long-term supply. Now, in some cases, they are moving toward binding formal contracts. Peter Lee, a semiconductor analyst at Citigroup, said some contracts last for five years and require clients to pay approximately 30% of the cost upfront or co-invest in the construction of new memory chip fabs. "We have seen clients willing to go to this extent," Lee said.