How Ordinary People Can Get a Piece of the Pie in the AI Era's Capital Wave

- Core Viewpoint: The value chain of the AI industry is structured as a five-layer technology stack: energy, chips, cloud computing, models, and applications. Currently, the vast majority of capital is flowing into the underlying infrastructure (the first three layers), rather than the surface-level applications that capture public attention. Real profits are also concentrated in the infrastructure layer, creating a pattern of "revenue flowing upward, capital settling downward."

- Key Elements:

- Capital Flowing to Infrastructure: The capital expenditure of the four major cloud providers is projected to reach $650-700 billion by 2026, with approximately 75% ($450 billion) invested in AI infrastructure, such as data centers, chips, and power systems.

- High Growth, High Consumption at the Model Layer: OpenAI's annual revenue grew 10-fold in two years to $20 billion, but it is projected to burn through $17 billion in 2026. High inference costs squeeze margins, and much of the revenue is absorbed by underlying compute expenses.

- Highly Concentrated and Profitable Infrastructure Layer: Nvidia holds roughly 92% of the AI GPU market share with a gross margin near 75%; TSMC monopolizes about 70% of chip manufacturing; ASML is the sole supplier of EUV lithography machines.

- Historical Pattern Analogy: The current development of AI is similar to the electricity revolution and the early internet era. The earliest wealth creators were the infrastructure builders "selling picks and shovels," not the end applications.

- Fierce Competition but Thin Profits at the Application Layer: The application layer has a massive market size but currently offers the thinnest profits and the most uncertain competition. Companies with unique data moats may ultimately prevail.

- Major Risks: These include capital misallocation (massive investments may not be supported by revenue growth), highly concentrated supply chains (exposed to geopolitical shocks, among other risks), and efficient open-source models (like DeepSeek) that could undermine the logic of infrastructure investment.

Original Title: If you don't understand AI by the end of this, the next decade will confuse you

Original Author: Anish Moonka

Original Compilation: Peggy, BlockBeats

Editor's Note: When people talk about AI, attention is often focused on the most visible aspects: chatbots, AI assistants, and various new applications. However, behind these products, a deeper industrial restructuring is taking place. From electricity and chips to data centers, and then to models and applications, AI is essentially a technology stack composed of multiple layers of infrastructure, and the flow of capital and profits is far more complex than what appears on the surface.

This article, from the perspective of the "Five-Layer AI Structure," systematically outlines this value chain: why hundreds of billions of dollars are flowing towards energy, chips, and cloud infrastructure; why model companies are burning through massive amounts of cash despite rapid growth; and where the real value in this technological revolution might be concentrated first.

By comparing AI to historical cycles like the electricity revolution and internet infrastructure development, the author attempts to answer a key question: in this technological wave that could reshape the global industrial structure, where is capital flowing, and how can ordinary people participate in this round of AI wealth opportunities?

The following is the original text:

Most people think AI is just a chatbot.

I can understand that. You open ChatGPT, ask it to revise an email, and it does it instantly. It feels like magic. Then you close the tab, thinking you've got AI figured out. But that's like swiping a Visa card once at a restaurant and then thinking you understand how Visa makes money. You've just used the product; you haven't seen the system.

For most of the past year, I've been trying to figure out where the real profits in AI actually flow. The slightly awkward truth is that it took me a long time to realize I was looking at the wrong layer. I was staring at ChatGPT, Claude, and Gemini: the things you can actually touch.

Meanwhile, $700 billion was quietly flowing into another set of infrastructure I couldn't even name: chips I'd never heard of, packaging technology acronyms that sound made up, cooling systems, power plants. In Texas, Iowa, and Hyderabad, concrete is being poured for data centers.

A year ago, almost no one around me was talking about this. Now, everyone is.

This article will be long. If you don't have time to read it now, you can save it for later.

I want to walk you through the entire AI value chain: starting from the electricity that powers data centers, all the way to the app on your phone.

And I'll do it in a way that makes sense even if you've never read a public company's annual report in your life. I'll explain every term; I'll back every claim with real data; and for the parts I'm still unsure about, I'll be honest about that too, because there are a few.

Let's begin.

1. The Five-Layer Cake (Why No One Talks About the Bottom Four)

AI is infrastructure. Like the internet, like electricity, it needs factories. —Jensen Huang

Most people understand AI like this: a smart computer that answers questions.

That's like saying the internet is "a place to watch videos." Technically true, but completely misses the point.

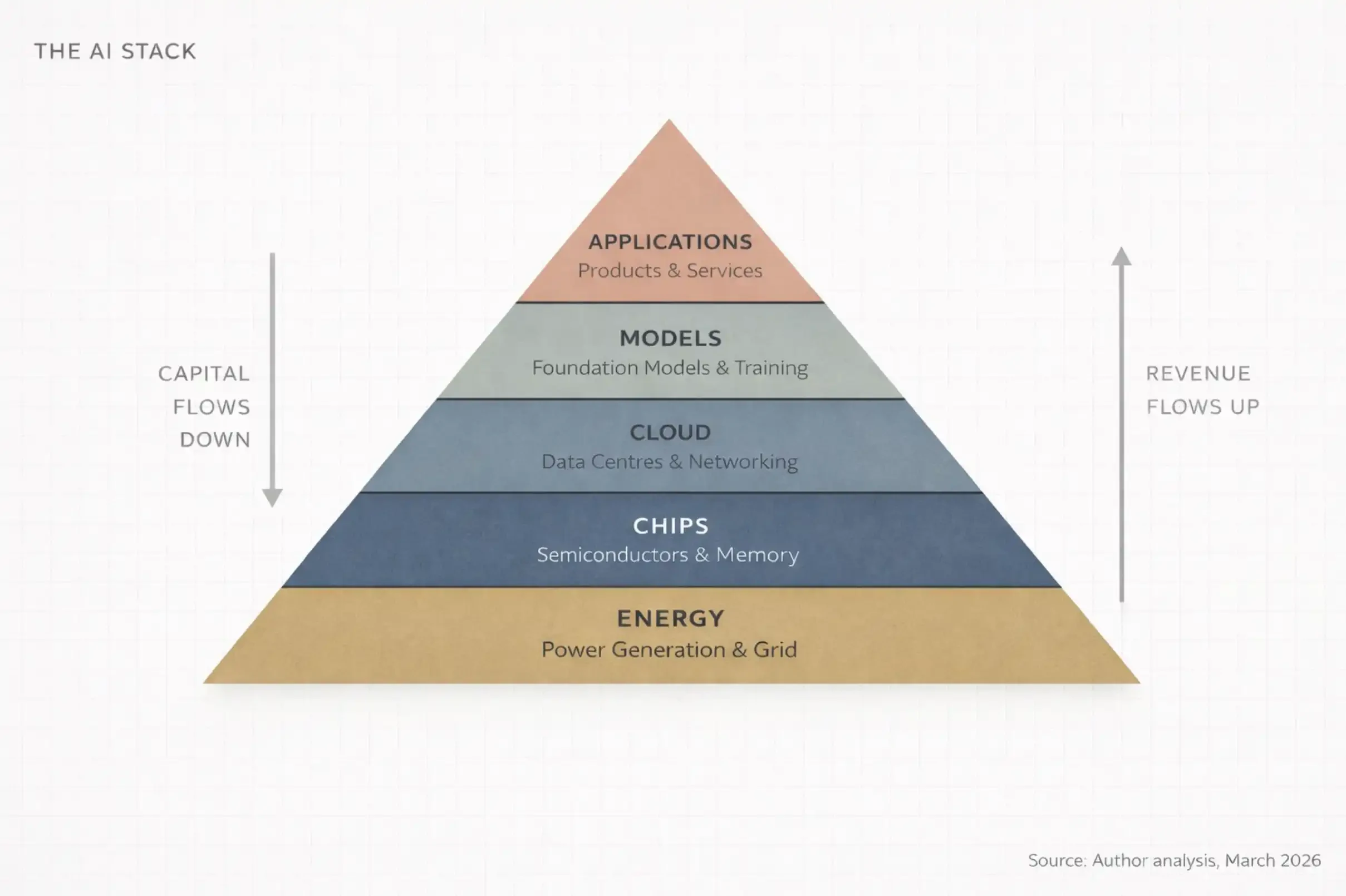

At the World Economic Forum in January 2026, Jensen Huang described AI as a five-layer system:

Energy

Chips

Cloud

Models

Applications

He called this entire system: "The largest infrastructure buildout in human history."

Think about that word first: Infrastructure.

Roads. Power grids. Water systems. These things make modern civilization run, but people usually only notice them when they break.

AI is becoming the same thing—invisible, indispensable, and incredibly expensive to build. I call this entire structure the AI Stack. It's made of five layers, stacked on top of each other, each supporting the one above, with money flowing both ways between them.

The simplest version I can give you is this:

Energy: You need electricity to run computers, and a lot of it.

Chips: You need specialized processors for computation. These aren't the CPUs in your laptop.

Cloud: You need massive, warehouse-sized data centers filled with these chips, connected by extremely high-speed networks.

Models: You need the actual AI software—the "intelligent brain" that learns patterns from data.

Applications: You need the products people actually use, like ChatGPT, Google Search, or a bank's fraud detection system.

Any discussion of AI that only talks about the fifth layer (applications) is missing 80% of reality. And if you're an investor, an entrepreneur, or just someone trying to understand where the world is headed, the crucial point is that money doesn't distribute evenly across these five layers. It concentrates, compounds, and flows to a very few critical nodes.

And right now, that concentration is happening in places most people aren't even noticing.
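To make the "80%" figure concrete, here is a minimal Python sketch of the stack as an ordered list. The layer names are the five listed above; the `LAYERS` constant and the `share_below` helper are my own illustrative names. It only counts layers, not dollars, so treat it as a way to picture the structure rather than a measurement.

```python
# The five layers of the AI stack, ordered from bottom (first) to top (last).
LAYERS = ["Energy", "Chips", "Cloud", "Models", "Applications"]


def share_below(layer: str) -> float:
    """Fraction of the stack's layers that sit beneath the given layer."""
    return LAYERS.index(layer) / len(LAYERS)


if __name__ == "__main__":
    hidden = share_below("Applications")
    print(f"Layers beneath Applications: {hidden:.0%} of the stack")
```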

2. Tracking the Money Flow (The Answer Isn't Where You Think)

People's attention almost always focuses on the application layer. ChatGPT, GitHub Copilot, Claude, Perplexity.

These are products you can directly use, so it's easy to think the AI story is probably about these apps.

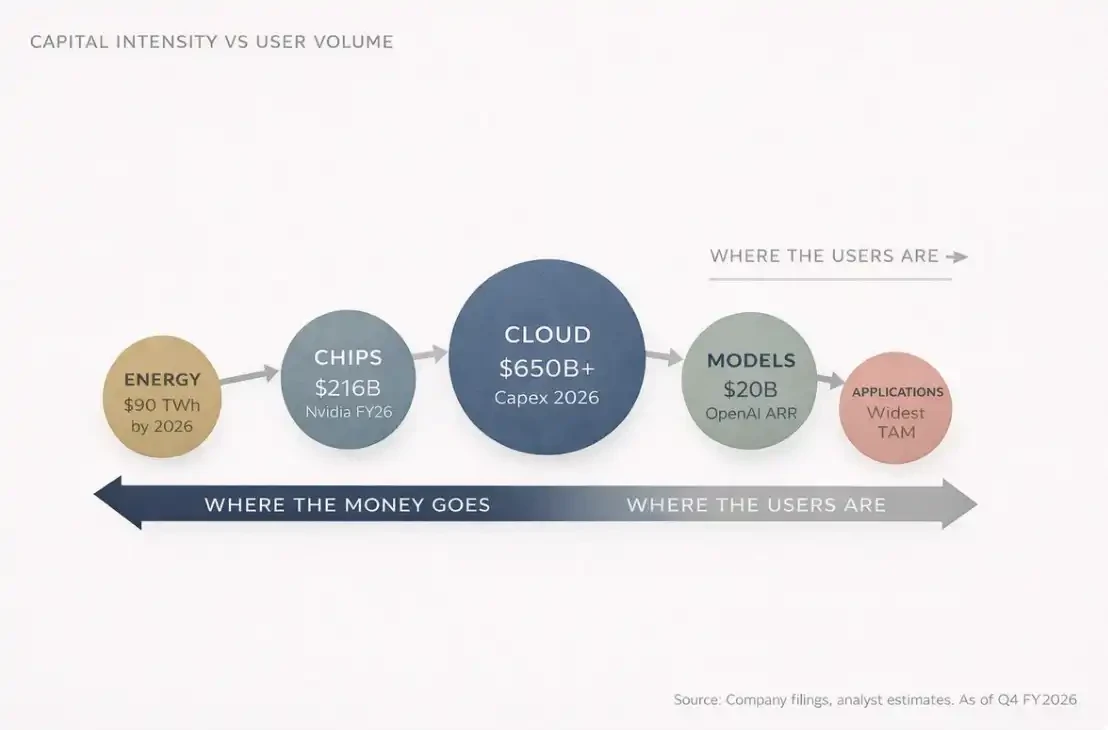

But most people miss one thing. By 2026, the combined annual capital expenditure (CapEx) of the world's four largest cloud computing companies (Amazon, Microsoft, Google, Meta) is projected to reach $650 to $700 billion.

That's for one year, for four companies combined.

That number is roughly equivalent to Switzerland's entire annual GDP. And about 75% of that, around $450 billion, will go directly into AI infrastructure.

Not chatbots, not applications. But buildings, chips, fiber optics and networking, cooling systems—things almost no one talks about at cocktail parties. That's precisely where the money is.

Think about it: before anyone can use ChatGPT, someone must first build a shopping-mall-sized data center, install tens of thousands of specialized processors inside, connect them with networking equipment worth more than the market cap of most companies, and power the whole system with enough electricity for a small city. Then they have to run it like that, every day.

That's layers one to three: Energy, Chips, Cloud Infrastructure. These are the invisible layers, and where truly massive capital is being deployed.
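A quick sanity check on the CapEx numbers, as a minimal Python sketch. The figures are the ones quoted above; the variable and function names are my own. Note that 75% of $650 to $700 billion works out to somewhat more than $450 billion, so the two figures are presumably rounded from different estimates. Either way, the bulk of the spending lands in infrastructure.

```python
# Figures quoted in the text, in billions of US dollars.
CAPEX_LOW, CAPEX_HIGH = 650.0, 700.0  # projected 2026 CapEx range (four companies)
AI_SHARE_QUOTED = 0.75                # quoted share going to AI infrastructure
AI_SPEND_QUOTED = 450.0               # quoted dollar amount for that share


def ai_spend_range(low: float, high: float, share: float) -> tuple[float, float]:
    """Dollar range implied by applying the quoted share to the CapEx range."""
    return low * share, high * share


def implied_share_range(spend: float, low: float, high: float) -> tuple[float, float]:
    """Share range implied by the quoted dollar amount (smallest share first)."""
    return spend / high, spend / low


if __name__ == "__main__":
    lo, hi = ai_spend_range(CAPEX_LOW, CAPEX_HIGH, AI_SHARE_QUOTED)
    print(f"75% of the CapEx range: ${lo:.1f}B to ${hi:.1f}B")
    s_lo, s_hi = implied_share_range(AI_SPEND_QUOTED, CAPEX_LOW, CAPEX_HIGH)
    print(f"$450B as a share of CapEx: {s_lo:.1%} to {s_hi:.1%}")
```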

Someone might ask: "What about OpenAI? Haven't they made billions already?"

True.

By the end of 2025, OpenAI's annualized recurring revenue (ARR) had reached $20 billion. A year earlier it was $6 billion, and the year before that, $2 billion.

That's 10x growth in two years. Few companies in business history have grown revenue this fast at this scale.

The problem is, the costs are equally staggering.

2025: OpenAI burned roughly $9 billion in cash

2026: Projected to burn $17 billion

Just the inference cost—the cost of actually running the model when you ask it a question:

2025: $8.4 billion

2026 projected: $14.1 billion

Based on current projections, OpenAI might not reach cash flow breakeven until 2029 or 2030.
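To put the growth and the burn side by side, here is a small Python sketch using only the figures quoted above. The dictionary and function names are my own, and the 2026 values are projections rather than reported results.

```python
# Figures quoted in the text, in billions of US dollars.
ARR = {2023: 2.0, 2024: 6.0, 2025: 20.0}   # year-end annualized recurring revenue
CASH_BURN = {2025: 9.0, 2026: 17.0}        # 2026 is a projection
INFERENCE_COST = {2025: 8.4, 2026: 14.1}   # 2026 is a projection


def growth_multiple(start: float, end: float) -> float:
    """How many times larger `end` is than `start`."""
    return end / start


def annual_growth_rate(start: float, end: float, years: int) -> float:
    """Compound annual growth rate between two values `years` apart."""
    return (end / start) ** (1 / years) - 1


if __name__ == "__main__":
    multiple = growth_multiple(ARR[2023], ARR[2025])
    cagr = annual_growth_rate(ARR[2023], ARR[2025], 2)
    print(f"ARR 2023 to 2025: {multiple:.0f}x, or {cagr:.0%} compounded per year")

    burn = growth_multiple(CASH_BURN[2025], CASH_BURN[2026]) - 1
    inference = growth_multiple(INFERENCE_COST[2025], INFERENCE_COST[2026]) - 1
    print(f"Projected cash burn growth, 2025 to 2026: {burn:.0%}")
    print(f"Projected inference cost growth, 2025 to 2026: {inference:.0%}")
```

The point of the comparison: revenue is compounding at an extraordinary rate, but the projected costs are still growing by well over half in a single year.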

So the question is: where does all that burned money go?

The answer: it flows down the AI stack.

To:

Microsoft Azure (OpenAI is contractually obligated to pay Microsoft 20% of revenue until 2032)

Nvidia's GPUs

Engineering companies building data centers

And energy companies providing the power

If you stare at this system long enough, you see an almost circular structure:

Microsoft invests in OpenAI

OpenAI uses that money to buy Azure cloud services

Azure uses the revenue to buy Nvidia chips

Nvidia reports record profits

Everyone applauds

And the money keeps flowing down.

There's an important structural fact in the AI stack:

Most users are at the top (application layer)

Most profits are at the bottom (infrastructure layer)

And this misalignment between where users are and where profits are is the core of the entire AI investment thesis.

This is the first law of the AI value chain: Revenue flows up, capital settles down.

3. You've Seen This Movie Before

All of humanity's problems are essentially engineering problems, and engineering problems can eventually be solved. —Buckminster Fuller

If you want to truly understand what's happening with AI, look back at the history of the electricity revolution between 1880 and 1920.

In 1882, Thomas Edison built the first commercial power station on Pearl Street in Manhattan, New York. At the time, most people thought electricity was just a novelty, a "fancier" way to light things. After all, gas lamps worked fine. Who really needed this?

But in just 40 years, electricity completely reshaped almost every industry: manufacturing, transportation, communication, healthcare, entertainment.

The real winners of that revolution weren't the people who invented the light bulb, but those who built the infrastructure: General Electric, Westinghouse Electric, power companies, copper mining companies, engineering and construction firms.

Today, AI is repeating the same pattern, just compressed into years instead of decades.

Compare the two chains:

AI System: AI → Data Centers → Chips → Raw Materials → Energy

Electricity System: Electricity → Factories → Machines → Raw Materials → Coal/Hydropower

The paths are almost identical. And the winners, once again, are not primarily at the application layer, but at the infrastructure layer.

I call this phenomenon Infrastructure Gravity. Whenever a new computing platform emerges, the earliest wealth is always created by the "shovel sellers."

Applications catch up later. Applications get all the media attention. But infrastructure takes most of the profits.

For example, Nvidia's full-year revenue for fiscal year 2026 (ending January 2026) was $215.9 billion, up 65% year-over-year. Its Data Center business alone generated $62.3 billion in revenue in the last quarter, up 75% year-over-year. This business now accounts for 91% of Nvidia's total revenue.

In other words: one company, roughly $68 billion in total quarterly revenue, and more than 90% of it from a single business line.
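Those percentages can be checked with a few lines of Python. This is a rough sketch using the figures quoted above: the $68 billion quarterly total is the rounded number from the text, and the function names are my own.

```python
# Figures quoted in the text, in billions of US dollars.
FY2026_REVENUE = 215.9         # full fiscal year 2026
FY2026_YOY_GROWTH = 0.65       # up 65% year-over-year
DATA_CENTER_QUARTER = 62.3     # Data Center revenue, last quarter
DATA_CENTER_YOY_GROWTH = 0.75  # up 75% year-over-year
TOTAL_QUARTER = 68.0           # total quarterly revenue (rounded in the text)


def share(part: float, total: float) -> float:
    """Fraction of `total` contributed by `part`."""
    return part / total


def prior_period(value: float, yoy_growth: float) -> float:
    """Value one year earlier, implied by a year-over-year growth rate."""
    return value / (1 + yoy_growth)


if __name__ == "__main__":
    dc_share = share(DATA_CENTER_QUARTER, TOTAL_QUARTER)
    print(f"Data Center share of quarterly revenue: {dc_share:.1%}")
    prev_year = prior_period(FY2026_REVENUE, FY2026_YOY_GROWTH)
    print(f"Implied prior-year revenue: ${prev_year:.1f}B")
    prev_dc = prior_period(DATA_CENTER_QUARTER, DATA_CENTER_YOY_GROWTH)
    print(f"Implied Data Center revenue a year earlier: ${prev_dc:.1f}B")
```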

Look at chip manufacturing. TSMC held about a 70% share of the global foundry market in 2025, with sales of $122.5 billion. Second-place Samsung Electronics had only 7.2%. Even Standard Oil's monopoly looks less extreme by comparison.

Infrastructure always wins first. The real question is just how long this window lasts.

Ask anyone what the internet revolution was, and they'll say Google, Amazon, Facebook.

But if you ask where the earliest money was made, the answer is actually Cisco Systems, Corning, and the companies laying the fiber optic networks.

The same story, just a different era.

4. The Part No One Wants to Hear

The stock market is a device for transferring money from the impatient to the patient. —Charlie Munger

I have to confess something. When I first looked at AI as an investor, I made the same mistake as most people: I looked at the application layer. I saw ChatGPT's growth. I saw Anthropic raising billions. So I thought, AI companies will win, so invest in AI companies.

Later, three things changed my mind, and they happened in sequence.

Thing One: The Hottest Companies Are Burning Cash

I found that almost all "AI companies" are burning cash like crazy. OpenAI, Anthropic, Mistral AI, xAI. All spending money far faster than they make it. The reason isn't a bad business model, but that compute costs are structural.

Every time you ask an AI a question, the system has to do real computation. Computation needs GPUs, GPUs need electricity. And as models get stronger, compute demands increase, so running costs only get higher.

In other words: the perceived winners in AI are actually the biggest spenders.

Thing Two: The Most Profitable Are at the Bottom

I noticed infrastructure companies are printing money. Nvidia's gross margin is near 75%. TSMC is expanding capacity while raising prices because demand far exceeds supply.

These companies don't have a "when will they be profitable" problem. Their problem is "we simply can't build fast enough." These are two completely different problems.

Thing Three: Don't Think Like a "Consumer" (The Most Uncomfortable One)

I realized I had been thinking about AI like a consumer.

Consumers see applications. Engineers see the stack. Once you see the whole stack, you can't unsee it.

Every AI launch becomes a capital expenditure (CapEx) announcement. Every model upgrade becomes a new chip order. Every new feature becomes a new data center lease.

The entire industry starts to look like concentric circles: the closer to the center, the more concentrated the profits.

Maybe you're a software engineer focused on AI models, a retail investor who bought Nvidia at $300, or someone watching this revolution from afar in India (or maybe you're all three, which is the most interesting position).

Wherever you are, the principle is the same. Consumers see products. Investors see supply chains. And the best investors see the supply chain that forms before the product is even announced.

5. Investor Map: A Layer-by-Layer Breakdown of the AI Stack

The article is already long, so I'll pick up the pace.

Below is the structure, key players, and potential opportunities for each layer of the AI Stack.

Layer 1: Energy

AI data centers are extremely power-hungry. One large model training run can consume a small town's annual electricity. By 2026, global AI data centers are projected to consume about 90 terawatt-hours of electricity annually. That's roughly a 10x increase from 2022.

This creates a very simple investment logic: whoever can provide stable power to data centers will benefit. This includes nuclear power companies, natural gas companies, renewable energy companies, grid operators, especially those near data center clusters.
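To get a feel for the scale, here is a back-of-the-envelope Python sketch built on the 90 terawatt-hour projection above. The 2022 baseline and the growth rate are derived from the "roughly 10x" figure rather than quoted directly, and the conversion to average power assumes consumption is spread evenly over an 8,760-hour year. The names are my own.

```python
# Figures quoted in the text.
AI_DATACENTER_TWH_2026 = 90.0  # projected annual consumption, terawatt-hours
GROWTH_MULTIPLE = 10.0         # "roughly a 10x increase from 2022"
YEARS = 2026 - 2022

HOURS_PER_YEAR = 8760          # 365 days * 24 hours (non-leap year)
GWH_PER_TWH = 1000.0


def implied_baseline(final: float, multiple: float) -> float:
    """Starting value implied by a final value and a growth multiple."""
    return final / multiple


def annual_growth_rate(multiple: float, years: int) -> float:
    """Compound annual growth rate that produces `multiple` over `years`."""
    return multiple ** (1 / years) - 1


def average_power_gw(twh_per_year: float) -> float:
    """Average continuous power draw, in gigawatts, for a yearly energy total."""
    return twh_per_year * GWH_PER_TWH / HOURS_PER_YEAR


if __name__ == "__main__":
    base = implied_baseline(AI_DATACENTER_TWH_2026, GROWTH_MULTIPLE)
    print(f"Implied 2022 consumption: {base:.0f} TWh")
    rate = annual_growth_rate(GROWTH_MULTIPLE, YEARS)
    print(f"Implied compound growth: {rate:.0%} per year")
    power = average_power_gw(AI_DATACENTER_TWH_2026)
    print(f"Average continuous draw in 2026: {power:.1f} GW")
```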

Jensen Huang said in October 2025 that data centers may be able to build their own power generation faster than they can get connected to the grid. In fact, many tech companies are already building power generation facilities right next to their data centers, bypassing the grid.

This shocked me. These tech companies are becoming their own power companies.

Beneficiaries include utility companies, independent power producers, and power equipment manufacturers (transformers, switchgear, etc.). In Asia (India, for example), power equipment and transmission companies stand to benefit as hyperscaler data centers expand.

Layer 2: Chips

This is the layer the public is most familiar with, because of Nvidia. But it's far more complex than one company.

The chip layer can be subdivided into several sub-layers:

Design Companies

Nvidia (GPU), AMD, Broadcom, Qualcomm

And increasingly, Cloud Provider In-House Chips: Google TPU, Amazon Trainium, Microsoft Maia

Manufacturing Companies

Almost monopolized by TSMC, with ~70% market share; Samsung Electronics is a distant second at 7.2%. Intel is trying to rebuild its foundry business, but that will take years.

Equipment Companies

The machines that make chips come from ASML (the only company producing EUV lithography machines), along with Applied Materials, Lam Research, and Tokyo Electron.

Memory Companies

AI models require massive amounts of High Bandwidth Memory (HBM). Key players: SK Hynix, Samsung, Micron Technology.

Packaging Technology

Advanced packaging technologies (like TSMC's CoWoS) have become a new bottleneck.

The most shocking thing about this layer is actually the concentration:

Nvidia: ~92% share of AI GPU market

TSMC: Manufactures almost all AI chips

ASML: Sole supplier of EUV equipment

One company designs. One company manufactures. One company makes the manufacturing machines. This concentration is both an investment opportunity and a geopolitical risk.

Layer 3: Cloud & Data Centers

This is where chips actually run.

Massive warehouse-like facilities:

Tens of thousands of servers

High-speed network connections

Liquid cooling systems (have gone from optional to standard)

The market is dominated by the three major cloud providers:

Amazon Web Services (31%)

Microsoft Azure (24%)