A Decade-Long Bet on Cerebras: How the "Wafer-Scale AI Chip" Reached Nasdaq

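- Core thesis: Written by an early Cerebras investor, this article looks back on a nineteen-year relationship that runs from a first meeting in 2007, through the company's gestation in 2014, to its 2026 IPO. It argues that Cerebras's success stems from a far-sighted bet on rebuilding AI computing architecture from the ground up, the resolve to solve the systemic engineering problems of wafer-scale chips, and a long-term trust between investor and founding team that goes beyond the transactional.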

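- Key points:

- Cerebras listed on Nasdaq on May 14, 2026, closing its first day roughly 68% above the offering price, making it one of the most closely watched AI hardware IPOs since the start of 2026.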

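- In 2014, when neither AI nor GPUs were a matter of consensus, the team reasoned from first principles that memory bandwidth was the core bottleneck limiting AI compute, and that the GPU architecture was not the optimal answer.

- Cerebras went against industry inertia and built a wafer-scale chip with an area of 46,000 square millimeters (58 times that of a conventional chip), solving anew a series of engineering problems in power delivery, cooling, and electrical continuity.

- The team is drawn from the original SeaMicro crew (Andrew Feldman and others) and brings decades of chip and systems experience. Its members' skills combine multiplicatively to meet challenges that span semiconductors to software.

- The team's culture emphasizes discipline, persistence, and trust. Roughly 100 employees have followed the founder across multiple companies, a sign of long-term, non-transactional working relationships.

- The founder is driven by problems that merit a "1000x leap" rather than incremental iteration, and his upbringing (including time spent around Nobel laureates) shaped a "smart and kind" set of values.

- The investment grew out of long observation and deep trust: on April Fools' Day the investor offered to climb over the founder's backyard fence to hand-deliver the term sheet, a measure of how much patient capital and genuine partnership matter.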

Original title: Reflections on a decade with Cerebras

Original author: Steve Vassallo

Translated and compiled by: Peggy, BlockBeats

Editor's note: On May 14, Cerebras officially listed on Nasdaq under the ticker CBRS and closed its first day up approximately 68% from the offering price, making it one of the most closely watched AI hardware IPOs since the start of 2026.

This article was written by Steve Vassallo, an early investor in Cerebras. It recounts his partnership with Andrew Feldman spanning nineteen years, from SeaMicro to Cerebras. On the surface, it's a venture capital story from term sheet to IPO, but it actually documents how a cutting-edge hardware company, during a period when consensus was unfavorable, bet on a fundamental restructuring of AI computing architecture: from wafer-scale chips and memory bandwidth bottlenecks to a series of engineering challenges including power delivery, cooling, and electrical continuity. Cerebras faced not just a single-point technical challenge, but a complete reinvention of a modern computing system.

What's most noteworthy isn't that Cerebras ultimately created a wafer-scale chip 58 times larger than traditional chips, but that the company chose a direction opposite to industry inertia from the very beginning: when GPUs became the default answer for AI training, it sought to redefine "what a computer built for AI should be." This required not only technical judgment and patient capital, but also a long-term, non-transactional trust relationship between investors and the founding team.

For today's AI hardware competition, Cerebras's significance lies in reminding the market that the computing revolution isn't just about stacking more GPUs; it can also come from reimagining the computing architecture itself.

Below is the original text:

On Friday, April 1, 2016, I sent an email to Andrew Feldman telling him I would climb over his backyard fence to personally deliver the term sheet for our investment in Cerebras.

It was April Fools' Day, but I wasn't joking.

Strictly speaking, this wasn't standard operating procedure for a venture capital firm. But by then, I had known Andrew for nine years and had been discussing his next company with him for nearly two. I wasn't about to miss this deal over some clause we were still rewriting on a Saturday afternoon.

I first met Andrew in October 2007. He and Gary Lauterbach had just founded SeaMicro. I didn't invest in that round, but we hit it off immediately. I particularly admired their approach to thinking from first principles. I've kept an eye on them ever since.

Truly valuable relationships take time to build. Truly valuable companies are the same. From the outside today, Cerebras is a ten-year-old company about to go public. But from my perspective, this is a nineteen-year-long relationship that has finally reached the milestone of ringing the bell.

Andrew and I at the Hot Chips conference on Stanford's campus in August 2019, where Cerebras unveiled its first-generation Wafer-Scale Engine.

Deep Relationships and Unreasonable Ambition

When AMD acquired SeaMicro in 2012, I had a feeling Andrew wouldn't stay long at a large company. He is fiercely competitive and has a rebellious streak. By early 2014, he was already looking for an exit, and we began meeting frequently to discuss what he might do next.

At the time, two things were far from being consensus: first, that AI would actually become useful; second, that GPUs were not the optimal computing architecture for AI.

On the first point, many smart people I knew disagreed. After AlexNet emerged in 2012, some corners of the research community were already achieving near-magical results with convolutional neural networks. But in the broader software industry, AI still hovered somewhere between a marketing buzzword and a research project.

The second issue, the hardware problem, had barely been raised seriously. GPUs had become the default choice for neural network training, mainly because researchers accidentally discovered they were "less bad" than CPUs. Building a new computing system specifically for AI workloads meant challenging the mainstream architecture used by researchers worldwide.

But Andrew, Gary, and their co-founders Sean, Michael, and JP saw a different path. They each had decades of experience in chips and systems: Gary's background came from pioneering work in dataflow and out-of-order execution in the 1980s; Sean specialized in advanced server architecture; Michael handled software and compilers; JP focused on hardware engineering. They were an exceptionally rare group: individually, each was brilliant; together, their capabilities were multiplicative. They could envision a completely new kind of computer.

They believed that if AI truly realized its potential, the resulting market size would far surpass the sum of all existing computing forms.

They also saw GPUs for what they were: chips originally designed for graphics processing that had received a battlefield promotion to AI training. A GPU was indeed better than a CPU at parallel processing, but no one designing from scratch for AI workloads would arrive at that architecture. The real bottleneck limiting neural networks wasn't raw compute power, but memory bandwidth. This meant they needed to create a chip optimized not for matrix multiplication in isolated cores, but for moving data efficiently through the entire computing structure.

Internally, investing in Cerebras was far from a consensus decision. Some of my partners had seen the previous round of semiconductor investments generate mostly losses, and they frankly expressed their concerns. But ultimately, we reached an agreement as a team. That weekend in April 2016, we told Andrew clearly: We wanted to be the first investor to give him a term sheet.

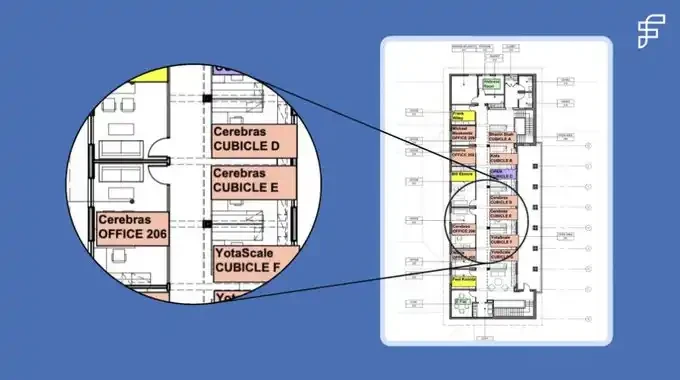

A few weeks later, Andrew, Gary, Sean, Michael, and JP moved into our EIR office space on the second floor at 250 Middlefield. I still have the floor plan our office manager drew at the time. On that map, Cerebras sat next to a founder of Foundation, just a few doors away from Bhavin Shah, who later founded Moveworks. It was a good floor for a startup to grow.

Cerebras's first headquarters, on the second floor of our old office at 250 Middlefield.

Knowing Which Rules to Bend and Which to Break

Before Cerebras, the largest chip in computing history was about 840 square millimeters, roughly the size of a postage stamp. The chip Cerebras made was 46,000 square millimeters, 58 times larger.

Choosing a wafer-scale chip meant accepting all the downstream design challenges that came with it. In nearly 80 years of computing history, no one had truly succeeded in making this work. It also meant no one had systematically solved these problems: How do you power such a massive chip? How do you cool it? How do you maintain electrical continuity across tens of thousands of connection points?

To achieve wafer-scale computing, Cerebras had to reinvent almost every aspect of modern computing simultaneously: semiconductors, systems, data structures, software, and algorithms. Each direction alone would be enough for a startup. Andrew and his team chose to tackle the thorniest technical problems first. With their intense, tireless effort, they pushed through these problems one by one.

Every six to eight weeks, we had board meetings. They would update us on their attempts since the last meeting: a new system design variant, a new power delivery scheme, or a thermal management adjustment. Having repeatedly confronted systemic challenges from every angle, they developed hard-won clarity of communication. They would explain what they thought went wrong and what they planned to try next.

We would ask questions, then dive deep with the team, mobilizing the people, resources, and relationships needed to help them find new breakthroughs. Six to eight weeks later, when we met again, the story would repeat on another technical problem: another frontier to explore. Each solution revealed the next problem that had to be solved.

Their first prototype wafer smoked on its first power-up. The team called it a "thermal event" — the term you use for a fire when you don't want to alarm the board or the landlord.

At the time, I was constantly calculating the power consumption per square millimeter, partly out of curiosity and partly because the numbers looked too high to be real. So we brought in engineers from Exponent, a failure analysis firm whose former company name was literally Failure Analysis. They confirmed the power numbers were as bold as they looked and helped us think through a series of approaches that didn't challenge the second law of thermodynamics. After all, that was one law Andrew was smart enough not to argue with.

An engineer's discipline lies in knowing which rules can be broken, which can be bent, and which must be respected. Andrew and his team had a proven knack for this distinction. They knew when they were challenging convention, which was exactly what they intended to do, and when they would be challenging the laws of physics, which they refused to do.

When you're building cutting-edge technology, failure is inevitable. The only way to get through failure is discipline, persistence, and most importantly, trust: trust in the mission, trust in each other, and trust in the fact that after the first prototype self-destructs, you'll still be back in the lab the next morning to start the next iteration.

There's no transactional version of this work. There's only the long-term version: staying in the room through incomplete solutions and patient explanations. That way, when it finally succeeds, you'll be there to witness it.

That moment came in August 2019. Andrew, Sean, and their team stood in the lab, watching a completely new computer they had designed run for the first time. To an outsider, it looked like it wasn't doing anything interesting. According to Andrew, it was probably as exciting as watching paint dry. But this time was different: no such "paint" had ever actually dried before. They stood there watching for 30 minutes, then went back to work.

Who You Build With Matters

Some people choose problems based on what they know they can solve. Andrew chooses problems based on what he believes is worth solving. Incremental iterations don't excite him; he wants a 1000x leap. From day one, he wanted to build Cerebras into a generational, one-of-a-kind company.

Part of this drive comes from his personality. Andrew describes it as a "disease" of computer architects — being haunted by an idea for decades. But in my view, it's more broadly a founder's "disease." He looks at a problem and first asks himself: Can I make something that creates a step-change improvement? Then he asks: If I succeed, will anyone care? If the answers are yes, he pours the next ten years of his life into it.

Another part comes from his upbringing. Andrew grew up surrounded by geniuses, as naturally as most kids grow up watching TV. His father was a pioneering evolutionary biology professor who played doubles tennis every Sunday with six people. Among those six, three later won Nobel Prizes, and one won a Fields Medal.

As Andrew tells it, these giants would patiently explain their work in physics, mathematics, and molecular biology in language a child could understand. He formed a deep impression of what genuine intelligence looks like, while also understanding, as his mother said, that being smart doesn't give you an excuse to be an asshole.

I later realized this is one of Andrew's core traits, as important as his rebellious ambition and almost phototropic instinct for truly worthy problems. He firmly believes that the most remarkable people he has encountered are also often exceptionally kind.

This belief shaped how his team came together to accomplish extremely difficult tasks. The first 30 people Cerebras hired had all worked with him before; some had been following him since 1996. Today, Cerebras has about 700 employees, and roughly 100 have followed him through multiple companies.

The Cerebras founding team at the Computer History Museum in August 2022. From left to right: Sean Lie, Gary Lauterbach, Michael James, JP Fricker, and Andrew Feldman.

Importantly, kindness and competitiveness are not contradictory. Andrew deeply wants to win. He likes to say he's a professional David facing Goliath. Goliath is slow and always defending against a frontal attack, leaving room for every other tactic. David's advantage lies in appearing in ways and places where Goliath cannot.

At SeaMicro, Andrew's largest channel partner in Japan was NetOne. NetOne's primary supplier was Cisco, which entertained its partners with private jets and yachts worth more than most homes in Palo Alto. Andrew's budget was far more modest, so he invited NetOne's CEO to his backyard for a barbecue. Later, the CEO told him he had done business with Cisco for decades but had never been invited to anyone's home. This small, deeply human gesture — something a Goliath wouldn't even think of — cemented their relationship.

From the First Term Sheet to the IPO

This morning, Andrew rang the opening bell at the Nasdaq. I stood beside him. It has been ten years, and 2,600 miles, since everything began in our 250 Middlefield office.

Today, there are still rare founders doing what Andrew did back then: sketching on whiteboards at 3 AM, wrestling with unsolved technical problems. They share that same strong competitiveness and rebellious spirit. They are trying to find a partner who will truly fight alongside them: someone who will dive in to solve the problem when the first prototype doesn't power on, and who will stay until it finally runs.

These are exactly the founders I want to support: They choose problems worth solving, imagine solutions 1000x better than the status quo, and continuously refine and persist through the inevitable challenges along the way.

For founders like Andrew, Gary, Sean, Michael, and JP, I would climb over a backyard fence on a Saturday afternoon to deliver the term sheet myself.
