Google AI Paper Accused of Experimental Fraud, Causing $90 Billion Plunge in Storage Stocks

- Core Viewpoint: A Google paper on AI memory compression technology, TurboQuant, has sparked academic controversy due to accusations of unfairness in comparative experiments, insufficient citation of prior research, and potential distortion of performance advantages. Its market promotion directly triggered severe volatility in the global storage chip sector.

- Key Elements:

- Core Controversy Allegations: The paper is accused of inadequately explaining its key technical relationship with the RaBitQ algorithm. In speed comparisons, it allegedly tested RaBitQ using a single-core CPU Python script while testing its own method on an A100 GPU, constituting an unfair comparison.

- Sharp Market Reaction: Following the promotion of the paper on Google's official blog, market concerns about reduced AI memory demand led to a single-day market value loss exceeding $90 billion for storage chip stocks like Micron and SanDisk.

- Technical Contribution Substantiated: Independent community verification indicates that the compression effectiveness of the TurboQuant algorithm is largely valid, and its mathematical contribution is genuine.

- Institutional Analysis Rebuttal: Analysts from institutions such as Morgan Stanley point out that the technology only compresses a specific cache (KV Cache), representing a normal efficiency improvement. Furthermore, efficiency gains might stimulate larger AI deployments, ultimately increasing memory demand.

- Transmission Chain Risk Highlighted: The incident reveals the systemic risk where repackaging existing academic papers into market narratives, if accompanied by experimental bias or presentation issues, can cause significant shocks to financial markets.

Original Author: TechFlow

A Google paper claiming to "compress AI memory usage to 1/6" wiped more than $90 billion in market value off global memory chip stocks such as Micron and SanDisk last week.

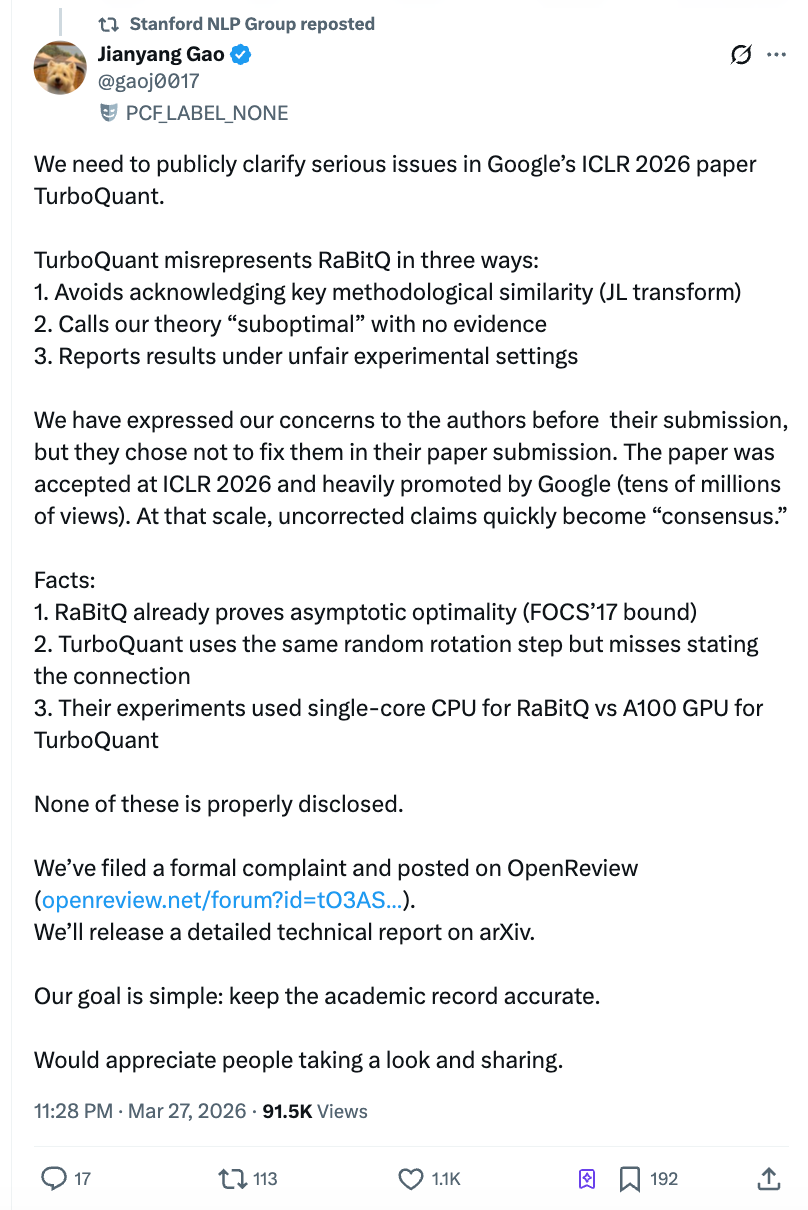

However, just two days after the paper's release, the author of the allegedly "crushed" competing algorithm, Jianyang Gao, a postdoctoral researcher at ETH Zurich, published a lengthy open letter. He accused the Google team of testing the competitor's algorithm with a single-core CPU Python script while testing their own on an A100 GPU, and of refusing to correct the issue after being notified before submission. The post quickly drew over 4 million reads on Zhihu and was reposted by the official Stanford NLP account, shaking both academia and the market.

The core issue of this controversy is not complex: did a top-tier AI conference paper, massively promoted by Google and directly triggering a panic sell-off in the global chip sector, systematically misrepresent a previously published work and, through deliberately created unfair experiments, craft a false narrative of performance superiority?

What TurboQuant Did: Thinning AI's 'Scratch Paper' to One-Sixth of Its Original Size

When a large language model generates a response, it needs to constantly refer back to previously computed intermediate results. These intermediate results, known in the industry as the "KV Cache" (Key-Value Cache), are temporarily stored in GPU memory. The longer the conversation, the "thicker" this "scratch paper" becomes, consuming more memory and increasing costs.
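To make the growth concrete, here is a back-of-the-envelope sketch of how KV Cache size scales with context length. The function name and the model dimensions (32 layers, 32 key/value heads, head size 128, 16-bit values) are illustrative assumptions, not figures from the paper or from any specific model.

```python
def kv_cache_bytes(seq_len: int, layers: int, kv_heads: int,
                   head_dim: int, bytes_per_value: int = 2,
                   batch: int = 1) -> int:
    """Approximate KV Cache size: one key and one value vector
    per token, per layer, per key/value head."""
    per_token = 2 * layers * kv_heads * head_dim * bytes_per_value
    return per_token * seq_len * batch


# Hypothetical model configuration, chosen only for illustration.
config = dict(layers=32, kv_heads=32, head_dim=128, bytes_per_value=2)

short_chat = kv_cache_bytes(seq_len=1024, **config)
long_chat = kv_cache_bytes(seq_len=8192, **config)

print(f"1,024 tokens: {short_chat / 2**30:.2f} GiB")
print(f"8,192 tokens: {long_chat / 2**30:.2f} GiB")
```

Under these assumptions the cache grows linearly with the number of tokens, which is why long conversations and long documents are where compression matters most.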

The core selling point of the TurboQuant algorithm, developed by Google's research team, is compressing this scratch paper to 1/6 of its original size, while claiming zero precision loss and up to an 8x inference speed improvement. The paper was first published on the academic preprint server arXiv in April 2025, accepted by the top AI conference ICLR 2026 in January 2026, and repackaged and promoted via the official Google blog on March 24, 2026.

Technically, TurboQuant's approach can be simply understood as: first using a mathematical transformation to "wash" messy data into a uniform format, then compressing it piece by piece using a pre-computed optimal compression table, and finally using a 1-bit error correction mechanism to fix computational deviations caused by compression. Independent community implementations have verified its compression effectiveness is largely genuine; the mathematical contribution at the algorithmic level is real.
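The "compression table" step can be illustrated with a generic scalar quantizer. This is a minimal sketch, not the paper's method: an evenly spaced table stands in for the pre-computed optimal table, Gaussian samples stand in for coordinates after the transformation, and the 1-bit correction step is omitted. All function names are hypothetical.

```python
import numpy as np


def build_table(bits: int, lo: float = -3.0, hi: float = 3.0) -> np.ndarray:
    """Evenly spaced levels; a stand-in for an optimized table."""
    return np.linspace(lo, hi, 2 ** bits)


def quantize(x: np.ndarray, table: np.ndarray) -> np.ndarray:
    """Store, for each coordinate, the index of the nearest table entry."""
    return np.abs(x[:, None] - table[None, :]).argmin(axis=1).astype(np.uint8)


def dequantize(codes: np.ndarray, table: np.ndarray) -> np.ndarray:
    """Recover approximate values by looking the indices up in the table."""
    return table[codes]


rng = np.random.default_rng(0)
x = rng.standard_normal(1024)  # assumed stand-in for transformed data

table3 = build_table(bits=3)
codes3 = quantize(x, table3)
mse3 = float(np.mean((x - dequantize(codes3, table3)) ** 2))

table4 = build_table(bits=4)
mse4 = float(np.mean((x - dequantize(quantize(x, table4), table4)) ** 2))

print(f"3-bit MSE: {mse3:.4f}, 4-bit MSE: {mse4:.4f}")
```

Each coordinate is stored as a small integer index instead of a 16-bit or 32-bit float, which is where the memory saving comes from. The trade-off is reconstruction error, which shrinks as more bits are spent per coordinate.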

The controversy is not about whether TurboQuant works, but about what Google did to prove it "far surpasses competitors."

Jianyang Gao's Open Letter: Three Accusations, Each Hitting the Mark

At 10 PM on March 27, Jianyang Gao published a long post on Zhihu and simultaneously submitted a formal comment on the ICLR official review platform OpenReview. Gao is the first author of the RaBitQ algorithm, published in 2024 at the top database conference SIGMOD, addressing the same class of problems—efficient compression of high-dimensional vectors.

His accusations are divided into three points, each supported by email records and timelines.

Accusation One: Using the core method of others without mention.

TurboQuant and RaBitQ share a crucial common technical step: applying a "random rotation" to the data before compression. This operation transforms irregularly distributed data into a predictable uniform distribution, significantly reducing compression difficulty. It is the most central step the two algorithms have in common.
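The effect of a random rotation can be seen in a few lines. The following is a generic illustration, assuming a random orthogonal matrix built from the QR decomposition of a Gaussian matrix; it is not taken from either paper's code, and the helper name `random_rotation` is hypothetical.

```python
import numpy as np


def random_rotation(dim: int, seed: int = 0) -> np.ndarray:
    """Random orthogonal matrix (a rotation, possibly with a reflection)
    from the QR decomposition of a Gaussian matrix."""
    rng = np.random.default_rng(seed)
    q, r = np.linalg.qr(rng.standard_normal((dim, dim)))
    # Common sign normalization so the matrix is uniformly distributed
    # over orthogonal matrices.
    return q * np.sign(np.diag(r))


dim = 64
rot = random_rotation(dim)

# A "spiky" vector: all of its energy sits in one coordinate.
x = np.zeros(dim)
x[0] = 10.0
y = np.arange(1, dim + 1, dtype=float)

x_rot, y_rot = rot @ x, rot @ y

norm_before, norm_after = np.linalg.norm(x), np.linalg.norm(x_rot)
peak_before, peak_after = np.abs(x).max(), np.abs(x_rot).max()

print(f"norm: {norm_before:.3f} -> {norm_after:.3f}")
print(f"largest coordinate: {peak_before:.3f} -> {peak_after:.3f}")
```

The rotation leaves lengths and inner products unchanged, so nothing about the geometry is lost, but it spreads the vector's energy across all coordinates. Coordinates that all look statistically alike can then be compressed with one shared scheme.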

The TurboQuant authors themselves admitted this in their review response, yet the paper never explicitly states the connection between this method and RaBitQ. More critically, TurboQuant's second author, Majid Daliri, proactively contacted Gao's team in January 2025, requesting help debugging his Python version based on RaBitQ's source code. The email detailed reproduction steps and error messages—meaning the TurboQuant team was intimately familiar with RaBitQ's technical details.

An anonymous ICLR reviewer also independently pointed out the use of the same technique, requesting thorough discussion. However, in the final version, the TurboQuant team not only failed to add discussion but moved the (already incomplete) description of RaBitQ from the main text to the appendix.

Accusation Two: Unsubstantiated claim that the opponent's theory is "suboptimal."

The TurboQuant paper directly labels RaBitQ as "theoretically suboptimal," citing its mathematical analysis as "coarser." However, Gao points out that the extended RaBitQ paper has rigorously proven its compression error reaches the mathematical optimal bound—a conclusion published at a top theoretical computer science conference.

In May 2025, Gao's team explained RaBitQ's theoretical optimality in detail over multiple email exchanges. Daliri, the second author of TurboQuant, confirmed that all authors were informed. Yet the paper retained the "suboptimal" label without providing any counterarguments.

Accusation Three: "Tying one hand behind the opponent's back while holding a knife" in experimental comparisons.

This is the most damaging accusation. Gao points out that the TurboQuant paper stacked two layers of unfair conditions in its speed comparison experiments:

First, RaBitQ officially provides optimized C++ code, with multi-threaded parallelism supported by default, but the TurboQuant team did not use it, instead testing RaBitQ with their own translated Python version. Second, when testing RaBitQ, they used a single-core CPU with multi-threading disabled, while testing TurboQuant on an NVIDIA A100 GPU.

Taken together, these conditions mean that readers see the conclusion that "RaBitQ is several orders of magnitude slower than TurboQuant" without knowing that it rests on Google's team racing against an opponent whose hands and feet were tied. The paper did not adequately disclose these differences in experimental conditions.

Google's Response: "Random rotation is a general technique; we can't cite every paper"

According to Gao's disclosure, the TurboQuant team responded in a March 2026 email: "The use of random rotation and Johnson-Lindenstrauss transform is already standard in the field; we cannot cite every paper that uses these methods."

Gao's team believes this is shifting the goalposts: the issue is not about citing every paper that uses random rotation, but that RaBitQ is the first work to combine this method with vector compression under the exact same problem setting and prove its optimality. The TurboQuant paper should accurately describe their relationship.

The official Stanford NLP Group X account reposted Gao's statement. Gao's team has published a public comment on the ICLR OpenReview platform, submitted a formal complaint to the ICLR conference chairs and ethics committee, and will later release a detailed technical report on arXiv.

Independent technical blogger Dario Salvati offered a relatively neutral assessment in his analysis: TurboQuant indeed has genuine mathematical contributions, but its relationship with RaBitQ is far closer than the paper suggests.

$90 Billion Market Cap Evaporation: Paper Controversy Amplifies Market Panic

The timing of this academic controversy is extremely delicate. Following Google's official blog promotion of TurboQuant on March 24, the global memory chip sector faced a severe sell-off. According to reports from CNBC and others, Micron Technology fell for six consecutive trading days, dropping over 20% cumulatively; SanDisk fell 11% in a single day; South Korea's SK Hynix fell about 6%, Samsung Electronics fell nearly 5%, and Japan's Kioxia fell about 6%. The market's panic logic was simple and crude: software compression reducing AI inference memory needs by 6x would structurally downgrade the demand outlook for memory chips.

Morgan Stanley analyst Joseph Moore refuted this logic in a March 26 research report, maintaining an "Overweight" rating on Micron and SanDisk. Moore pointed out that TurboQuant compresses only one specific cache, the KV Cache, not overall memory usage, and characterized it as a "normal productivity improvement." Wells Fargo analyst Andrew Rocha similarly cited the Jevons paradox, suggesting that efficiency gains lowering costs could instead stimulate larger-scale AI deployment, ultimately increasing memory demand.
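The gap between "6x smaller KV Cache" and "6x less memory" is simple arithmetic. The sketch below uses made-up figures (14 GiB for model weights and other memory, 4 GiB of KV Cache) purely for illustration; they do not come from the analyst reports or the paper.

```python
def overall_reduction(other_gib: float, kv_gib: float, kv_ratio: float) -> float:
    """Total memory before compression divided by total memory after,
    when only the KV Cache portion shrinks by kv_ratio."""
    before = other_gib + kv_gib
    after = other_gib + kv_gib / kv_ratio
    return before / after


# Hypothetical deployment: weights and everything else are left untouched.
example = overall_reduction(other_gib=14.0, kv_gib=4.0, kv_ratio=6.0)
print(f"Overall memory reduction: {example:.2f}x")
```

Under these assumed numbers, a 6x compression of the cache shrinks total memory use by only about 1.23x. The overall factor approaches 6x only when the KV Cache dominates the memory budget.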

Old Paper, New Packaging: Risks in the Transmission Chain from AI Research to Market Narrative

According to analysis by technical blogger Ben Pouladian, the TurboQuant paper is not new research: it was publicly released as early as April 2025. Google's repackaging and promotion via its official blog on March 24 led the market to price it as a brand-new breakthrough. This "old paper, new release" promotion strategy, combined with potential experimental bias in the paper, highlights systemic risks in the transmission chain from academic papers to market narratives in AI research.

For AI infrastructure investors, when a paper claims "several orders of magnitude" performance improvement, the first question should be whether the benchmark comparison conditions are fair.

Gao's team has clearly stated they will continue to push for a formal resolution. Google has not yet issued an official response to the specific allegations in the open letter.