Xiaomi and MiniMax Simultaneously Make Major Moves, Agent Pricing War Officially Begins

- Core Viewpoint: The two Agent large models recently released by Chinese AI companies MiniMax and Xiaomi offer performance close to top international models at significantly lower API pricing, representing two distinct technological development paths: "self-iterative evolution" and "massive-scale parameters".

- Key Elements:

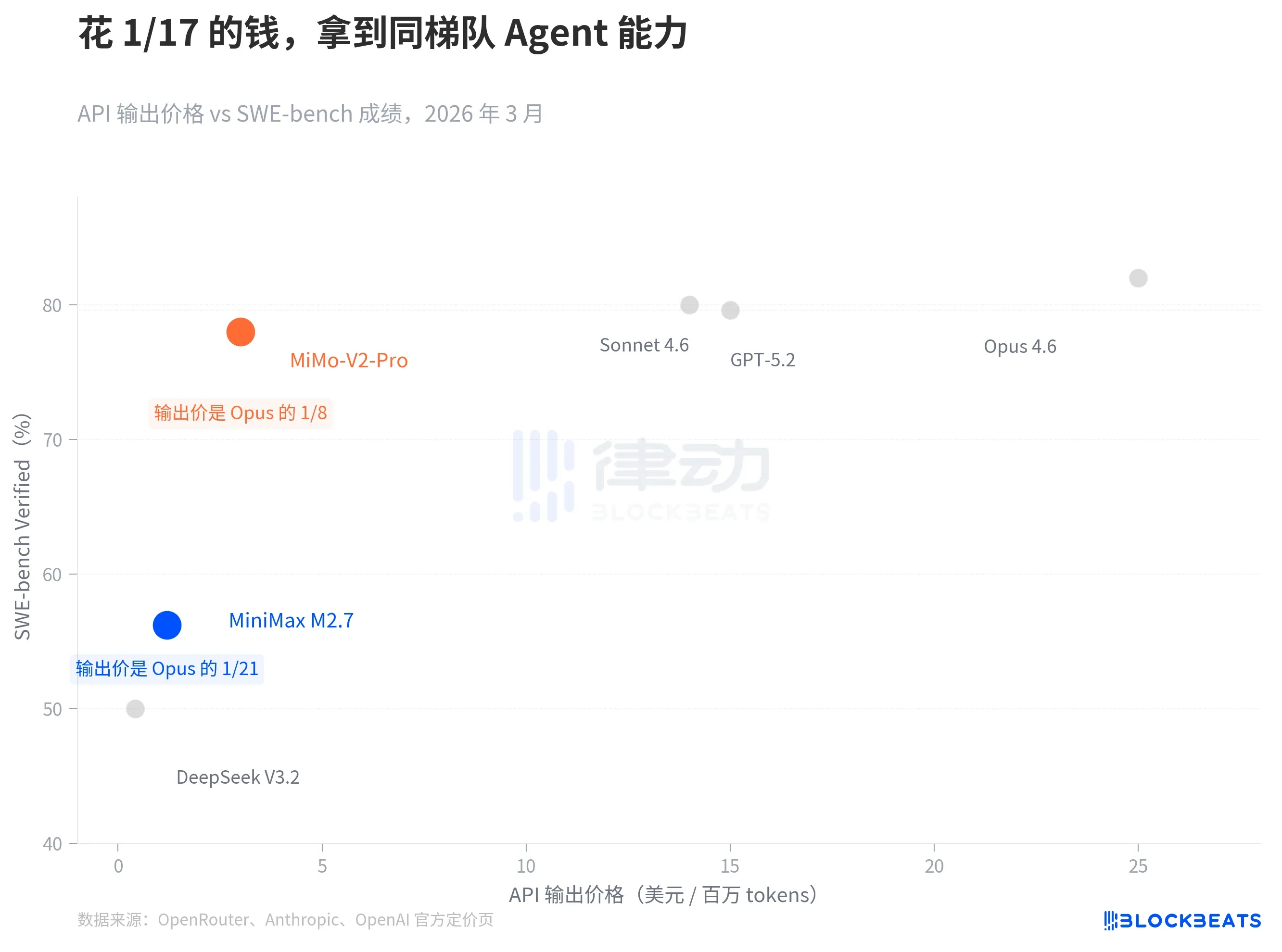

- Significant Price Advantage: The API output prices for MiniMax's M2.7 and Xiaomi's MiMo V2-Pro are $1.2 per million tokens and $3 per million tokens respectively, which is only 1/21 and 1/8 of Claude Opus's price ($25).

- Performance Reaching the Top Tier: In mainstream Agent evaluations like SWE-bench, the performance of these two models shows minimal gaps compared to top international models (e.g., Claude Sonnet, GPT-5.3-Codex), creating a "price-performance scissors gap".

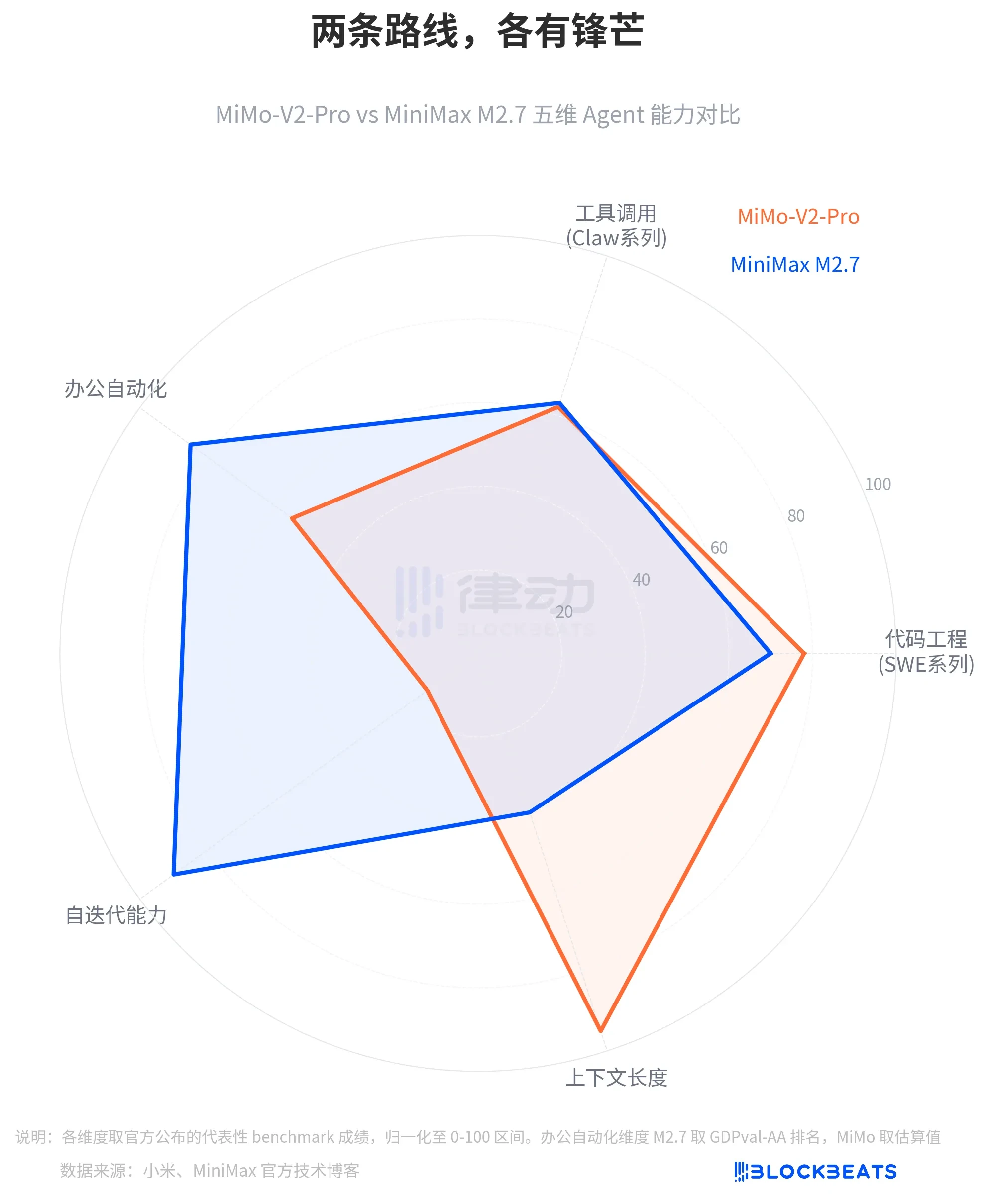

- Distinctly Different Technical Routes: MiMo V2-Pro adopts the "brute force" route with over a trillion parameters, enhancing long-context processing; M2.7 focuses on a "self-iterative evolution" mechanism, improving capabilities through autonomous optimization loops.

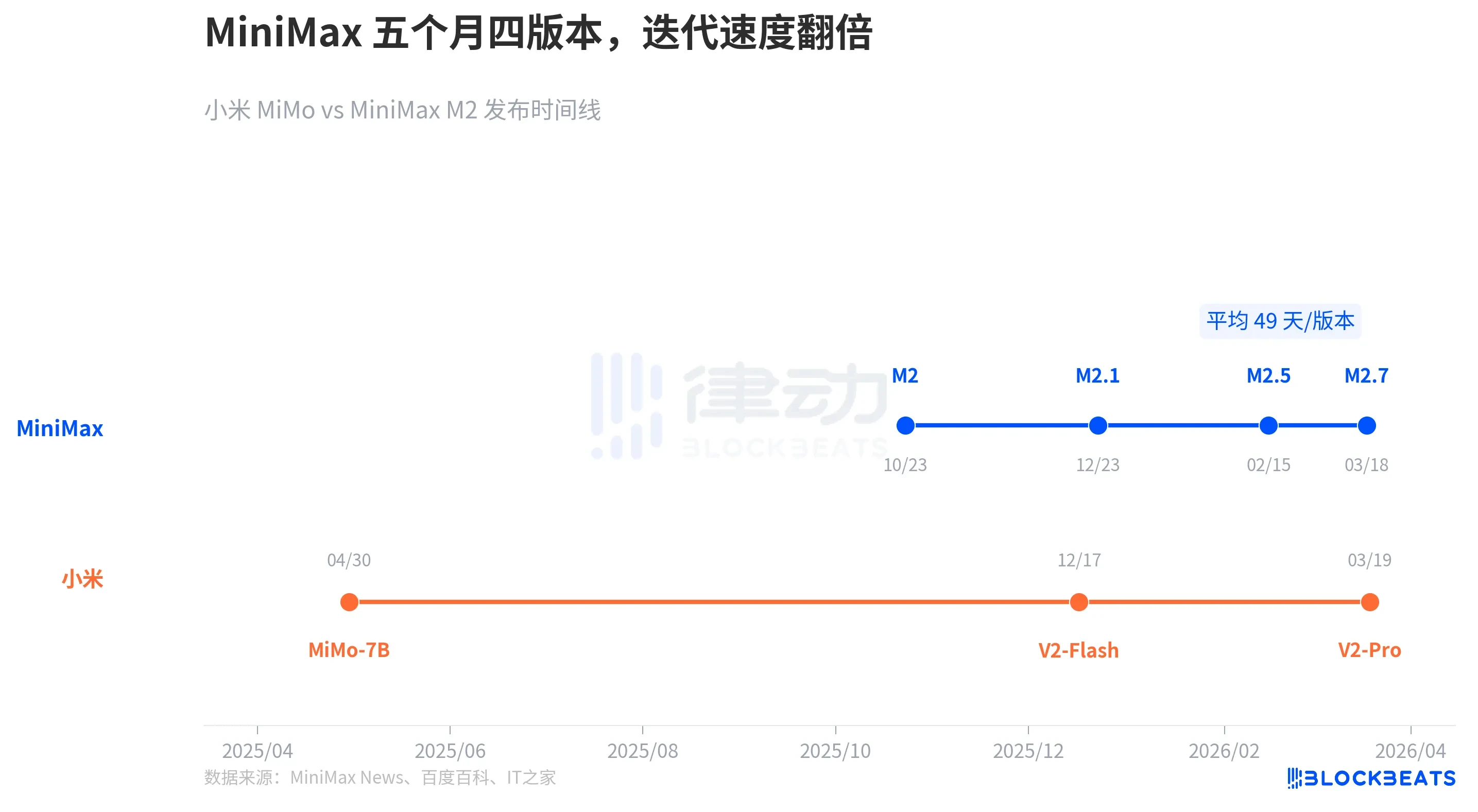

- Differentiated Iteration Strategies: MiniMax employs a high-frequency, small-step iteration approach (approximately one version every 49 days), while Xiaomi opts for long-cycle iterations with significant leaps in parameters and architecture.

- Innovative Release Strategy: MiMo V2-Pro was anonymously released as "Hunter Alpha" on the OpenRouter platform for an 8-day blind test. It attracted massive usage and topped the charts based on its performance and price before its identity was revealed.

On March 18th and 19th, two Chinese companies successively released their own large language models focused on the Agent direction. Domestic AI startup MiniMax launched M2.7, while Xiaomi's large model team, MiMo, launched V2-Pro. Both models entered the global top tier on Agent benchmarks, yet their API output pricing is 1/21 and 1/8 of Claude Opus 4.6's, respectively.

Both companies played their cards in the same week, but the cards in their hands are completely different. They represent two distinct technical paths, betting on two different futures for the Agent era.

The Same Exam, 1/17th the Tuition

First, let's look at the most direct comparison.

According to data from OpenRouter and the official pricing pages of each company, based on API output price (per million tokens), MiniMax M2.7 is $1.2, and MiMo-V2-Pro is $3. For reference, Claude Opus 4.6's output price is $25, GPT-5.2 is $14, and Claude Sonnet 4.6 is $15.

The price gap is an order of magnitude, but the capability gap is not. On SWE-bench Verified (currently the most mainstream benchmark for measuring code engineering capabilities), MiMo-V2-Pro scored 78%, while Sonnet 4.6 scored 79.6%, a difference of less than two percentage points. M2.7's SWE-Pro score is 56.22%, on par with GPT-5.3-Codex. On VIBE-Pro (end-to-end project delivery capability), M2.7 scored 55.6%, approaching the level of Opus 4.6.

The key point of this chart is not who is higher or lower—each company's benchmark systems are not fully aligned, so direct comparisons should be made cautiously. The key point is that "price-performance scissors gap": domestic Agent models have already squeezed into the same capability tier, but stand in completely different price brackets.

Trillion Parameters vs. Self-Evolution

Price is just the surface. The two companies have revealed two completely different underlying strategies.

MiMo-V2-Pro follows the "brute force" route. According to Xiaomi's official announcement, V2-Pro has over 1 trillion total parameters, 42B activated parameters, and supports an ultra-long context of 1 million tokens. Its core innovation is the Hybrid Attention mechanism, adjusting the ratio of Sliding Window Attention (SWA) to Global Attention (GA) to 7:1—the previous generation V2-Flash was 5:1. This architecture makes the model more stable when handling long documents and multi-tool parallel invocation in Agent scenarios. On PinchBench (Agent tool invocation capability evaluation), MiMo-V2-Pro scored 84%.

M2.7 takes a completely different path. According to the official technical blog released by MiniMax on March 18th, M2.7's parameter count is not disclosed, but it demonstrates a "self-iterative evolution" mechanism: the model autonomously runs over 100 rounds of optimization loops, including analyzing failure trajectories, planning modifications, modifying its own code architecture, running evaluations, and repeating the cycle, ultimately achieving a 30% performance improvement on an internal evaluation set. On the MLE Bench Lite (machine learning competition difficulty evaluation) with 22 high-difficulty problems, M2.7 secured 9 gold, 5 silver, and 1 bronze medals, with an average medal rate of 66.6%.

Looking from five dimensions, the strengths of the two paths point in completely different directions: MiMo-V2-Pro clearly has the advantage in context length and code engineering dimensions, while M2.7 creates distance in office automation and self-iteration capabilities. According to the same MiniMax technical blog, M2.7 scored ELO 1495 on GDPval-AA (office document processing evaluation), ranking first among open-source models, and maintained a 97% skill adherence rate in the MM-Claw test covering over 40 complex skills.

Four Versions in Five Months

The two companies differ not only in their technical paths but also completely in their iteration rhythms.

According to public release records, from MiniMax's release of M2 in October 2025 to the release of M2.7 in March 2026, they iterated four major versions in five months, averaging one major version every 49 days. The interval between M2.5 and M2.7 was only about 30 days.

Xiaomi MiMo's rhythm is different: they released MiMo-7B (a 7B parameter open-source inference model) in April 2025, V2-Flash (309B total parameters) in December of the same year, and V2-Pro (1T total parameters) in March 2026. The leap in parameter scale between each generation is larger, but the version intervals are also longer.

MiniMax chose a strategy of small, rapid steps, with each iteration having a modest scope but an extremely high frequency; M2.7's self-iteration mechanism is itself designed for "continuous evolution." Xiaomi chose a strategy of building up strength for a powerful strike, with each version representing a significant leap in parameter scale and architecture.

Anonymous for 8 Days, Topping OpenRouter

Beyond the technical path, Xiaomi's release strategy also broke industry conventions.

According to a Reuters report, on March 11th, an anonymous model named Hunter Alpha appeared on OpenRouter, the world's largest API aggregation platform. There was no brand endorsement, no launch event, no technical blog. Its API pricing was extremely low, yet its performance was surprisingly strong.

The community began speculating about its origin. According to Republic World and multiple tech media reports, the most mainstream speculation was DeepSeek V4, because MiMo team lead Luo Fuli previously conducted research at DeepSeek. The call volume rapidly climbed, with total calls during the anonymous period exceeding 1 trillion tokens, topping OpenRouter's weekly chart.

In the early hours of March 19th, Xiaomi revealed the answer: Hunter Alpha was MiMo-V2-Pro. According to the same Reuters report, Xiaomi's Hong Kong stock saw a surge of up to 5.8% after the reveal.

This is the first time a domestic large language model has proven itself on a global platform through a pure blind test. Without relying on brand or marketing, it let developers vote with their feet over 8 days.