The Truth Behind the Trump Administration's Ban on Anthropic: No Donations, No Entry

- Core Argument: An internal memo from Anthropic CEO Dario Amodei alleges that the real reason the Trump administration banned the company was not a disagreement over technical safety terms, but its lack of political donations and deferential posture. The memo also discloses deep-seated conflicts with defense contractor Palantir over who bears responsibility for the safety of military AI applications.

- Key Elements:

- The memo alleges the government ban stemmed from Anthropic's failure to make large political donations (like Brockman's $25 million) or publicly praise Trump, as OpenAI did, with safety clauses merely serving as a pretext.

- Anthropic's relationship with Palantir broke down over two things: Anthropic's after-the-fact inquiry into Claude's use in military operations (such as the capture of Maduro), and Palantir's pitch to the military of its own in-house safety solution, meant to bypass Anthropic, which Dario criticized as "80% showmanship."

- Claude is deeply integrated into core US military systems like Maven; replacing the model is not trivial and would require rebuilding numerous prompts and workflows, potentially delaying the military's intelligence automation timeline.

- Following the exposure of the incident, Anthropic attempted to reconcile with the Pentagon, but the memo leak may escalate tensions; OpenAI's Altman has publicly opposed labeling Anthropic as a supply chain risk.

- Market reaction is contradictory: Palantir's stock rose on the news and analysts raised their price targets, yet its CEO and co-founders recently sold significant amounts of company stock.

Anthropic CEO Dario Amodei sent a 1,600-word internal memo to all employees last Friday. The memo was published today by the tech outlet The Information and immediately set Silicon Valley abuzz.

The memo's core claim fits in one sentence: Anthropic was banned by the Trump administration not because of disagreements over safety terms, but because it didn't donate money.

The $25 Million Gap

Dario named OpenAI in the memo.

He said the real reason the Trump administration dislikes Anthropic is: the company did not donate to Trump and did not offer "dictatorial praise." OpenAI, on the other hand, provided both money and the right posture.

In September 2025, OpenAI President Greg Brockman and his wife donated $25 million to Trump's MAGA Inc super PAC. According to Federal Election Commission filings, this was the largest single donation to MAGA Inc during that period, accounting for nearly a quarter of its fundraising total for the half-year. Brockman later posted on social media, stating the donation was to "support policies that promote American innovation," and praised the Trump administration for being "willing to engage directly with the AI community."

CEO Sam Altman took a different route. He made no large direct donation to MAGA Inc, but gave $1 million to Trump's inaugural committee in December 2024. More important was the posture: the day after Trump took office, Altman stood behind the presidential seal in the White House Roosevelt Room to announce the $500 billion AI infrastructure project Stargate, telling Trump on camera, "This wouldn't be possible without you." At the White House tech dinner in September 2025, he again told Trump face to face: "Thank you for being such a pro-business, pro-innovation president."

Interestingly, this same Sam Altman wrote publicly in 2016: "For anyone familiar with 1930s German history, watching Trump's actions is chilling." He also compared Trump to Hitler and discussed the "Big Lie." Before the 2024 election, he donated $200,000 to the Democratic Party to help Biden's re-election.

Anthropic did nothing. No donations, no attendance at dinners, no standing behind the presidential seal to say thanks.

This is the first time the CEO of a top-tier AI company has said it this bluntly: how you are treated in Washington depends on how much money you give to the White House.

Dario also directly criticized OpenAI's contract with the Pentagon. He said OpenAI accepted the contract because "they care about placating employees, while we care about actually preventing misuse," and called OpenAI's PR narrative around the contract "a bald-faced lie."

The White House's response indirectly confirmed Dario's claims. A government official told the news site Axios: "You can't trust that Claude won't secretly execute Dario's personal agenda in a classified environment." Treasury Secretary Scott Bessent responded directly in a tweet, stating that no private company should dictate the terms of U.S. national security.

Not a single word in the response mentioned technical disagreements over security terms. It was all personal attacks.

After the memo leak, former Google CEO Eric Schmidt publicly backed Dario, saying "Dario is right, this is one of the most important decisions our society faces." Meanwhile, OpenAI CEO Altman himself admitted in an internal memo that signing the Pentagon contract just hours after Anthropic was banned "looks opportunistic and hasty."

Betting on Both Sides: Palantir's Awkward Position

The other half of the memo dismantles the position of Palantir, the U.S.'s largest defense data analytics company, with a market cap of about $350 billion.

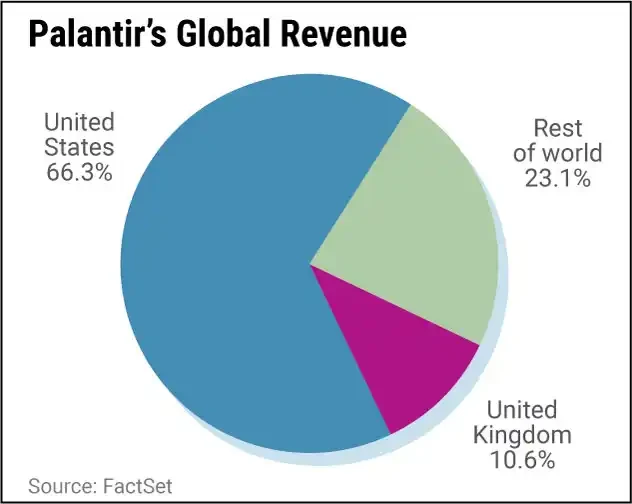

Palantir doesn't build models or chips. Think of it as a government-specific OpenClaw: just as OpenClaw is the middle layer that connects large models like Claude and GPT to your chat tools and workflows, Palantir connects other companies' AI models to the military's classified data systems, allowing these models to read intelligence, run analysis, and perform target identification. 54% of its revenue comes from the government, and the U.S. market accounts for 74% of its total revenue. Its relationship with the Pentagon isn't cooperation; it's symbiosis.

At the end of 2024, Anthropic entered the Pentagon's classified network through Palantir, and Claude became the first frontier AI model deployed in the U.S. military's classified systems. In July 2025, the Pentagon gave Anthropic a $200 million contract. The contract ceiling for the Maven Smart System (the Pentagon's flagship AI project for intelligence fusion and target identification), operated by Palantir, was raised to $1.3 billion. Claude runs underneath systems used by six U.S. combatant commands and NATO.

Then things started going wrong. In January 2026, during the U.S. military operation to capture Venezuelan President Maduro, Claude took part in intelligence analysis through the Palantir platform. Anthropic later asked Palantir whether Claude had been used in the decision to fire. Palantir forwarded the question to the Pentagon. The military concluded that an AI supplier was conducting an after-the-fact review of a military operation, and from that point the relationship was irreversibly fractured.

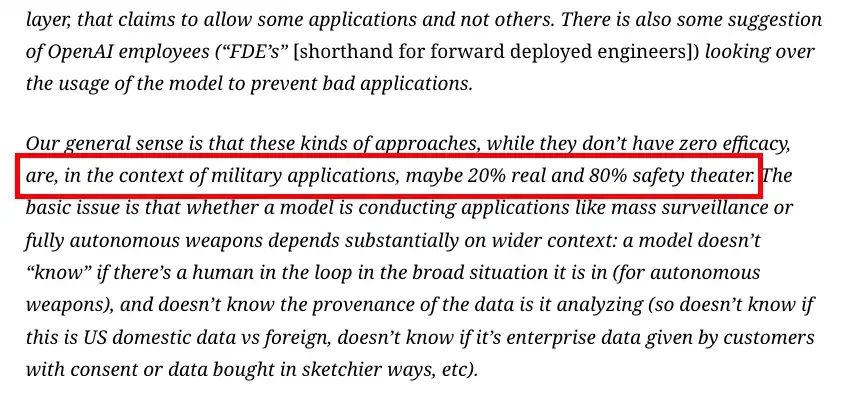

At the critical moment when negotiations between Anthropic and the Pentagon were deadlocked, Palantir poured fuel on the fire: it pitched a self-developed "classifier" safety solution to the Pentagon, claiming it could use machine learning to judge automatically whether each use of Claude crossed a red line. The implication was: even if Anthropic refuses to sign an unrestricted contract, we can manage its model ourselves. This solution essentially gave the Pentagon an out. If Palantir says it can manage the model, Anthropic's safety terms are redundant.

Dario dismantled this solution in the memo. He said it's "about 20% real, 80% show." The reasons: a model cannot judge whether it is in the loop of an autonomous weapons system; it doesn't know whether the data it is analyzing comes from foreign sources or from U.S. citizens; it doesn't know whether the data was obtained with user consent or purchased through gray channels; and jailbreak attacks are frequent and easy to carry out. Four problems, and Palantir's classifier can't answer any of them.

Dario said Palantir's real understanding of Anthropic's position was: "You have some unhappy employees and need to give them something to placate them."

On March 3, Palantir CEO Alex Karp delivered a broadside, without naming names, at the Washington Defense Tech Summit hosted by top Silicon Valley VC Andreessen Horowitz: "If Silicon Valley thinks it can take everyone's white-collar jobs and then screw the military, you're retarded." Everyone knew who he was talking about. But what he didn't mention was: the company he called "uncooperative" is precisely the core AI supplier on his own platform.

Palantir sold an AI security layer to the Pentagon. The AI running in that security layer is Anthropic's Claude. Anthropic's CEO says this security layer is a show. Palantir helped the Pentagon find a reason to kick out Anthropic. As a result, after kicking out Anthropic, the one hurting the most is Palantir itself.

Dismantling the Engine Hurts More Than Imagined

Reading this, one naturally thinks: Claude is just the underlying model, so can't they just switch to OpenAI's GPT or xAI's Grok? Like switching the default model in OpenClaw?

It's not that simple. Reuters today cited two sources familiar with the matter: a large number of prompts and workflows in the Maven Smart System are built around Claude. This isn't just changing an API endpoint. Prompt chains, workflows, output formats, and safety audit processes are all tuned to Claude's behavior patterns. Changing models means rebuilding and retesting a complete set of processes for military intelligence analysis and target identification. Sources said Palantir needs to "rebuild parts of the software."

The Maven contract ceiling is $1.3 billion, covering through 2029. Deployment spans six U.S. combatant commands and NATO. The U.S. National Geospatial-Intelligence Agency, the lead agency for geospatial intelligence (GEOINT), has scheduled Maven to begin delivering "100% machine-generated" intelligence to combatant commanders by June 2026. Now the engine needs to be swapped, and this timeline is likely to slip. Analysts at Wall Street investment bank Piper Sandler said Anthropic is deeply embedded in military and intelligence systems, and that "accessing and negotiating alternative technologies takes time and resources, resources that could be used on growth opportunities."

Michael Burry, the inspiration for *The Big Short* and a well-known Palantir short-seller, added another jab: the six-month transition period precisely shows that the stickiness is in Claude's technology, not Palantir's platform. If Claude could really be switched as easily as changing models in OpenClaw, why would the Pentagon give a six-month transition period?

Wall Street doesn't care about this. After Anthropic was banned, boutique tech investment bank Rosenblatt raised Palantir's price target from $150 to $200, and UBS also upgraded its rating. On March 4, Palantir's stock rose 3.28%. Meanwhile, between February 20 and March 3, CEO Karp and co-founder Peter Thiel sold over $400 million worth of Palantir stock. Analysts are shouting "buy," while the founders are selling.

On the same day the memo was exposed, things took another turn.

On March 4, Dario told investors at the Morgan Stanley Technology Conference that Anthropic is "trying to de-escalate and reach an agreement that works for both sides" with the Pentagon. He said Anthropic and the Pentagon have "far more in common than differences." According to sources familiar with the matter, over the five days of the ban, Anthropic executives privately expressed regret to the Pentagon about their earlier communication style.

But the leak of the memo may have stirred things up again. Axios reported that White House officials believe Dario's attacks on the Trump administration in the memo "could ruin any chance of reconciliation." One government official's exact words were: "You can't trust that Claude won't secretly execute Dario's personal agenda in a classified environment."

Interestingly, OpenAI is also helping. Altman, when signing the Pentagon contract, proactively asked the government to "offer the same terms to Anthropic," and publicly stated his opposition to labeling Anthropic a "supply chain risk." He called it a "very bad decision."

The Pentagon gave Anthropic 48 hours to make a decision. It has been over a week since Palantir started dismantling the engine. And now, both sides have started talking again.