Within 48 hours of its ban, Claude tops the App Store charts

- Core Viewpoint: OpenAI's contract with the Pentagon triggered a massive user boycott of ChatGPT and a shift to Claude. This is not just commercial competition; it highlights a fundamental divergence in the safety philosophies of Anthropic and OpenAI regarding military AI applications: the former attempts to proactively set limits in legal gray areas, while the latter relies on the existing legal framework.

- Key Elements:

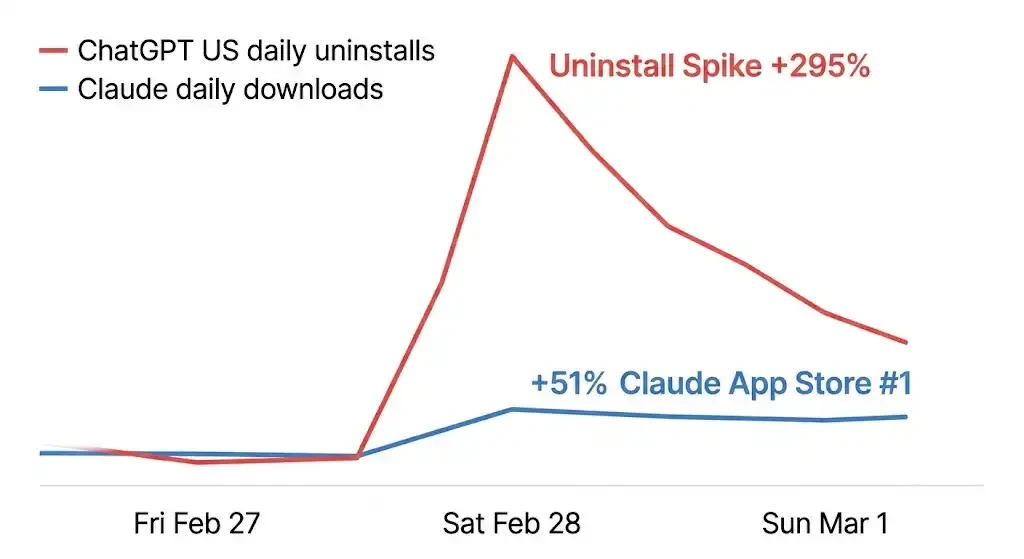

- Intense Market Reaction: The incident caused ChatGPT's daily uninstall rate in the US to surge by 295%, one-star reviews to increase by 775%, while Claude's downloads rose by 51%, topping the App Store's free charts.

- Precise Product Counterattack: Anthropic promptly launched a "memory migration tool" and made memory features free, significantly lowering the switching cost for users moving from ChatGPT.

- Contract Clause Divergence: The core of the controversy is the Pentagon's requirement that AI be usable for "all lawful purposes." Anthropic refused the clause, insisted on two explicit prohibitions, and lost the contract; OpenAI accepted the clause but said its contract contained the same protections.

- Safety Philosophy Difference: OpenAI's bottom line is "not doing illegal things," while Anthropic's extends to "things not prohibited by law but deemed inappropriate," leading to different commercial choices and internal disputes.

- Two Sides of Brand Narrative: Anthropic's long-standing narrative of "preventing civilization-level risks" cost it the military contract and got it labeled a security risk, yet the same narrative unexpectedly won strong moral support from ordinary users and drove its user surge.

- Disparate Commercial Impact: The military contract Anthropic lost is worth about $200 million, but its annualized revenue reaches $14 billion, with a recent valuation of $380 billion, indicating the potential value from user growth far exceeds the contract loss.

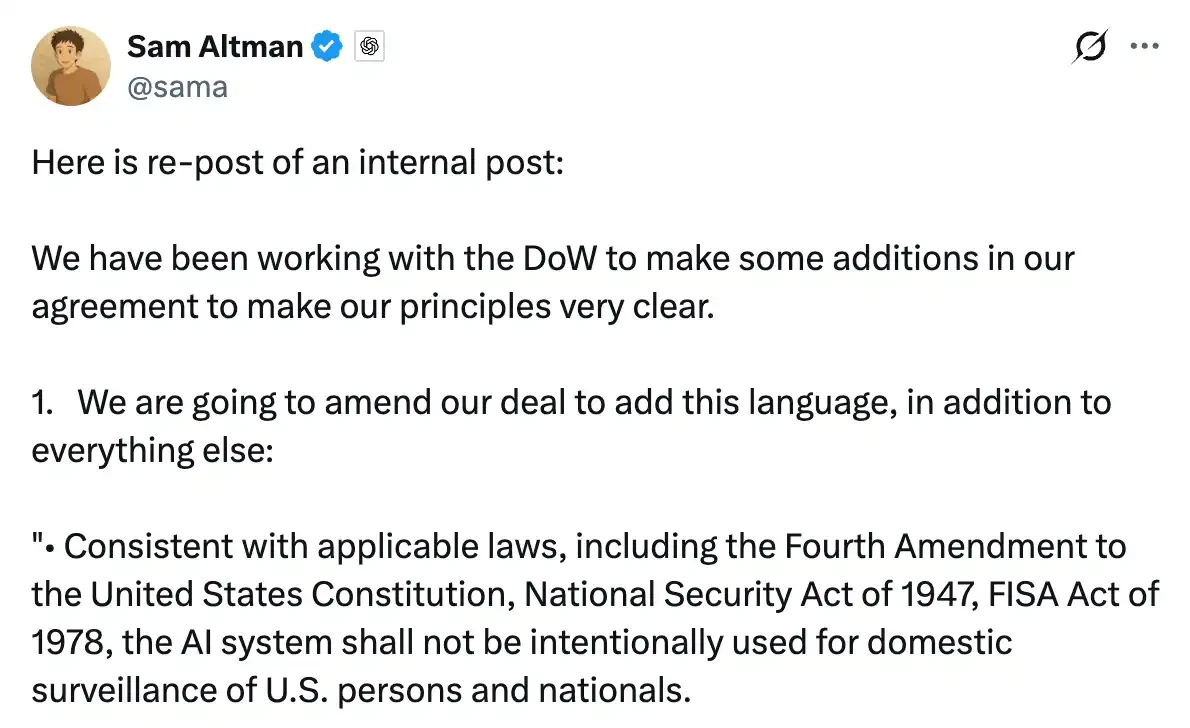

On Saturday morning, Altman reposted a screenshot of an internal letter on X.

The letter, sent to OpenAI employees late Thursday night, said the company was in talks with the Pentagon and that he hoped to help "de-escalate the situation." He posted it with a few lines of commentary, in effect offering a public account of what had happened over the past few days.

By the time he posted it, Claude had already risen to number one on the US App Store's free app chart. Only a day earlier, ChatGPT had held that spot.

Sensor Tower's data captured what happened over the following hours. On Saturday alone, ChatGPT's US uninstalls surged 295% compared with a typical day, and 1-star reviews jumped 775%. Claude's downloads, meanwhile, rose 51% in a single day. A wave of "Cancel ChatGPT" posts appeared on Reddit, with users sharing screenshots of canceled subscriptions; one commented, "fastest install of my life." A website called QuitGPT.org went live, claiming that 1.5 million people had already taken action.

On Monday, the influx was large enough to cause a widespread Claude outage. The company the federal government had just labeled a "supply chain security risk" now had its servers straining under a surge of users.

A Precise Product Counterattack

On the same day the uninstall wave was brewing, Anthropic launched a memory migration tool.

The feature itself is simple. Users copy a prompt into ChatGPT, have it output all of its stored memories and preferences, and paste the result into Claude. Claude imports it in one click and picks up where ChatGPT left off. The copy on the official website is a single sentence: "switch to Claude without starting over."

What matters most about this tool is its timing.

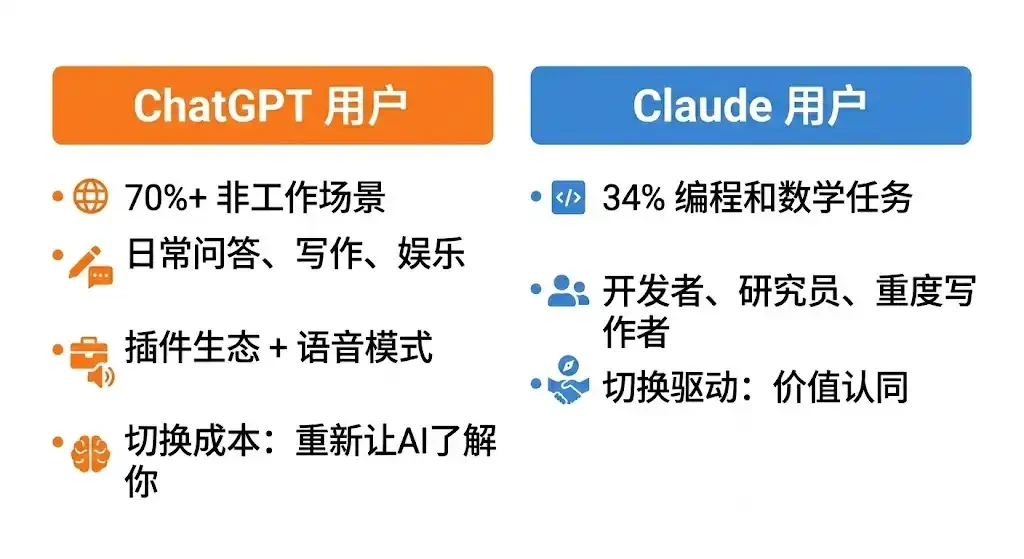

OpenAI's own data shows that by mid-2025, over 70% of ChatGPT usage was for non-work purposes, including everyday Q&A, writing, entertainment, and information seeking. For many people, ChatGPT was their first encounter with AI, and it became embedded in daily life through its vast plugin ecosystem, Voice Mode, and deep third-party integrations. For these users, switching is not just a matter of downloading a new app; it means an AI that doesn't know you has to learn who you are from scratch. Accumulated memory had been the strongest reason to stay.

Anthropic's own research shows that Claude's usage is highly concentrated. Programming and math tasks account for 34%, the largest single category, and education and scientific research have been the fastest-growing areas over the past year. The core users are developers, researchers, and heavy writers: a more rational group, more likely to switch tools based on a clear judgment of value, as long as the migration cost is low enough.

The memory migration tool pushes that cost close to zero. At the same time, Anthropic opened its memory feature, previously exclusive to paid subscribers, to all free users.

Many of the new arrivals, however, were not Claude's original target audience.

Judging from social media, a common first reaction among ordinary users arriving from ChatGPT is: "It's different." Some feel Claude's answers go deeper and that it pushes back instead of agreeing with everything. Others find its writing cleaner but miss image generation and interactive features such as ChatGPT's Voice Mode.

Some came looking for a "more obedient ChatGPT replacement" and instead found a product with a stronger personality that takes time to get used to. A TechRadar migration guide titled "I wish someone had told me these things" was widely shared over these few days. Its core message: Claude and ChatGPT work on fundamentally different logic; Claude is more like a colleague with its own views, ChatGPT more like a general-purpose assistant.

This difference reflects each product's original positioning, but the event unexpectedly amplified it. Users flocked to Claude for moral reasons, only to find a product unlike what they expected: a more discerning, more boundary-conscious AI. That could have driven them away, but at this particular moment it became a reason to stay. If you believe in a company's stance, you are more willing to accept the logic of its product.

A few days after the launch, Anthropic released figures: free active users were up more than 60% from January, and daily new sign-ups had quadrupled. The traffic also caused an outage in which thousands of users reported login failures; it was resolved within hours.

Three Words in the Contract: What OpenAI Said and What It Did

Anthropic was the first commercial company to deploy an AI model on the US military's classified network, through a partnership with Palantir, under a contract worth approximately $200 million. Over the past few months, however, the relationship deteriorated. The core dispute was a single clause: the Pentagon demanded that the model be available for "all lawful purposes," with no conditions attached. Anthropic insisted on writing in two exceptions: no use for mass surveillance of US citizens, and no use in fully autonomous weapon systems.

Around February 20, reports emerged that an Anthropic executive had asked partner Palantir how Claude was used in the US military's January operation to capture Venezuelan President Nicolás Maduro, and the military strongly objected to the inquiry. On Thursday, the Pentagon issued an ultimatum, giving Anthropic CEO Dario Amodei until 5 PM that day to respond.

Amodei issued a statement before the deadline saying the company could not accept the current terms, "not because we oppose military use, but because in a small number of cases, we believe AI has the potential to undermine rather than defend democratic values." Trump then ordered all federal agencies to phase out Anthropic products within six months, and Defense Secretary Pete Hegseth labeled the company a "supply chain security risk," a designation usually reserved for companies tied to foreign adversaries. The contract was terminated.

The vacancy was filled quickly. Later that same day, OpenAI announced its own deal with the Pentagon. Altman had staked out his position in Thursday's internal letter: this was already "an industry-wide problem," and OpenAI shared Anthropic's "red lines" against mass surveillance and autonomous weapons. The agreement reached on Friday deploys OpenAI's models on the military's classified network, restricted to cloud-only operation with engineers deployed for oversight, and OpenAI said the same two restrictions were written into the contract.

Altman later took questions on X for several hours. One user asked why the Pentagon accepted OpenAI but banned Anthropic. His answer: "Anthropic seemed more focused on specific prohibitions in the contract rather than citing applicable law, and we are comfortable citing law."

The answer describes a difference in method, but it also exposes the real controversy.

Anthropic's talks broke down over a phrase the Pentagon insisted on: AI systems may be used for "all lawful purposes." Anthropic refused because, in a national security context, that phrase is not a fixed boundary. In many areas the law has not caught up with AI capabilities, and what counts as "lawful" would be settled by the government's own interpretation. OpenAI accepted the phrase while saying it had negotiated the same protections into the contract.

Legal experts who later examined the contract terms OpenAI made public pointed to two specific wording problems.

The surveillance clause states the system shall not be used for "unconstrained" surveillance of US citizens' private information. Samir Jain, Vice President of Policy at the Center for Democracy & Technology, pointed out that the wording here implies that a "constrained" version of surveillance is permitted. Under the current legal framework, the government can legally purchase citizens' location records, browsing history, and financial data from data brokers and have AI analyze this data, which technically does not constitute "illegal surveillance." Amodei gave precisely this example in a subsequent interview with CBS.

The weapons clause says the system shall not be used for autonomous weapons "in circumstances where law, regulation, or departmental policy requires human control." The qualifier means the restriction applies only where other rules already require human control, so its binding force depends entirely on existing policy, and the Pentagon can change its internal policies at any time. Legal scholar Charles Bullock wrote on X that the weapons clause relies on DoD Directive 3000.09, which requires that commanders and operators be able to exercise "appropriate levels of human judgment over the use of force," a standard open to flexible interpretation.

OpenAI's response to these criticisms is that its models run only in the cloud, which architecturally rules out direct integration into weapon systems. It also argues that the contract's citations of specific laws bind more tightly than bespoke textual prohibitions, because law is a tested framework. Altman himself acknowledged in the Q&A: "If we need to fight that battle in the future, we will, but it obviously exposes us to some risk."

This isn't a matter of one company being willing to compromise and another holding to principles; it's two fundamentally different safety philosophies. OpenAI's bottom line is: I won't do illegal things. Anthropic's bottom line is: I also won't do things that the law hasn't yet prohibited but I believe shouldn't be done.

The divergence has also opened rifts inside OpenAI. Last week, several OpenAI employees signed an open letter supporting Anthropic's stance and opposing its designation as a supply chain risk. Alignment researcher Leo Gao publicly questioned whether the company's contract offered sufficient protection. Critical messages appeared in chalk on the sidewalk outside OpenAI's San Francisco office, while supportive messages appeared outside Anthropic's. Altman's hours-long Q&A on X was, to a large degree, aimed at people inside his own company who had sided with Anthropic.

Two Outcomes of the Same Narrative

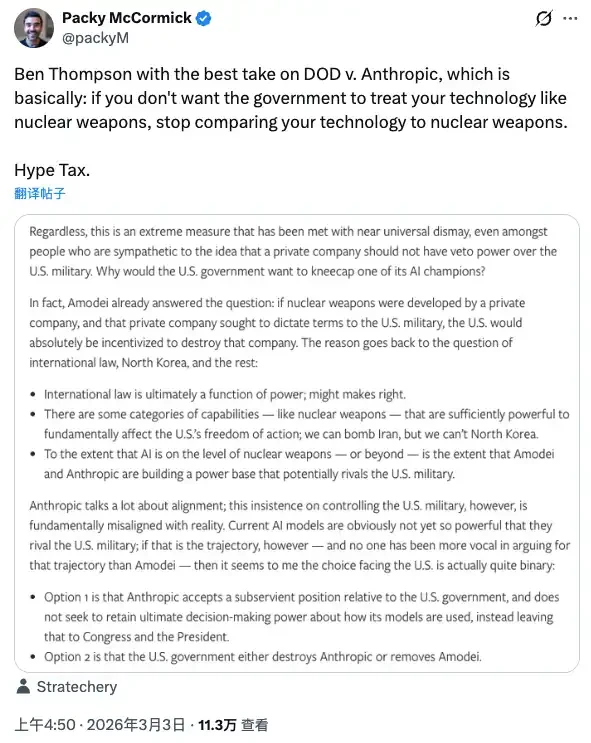

For years, Anthropic has framed its safety mission as "preventing civilization-level risks," equating the potential threat of frontier AI with nuclear weapons and positioning itself as the gatekeeper on this line of defense. This narrative is core to its brand and its way of gaining trust in the capital markets.

As events unfolded, tech commentator Packy McCormick invoked a concept from Ben Thompson: the "Hype Tax." If you build influence with an extreme narrative, you pay for it when that narrative meets real power. Compare AI to nuclear weapons, and the government will treat you accordingly.

Anthropic paid that price: it lost a contract, was labeled a security risk, was singled out by the President, and had all its products ordered out of federal systems within six months.

But over the same weekend, the same narrative had the opposite effect on another front.

Ordinary users didn't see contract clauses, legal interpretations, or debates about safety philosophy. What they saw was simple: one company said no and was thrown out by the government; another said yes and got the contract. They judged by their own standards, and the result was a 295% surge in uninstalls, the top spot on the App Store, and overloaded servers.

It was a rare collective moral statement by consumers in the history of the AI industry.

Anthropic didn't spend a single dollar of PR budget on this. Amodei's statement was measured: it did not call for user support, did not name OpenAI, and did not cast the company as a martyr. The shift happened anyway.

One detail deserves attention. What drove users to Claude was OpenAI doing something entirely reasonable from a business perspective: signing an agreement when a competitor had been banned and a contract was up for grabs, while saying it had negotiated the same protective clauses. Altman also said explicitly that he did this partly to help de-escalate the situation and keep Anthropic from further harm.

Whatever the motive, OpenAI got the contract and Anthropic gained users. Both sides paid a price and both gained something, just measured in different units.

There's one more thing worth mentioning here.

The Pentagon contract Anthropic lost is worth approximately $200 million.

Anthropic's current annualized revenue is $14 billion, and its target is $26 billion for 2026.

Last month, Anthropic completed a $30 billion funding round at a valuation of $380 billion.

The arithmetic is easy. But another question remains open: once AI is used at scale in military decision-making, will the "technical guardrails" written into contracts, and the engineers deployed to oversee them, actually hold, whether they are OpenAI's or the ones Anthropic originally demanded?

That question is not in any publicly available contract.