Is Musk really the victim?

- Core Thesis: As of May 2026, the legal dispute between OpenAI and Elon Musk reveals the complex journey of the AI giant from non-profit idealism to commercial reality. Evidence from the trial indicates that internal conflicts existed as early as 2017, and the non-profit mission has gradually been eroded by commercial control, computational power demands, and massive capital, while the promise of "benefiting all humanity" lacks institutional safeguards in practice.

- Key Elements:

- In 2017, OpenAI internally recognized that the non-profit structure could not support AGI development and began discussing for-profit plans, marking the early emergence of cracks in the organizational structure.

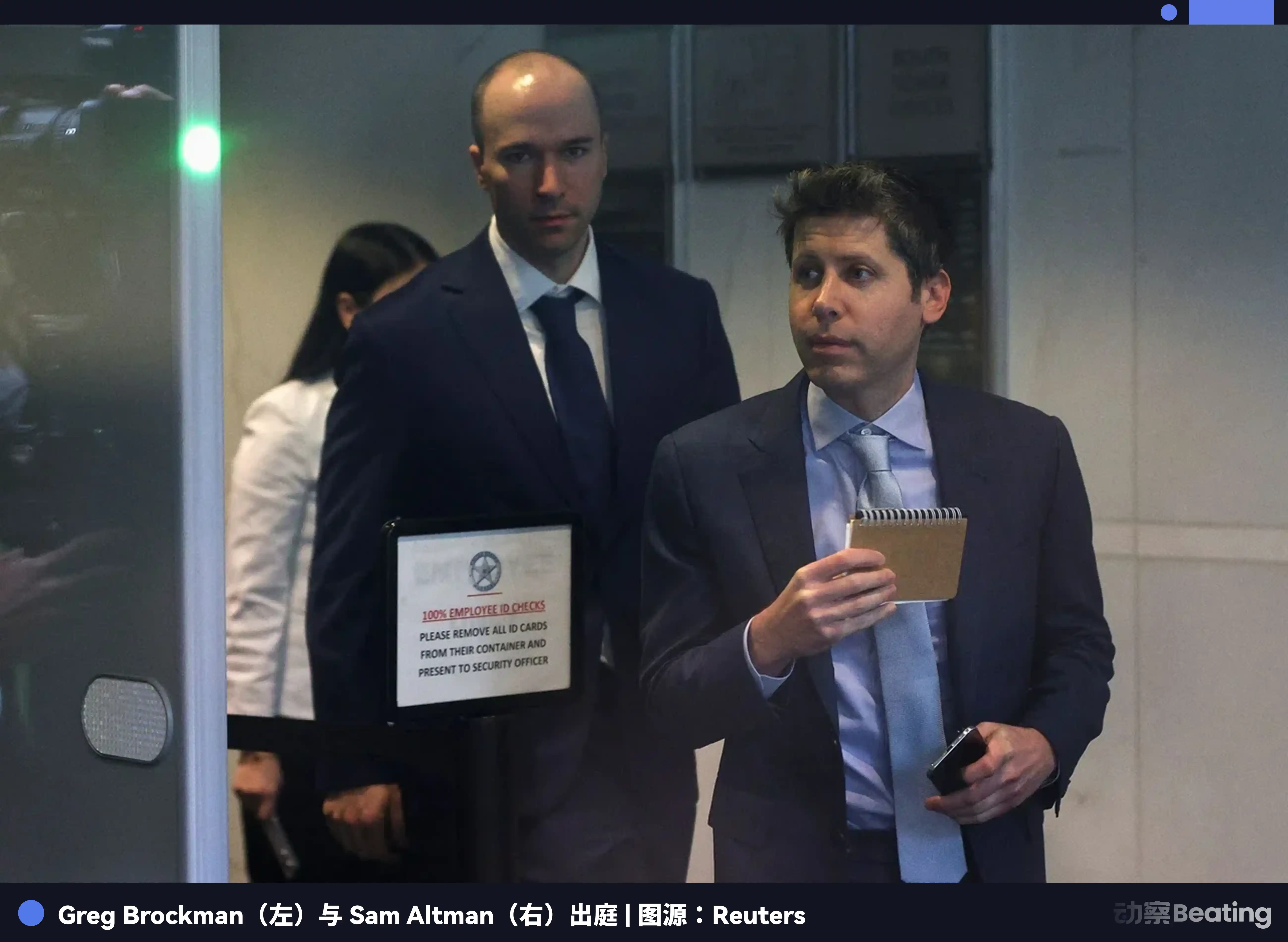

- The trial revealed Greg Brockman's private diary, which records wealth goals and anxiety about the moral boundaries of being "non-profit." His OpenAI equity is valued at nearly $30 billion.

- Elon Musk is portrayed as both concerned about AI risks and eager for control. He once proposed that Tesla absorb OpenAI. At its core, the legal battle is a clash over mission and a struggle for control.

- Sam Altman's credibility issues are placed at the center of the problem. Several former colleagues (including Sutskever and Murati) called him a "liar" in court, undermining his qualification as the guardian of the mission.

- Microsoft's deep integration ($13 billion investment) and control over computational power made it difficult for the non-profit board to exercise independent oversight after the board crisis of 2023, with the mission being overwhelmed by commercial realities.

Original author: Sleepy

In May 2026, at the federal court in Oakland, OpenAI's flattering filters were stripped away layer by layer.

What was presented to the jury was a chaotic Rashomon: Greg Brockman's private diary, a tangle of anxiety and calculation; Elon Musk's unrelenting hold on control; persistent questions about Sam Altman's integrity; Microsoft's vast shadow over computing power and capital; and the dramatic yet hastily concluded boardroom coup at the end of 2023.

Amidst all this mess, there was one issue that sounded grand but became strikingly specific in the courtroom: Did OpenAI's original promise to "benefit all of humanity" still hold true?

As of May 15, 2026, the trial has yet to reach a final verdict, with the jury's advisory opinion still pending. But one thing has already happened for certain: OpenAI has been dragged back from mythology into the real world.

In recent years, OpenAI has often been told as a story about the future: the explosion of ChatGPT, Altman's global tours, large models embedding themselves into offices, schools, phones, and corporate workflows. This was a company born with a quasi-religious sense of purpose, speaking of humanity's fate, the awakening of intelligence, the boundaries of safety, and the dawn of tomorrow, like a lighthouse built ahead of its time for humanity.

But the court doesn't care about that. The court asks about facts.

"All of Humanity" Takes the Witness Stand

In 2015, OpenAI was still pristine at its birth.

It declared itself a non-profit AI research company, aiming to develop digital intelligence in a way that would most broadly benefit all of humanity, unconstrained by the need to generate financial returns.

Altman and Musk were co-chairs, Brockman was CTO, and Ilya Sutskever was research director. Back then, OpenAI seemed to embody the last vestiges of Silicon Valley's golden age idealism—the brightest minds weren't serving a specific company, but securing humanity's future.

A decade later, that promise was brought into court.

Musk's side argues that Altman, Brockman, and OpenAI secured his funding and trust based on the non-profit mission, only to later shift to a for-profit structure, benefiting themselves and Microsoft.

OpenAI's side argues that Musk's money was a donation with no specific strings attached; he was aware of discussions about a for-profit structure long ago but couldn't secure control; he's suing now out of regret for leaving and because his own company, xAI, has become a competitor.

Neither side's account was flattering.

Musk positioned himself as the guardian of the mission. OpenAI positioned him as a founder who lost control. One says, "You stole a charity," the other says, "You just couldn't control it." In the end, the most awkward thing wasn't which side told a better story, but that the repeatedly invoked "all of humanity" never actually had a seat at the table.

The term "all of humanity" appeared in founding announcements, charters, speeches, and media coverage, occupying the moral high ground.

But in court, it was dissected into pieces of evidence: Did Brockman's diary represent his true intent? What did the 2017 emails reveal? What exactly was transferred when OpenAI LP was created in 2019? Did Microsoft's cloud and money alter the company's direction? Could the company's plea of "trust us" survive the questions about Altman's integrity?

The more an AI company claims to represent humanity, the more specifically it should be asked: Which humanity do you mean? Who signs for these people? Who can remove you? Who can audit the books? Who can say no?

The court couldn't answer these questions for the public, but it forced them into the open.

OpenAI's story, therefore, no longer reads like the coming-of-age tale of a company from the future, but more like a settling of old scores. Once the books were opened, people realized the cracks didn't appear only after ChatGPT's explosive success.

The Cracks of 2017

OpenAI didn't change suddenly.

If you only look from ChatGPT onwards, you'd mistakenly think OpenAI was driven by money after success, like many companies that talk ideals first and then do business.

But the trial turned back the clock to 2017. Back then, OpenAI didn't have today's prominence, and AGI wasn't yet a buzzword on everyone's lips. But the founding team had already encountered a problem: if they were truly going to build general artificial intelligence, donations and passion alone would never be enough.

This is the most difficult moment for Silicon Valley idealism. The bigger the ideal, the bigger the bill. The bigger the bill, the harder it is for the organization to stay clean. All those grand speeches about humanity's future eventually boil down to chips, servers, engineers' salaries, cloud resources, and long-term capital. Without these, AGI is just a wish; with them, the non-profit structure becomes increasingly unsustainable.

In 2017, OpenAI was already internally discussing various paths: a for-profit affiliate, a B-corp, partnerships with existing companies, or attaching itself to Tesla. Musk proposed making OpenAI reliant on Tesla for funding. OpenAI's side countered that Musk wasn't simply opposed to for-profit status; control was his non-negotiable demand.

That year also provided one scene worth remembering: Dota.

After OpenAI's AI defeated top human players in Dota 1v1, the team realized more acutely than ever that this thing could potentially become huge. The trial mentioned a discussion at Musk's San Francisco house, later referred to as the haunted mansion meeting, where they celebrated the technical breakthrough and debated whether OpenAI should go for-profit.

Many companies start reinterpreting themselves after a product succeeds. OpenAI did it earlier. Before it became the giant it is today, the founders already knew the non-profit structure couldn't sustain the AGI narrative. OpenAI's ideal needed a heavier machine to sustain it from the start.

Thus, an organization seemingly about scientific safety quickly entered control negotiations.

Who would hold the steering wheel? Musk or Altman? The non-profit board or future investors? Or that never-truly-present "all of humanity"?

Looking at Musk now, he was certainly an early key funder and helped build OpenAI's non-profit narrative. But he was also one of the first in this story to see the immense power AI could bring. And upon seeing it, he wanted to hold onto it tightly himself.

Musk's Steering Wheel

Musk repeatedly emphasized one thing in court: OpenAI was stolen.

This phrasing is powerful. It compresses a complex organizational shift into a phrase anyone can understand. A charity meant to serve humanity turned into a massive commercial machine. It sounds like theft of property and a moral betrayal.

But the story in court wasn't that simple.

OpenAI's lawyers focused their cross-examination of Musk on dismantling his image as a pure victim. They produced emails and documents, pressing him on whether he knew early on that OpenAI might need a for-profit structure, and whether he had tried to absorb OpenAI into Tesla or otherwise gain control.

Musk didn't like being dissected this way. He said the questions were trying to "trick me." The judge repeatedly asked him to give direct answers. When he tried to steer the conversation towards AI extinction risks, the judge reminded him this case wasn't going to discuss extinction very much.

These scenes are quite revealing of Musk.

He is accustomed to grand narratives. Humanity's fate, AI risk, Mars, free speech, civilizational survival: these are his favorite topics. But the court demanded he answer smaller, sharper questions: When did you know? Did you agree? Did you want control? Was the money you gave OpenAI a donation or an investment?

The contradictions in Musk mirror the contradictions in OpenAI's story. He might genuinely fear AI becoming uncontrolled, and he might genuinely believe OpenAI has strayed from its mission. But this doesn't mean he wouldn't also want the company to operate according to his will.

The more one believes they are saving humanity, the easier it is to stubbornly think they should hold the steering wheel.

This isn't just Musk's problem. It's the undercurrent of many grand Silicon Valley narratives. They tend to dress up personal will as a human mission, the desire for control as a sense of responsibility, and organizational power as a necessity for the future. Musk just displays this more outwardly, more intensely, and more visibly.

So, Musk in this case isn't just the accuser; he is also evidence of the problem itself.

Brockman's Diary

Greg Brockman wasn't meant to be the most prominent figure in this drama.

Musk is too theatrical, Altman is too central, Sutskever is too tragic, Microsoft is too large. Brockman sits in between—a core early founder of OpenAI and a key figure in the company's operational reality. But this trial pushed him into the spotlight because his private diary became evidence.

In the second week of the trial, Brockman was repeatedly questioned about his diary, emails, and text messages. Musk's side used these materials to prove that he and Altman had self-interested motives from early on. OpenAI's side argued that Musk was taking things out of context.

The diary contained wealth goals, anxiety about the company's revenue path, and phrases like "making the billions." More glaringly, it included a self-reminder that he could not "steal" the non-profit from Musk without facing moral bankruptcy. Musk's lawyers seized upon these entries repeatedly. Brockman denied deceiving Musk and said these private writings weren't meeting minutes but stream-of-consciousness personal notes.

A diary is not a verdict. It cannot directly prove they were committing fraud. It might contain rough thoughts written down in moments of fatigue, anxiety, and self-debate. Every writer knows that private notes don't equal final positions, let alone the complete truth.

But the real importance of Brockman's diary isn't what crime it proves. It's that it shows they knew where the boundaries were. The early core figures at OpenAI weren't stumbling blindly towards commercialization. They knew the "non-profit" shell carried moral weight, knew Musk's early funding was based on trust, and knew it would seem dishonest to firmly claim non-profit status while planning to shift to a different structure months later.

Knowing, however, didn't mean stopping.

During the trial, Brockman disclosed that the equity he held in OpenAI was valued at nearly $30 billion.

While this amount isn't cash or realized wealth—it's equity value based on valuation, still dependent on the company's prospects and deal structure—the symbolic significance is powerful. A man who once worried about moral boundaries in his private diary later sat in court being asked about his nearly $30 billion stake in OpenAI. Public mission and private wealth were placed on the same table at that moment.

Brockman is like many key figures in successful organizations: smart, dedicated, capable, possessing a sense of shame, but also able to convince himself, step by step, to keep moving forward.

This is where OpenAI's complexity lies. It's not a group of bad people conspiring to destroy an ideal. Rather, it's a group of smart people finding reasons to proceed at every junction, eventually embedding their initial promise into a machine they themselves might not fully control.

And at the center of this machine is Altman.

Altman's Debt of Trust

During this trial, Sam Altman was questioned about more than just the truth of specific statements. Musk's side fundamentally attacked his right to rule.

In closing arguments, Musk's lawyer Steven Molo placed Altman's integrity issues at the core. He told the jury that five people who worked with Altman for years—Musk, Sutskever, Murati, Toner, and McCauley—all called him a "liar."

These five names are more important than the accusation itself.

Musk is an adversary and could be seen as having a conflict of interest. But Sutskever was a co-founder and former chief scientist of OpenAI; Murati was the CTO and briefly interim CEO in 2023; Toner and McCauley were former board members. These are people within OpenAI's internal power structure.

We cannot simply label Altman as a good or bad person.

The feelings towards Altman within OpenAI are clearly complex. He can propel an organization to the center of the world, but also makes some core figures uneasy. He possesses immense organizational, fundraising, media, and political acumen, which is how the company got to where it is today.

When the board ousted Altman in 2023, OpenAI's official reason was that he was not "consistently candid" in his communications with the board. A few days later, Altman returned. In 2024, OpenAI published a summary of the WilmerHale investigation, acknowledging a breakdown of trust between the former board and Altman, but also concluding that the board acted too hastily: it did not notify key stakeholders beforehand, conduct a complete investigation, or give Altman a chance to respond.

These stories together constitute Altman's real debt of trust.

He is not a traditional hero. He has the face of Silicon Valley's new elite: he can talk about the mission, find money, organize talent, handle the media, negotiate with big companies, and turn a lab into a world-class company.

The more capable he is, the bigger the problem: If a company relies on his personal credibility to assure the world "we will benefit all of humanity," then his trustworthiness is no longer a matter of private character, but a matter of public governance.

Altman also mounted his own defense in court. He stated that Musk repeatedly tried to have Tesla absorb OpenAI, which wouldn't align with OpenAI's mission. He also argued that OpenAI has, in fact, created immense charitable value.

This is OpenAI's dilemma. It can claim it is still controlled by the non-profit, and that commercialization allows the non-profit to have greater value. But upon hearing this, it's hard for ordinary people not to ask: If a public mission relies on a massively valuable company and a strong CEO to protect it, is it truly a mission, or is it a loan of trust?

In 2023, the board tried to call in that loan. It failed.

When Mission Loses to Reality

OpenAI's board wasn't entirely powerless.

On paper, the non-profit board holds mission oversight. When OpenAI LP was created in 2019, OpenAI explained it as a capped-profit structure: employee and investor returns were capped, with excess profits going to the non-profit, which retained overall control. This design sounded like a compromise—allowing fundraising without fully surrendering the mission.

The problem was that reality developed much faster than the charter.

After 2019, OpenAI's ties to Microsoft deepened. Microsoft poured in capital, provided cloud and supercomputing resources, and gained commercialization rights. Court materials show that a significant portion of OpenAI's IP and employees were transferred to the for-profit entity. By the ChatGPT era, OpenAI was no longer just a research institution, but a commercial system connecting users, customers, developers, cloud resources, investors, and global competition.

Such a system can't be stopped at the push of a button.

Microsoft CEO Satya Nadella was asked in court about Microsoft's $13 billion investment in OpenAI and the potential ~$92 billion return if successful. His response was essentially that if the pie gets bigger, the non-profit also benefits.

This logic is classic: commercialization isn't a departure from the mission, but a way to expand the mission's funding sources.

Yet in the same testimony, text messages between Nadella and Altman about launching the paid version of ChatGPT were also mentioned. Nadella asked when the paid version would launch. Altman said computing power was insufficient and the user experience wasn't good enough yet, but Nadella was impatient, urging speed.

Once OpenAI and Microsoft were bound together, product timelines, customer commitments, computing power constraints, and commercial returns were all intertwined. The board could discuss the mission, but Microsoft needed to ensure customer experience. The board could worry about safety issues, but users and enterprises were already using the product. The board could fire the CEO, but employees, investors, partners, and public opinion would immediately push back.

Nadella's perspective on the 2023 board crisis was also significant. He stated he wasn't given a clear reason for Altman's ouster and criticized the board's handling as "amateur city." More critically, he was already prepared to have Altman and other employees come to Microsoft if they couldn't return to OpenAI.

This is reality. The non-profit board appears to hold the steering wheel, but the engine, accelerator, fuel, and passengers are no longer solely under its control. When an AI company is connected to massive valuations, cloud vendors, enterprise clients, employee stock options, and global users, the mission-representing board finds it very difficult to truly apply the brakes.

The bigger the AGI narrative, the larger the computing power bill; the larger the computing power bill, the greater the need for a cloud giant; the greater the need for a cloud giant, the less the mission can be protected merely by a charter.

In the AI era, computing power isn't just a back-office resource. Computing power is power itself. Whoever provides the computing power helps define how fast a company can go, where it